AI Bubble Concerns: Veteran Manager Warns of Risk as OpenAI Faces Challenges

Veteran money manager George Noble is cautioning investors about the escalating risks within companies heavily reliant on the artificial intelligence (AI) boom, especially as OpenAI, a leading force in the sector, navigates internal turmoil and increasing competition. Noble advises a shift towards more stable investment havens.

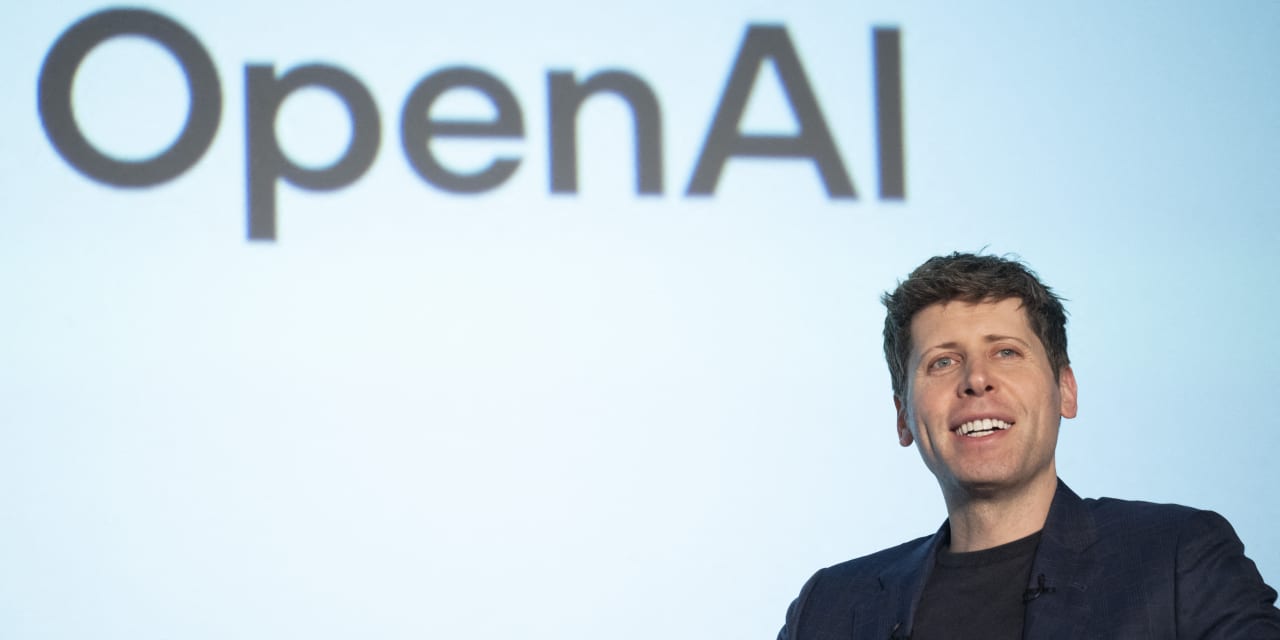

OpenAI’s Internal Struggles and Market Impact

Recent events at OpenAI, including the brief ousting and subsequent reinstatement of CEO Sam Altman, have exposed vulnerabilities within the company and raised questions about its long-term stability. These internal conflicts, coupled with growing competition from companies like Google and Meta, are creating a volatile environment for AI-adjacent investments. The initial shockwaves from the leadership dispute led to concerns about the future of OpenAI’s partnerships, most notably with Microsoft, which has invested billions in the company. Reuters reported extensively on the events.

The Risks of AI-Adjacent Plays

Noble argues that many companies currently benefiting from the AI hype may be overvalued. He points to the speculative nature of the market, where investor enthusiasm often outpaces fundamental business realities. “A lot of these AI-related stocks have run up dramatically, and the underlying businesses haven’t necessarily justified those valuations,” Noble stated in a recent interview. CNBC highlighted Noble’s concerns.

He specifically warns against companies that are primarily marketing themselves as “AI companies” without demonstrating significant revenue or a clear path to profitability. The risk, according to Noble, is a significant correction as the market matures and investors demand tangible results.

Where to Shelter Your Investments

So, where should investors turn to protect their portfolios? Noble recommends focusing on established, profitable companies with strong balance sheets and a history of weathering economic storms. He suggests considering the following:

- Large-Cap Value Stocks: Companies trading at a discount to their intrinsic value offer a margin of safety.

- Dividend-Paying Stocks: Regular dividend payments provide a steady income stream, even during market downturns.

- High-Quality Bonds: Government bonds and investment-grade corporate bonds can provide stability and preserve capital.

- Defensive Sectors: Industries like healthcare, consumer staples, and utilities tend to be less sensitive to economic cycles.

Noble also emphasizes the importance of diversification. “Don’t put all your eggs in one basket,” he advises. “Spread your investments across different asset classes and sectors to reduce your overall risk.”

The Future of AI Investment

Despite his current caution, Noble doesn’t dismiss the long-term potential of AI. He believes that AI will continue to be a transformative technology, but he anticipates a period of consolidation and rationalization in the near term. He expects that only a handful of companies will ultimately emerge as dominant players in the AI space.

“The AI revolution is still underway, but it won’t be a straight line up,” Noble concludes. “There will be bumps along the road, and investors need to be prepared for volatility.”

Key Takeaways

- OpenAI’s recent internal issues highlight the risks associated with investing in rapidly evolving AI companies.

- Many AI-adjacent stocks may be overvalued and vulnerable to a market correction.

- Investors should prioritize established, profitable companies with strong fundamentals.

- Diversification is crucial for mitigating risk in a volatile market.

- The long-term potential of AI remains significant, but a period of consolidation is likely.