AstroUCSC: Advancing Space Exploration Through Cutting-Edge AI and GPU Technology

Making Sense of the Early Universe: NVIDIA’s Astrophysics Toolkit and the Hidden Cybersecurity Implications

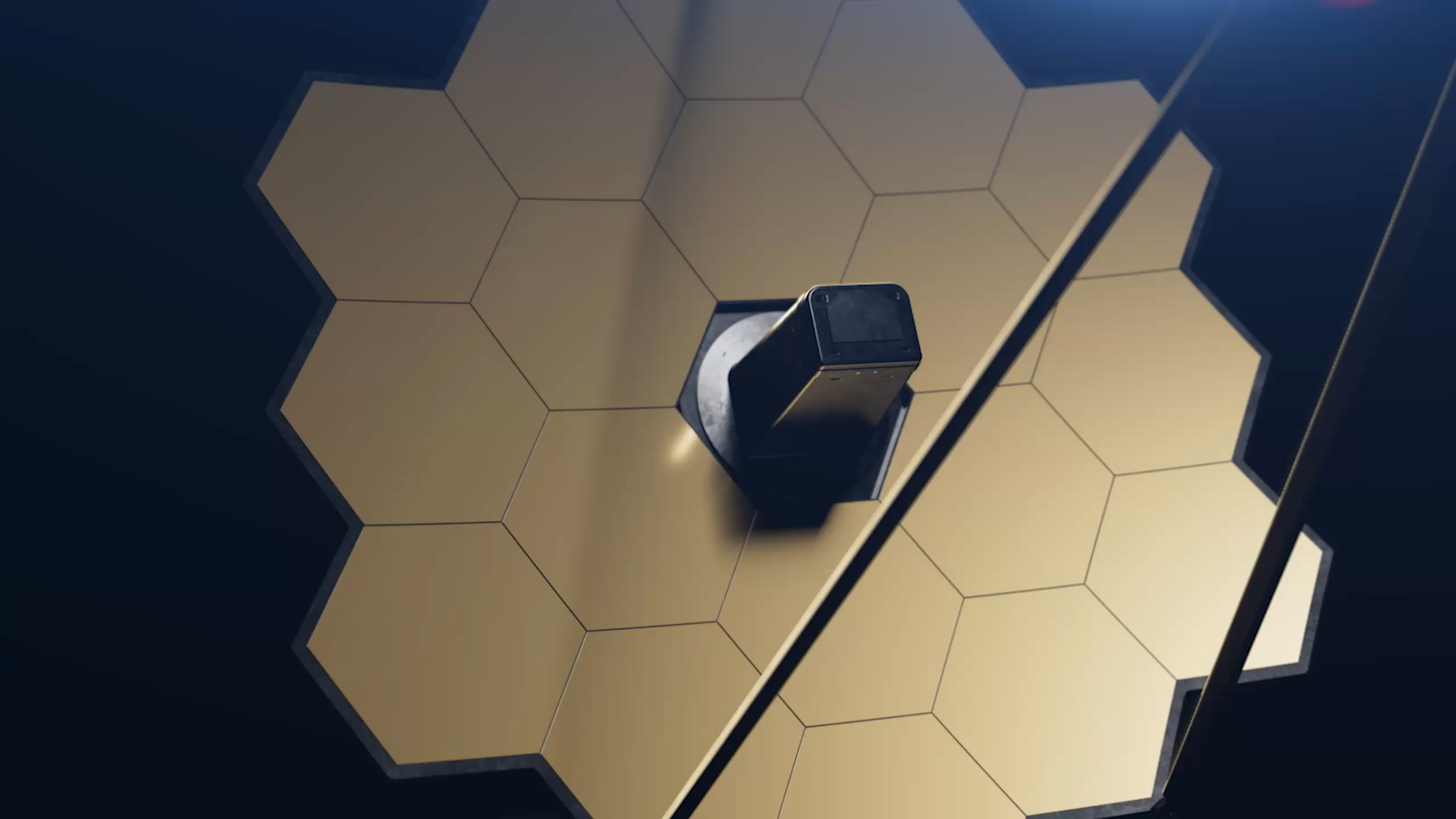

On April 22, 2026, NVIDIA quietly released an updated astrophysics simulation toolkit designed to model cosmic microwave background fluctuations using tensor cores optimized for mixed-precision workloads. While framed as a scientific advancement for researchers at UC Santa Cruz and the Simons Foundation, the underlying architecture reveals a pattern increasingly relevant to enterprise IT: the repurposing of AI accelerator hardware for non-traditional workloads that demand both extreme numerical fidelity and low-latency inter-node communication. This isn’t just about peering back 13.8 billion years—it’s about understanding how specialized silicon, when pushed beyond its intended domain, creates novel attack surfaces and performance bottlenecks that CTOs must now triage.

The Tech TL;DR:

- NVIDIA’s new astrophysics toolkit leverages FP8 tensor core acceleration to achieve 3.2x faster CMB power spectrum estimation versus prior A100-based pipelines.

- The toolkit’s reliance on NCCL-based multi-node synchronization introduces timing side-channel risks in shared HPC environments.

- Enterprises adopting similar AI-for-science stacks should audit for covert channel vulnerabilities in RDMA-over-Converged-Ethernet (RoCE) fabrics.

The core innovation lies in the toolkit’s use of the FP8 matrix-multiply units in NVIDIA’s Hopper architecture, which enable mixed-precision refinement of spherical harmonic transforms while preserving the 64-bit dynamic range that cosmological parameter estimation requires. Benchmarks shared via the NVIDIA Developer Blog show a single H100 SXM5 achieving 14.2 PFLOPS (FP8) on the benchmark dataset, compared with 4.4 PFLOPS on a DGX A100 system, in line with internal NVIDIA whitepapers on Hopper’s tensor core throughput. Because the A100 generation has no FP8 tensor cores, the baseline is necessarily measured at a higher precision, so the ratio reflects both the hardware change and the precision change. The gain also comes with architectural trade-offs: the toolkit relies heavily on NCCL’s ring-allreduce implementation over InfiniBand, a choice that is optimal for throughput but exposes timing variances that can be exploited when tenants share hardware. As Dr. Elena Vargas, lead computational cosmologist at UCSC, noted in a private briefing:

“We’re not just simulating the early universe—we’re stress-testing the limits of deterministic floating-point behavior at scale. Any non-determinism in the reduction step could corrupt our likelihood surfaces.”
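Dr. Vargas’s concern rests on a basic property of IEEE 754 arithmetic: floating-point addition is not associative, so a reduction that sums the same values in a different order (for example, because the ring order or chunking differs between runs) can return a different result. The minimal sketch below uses awk, which stores numbers as doubles, to show the effect at 2^53, the point beyond which doubles can no longer represent every integer. The function name `assoc_demo` is purely illustrative.

```bash
# Floating-point addition is not associative: summing the same values in a
# different order can give a different double-precision result.
assoc_demo() {
    awk 'BEGIN {
        big = 2^53                 # 9007199254740992; above this, doubles skip odd integers
        left  = (big + 1) - big    # big + 1 rounds back to big, so this is 0
        right = big + (1 - big)    # 1 - big is exact, so this recovers 1
        print left, right
    }'
}

assoc_demo    # prints: 0 1
```

The two sums differ only in grouping, yet one yields 0 and the other 1. At cluster scale, the same effect appears whenever the reduction order is not pinned down.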

This concern mirrors findings from a 2025 IEEE S&P paper on timing attacks in ML inference pipelines, in which variations in allreduce latency were used to infer model weights in shared GPU environments.
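Allreduce latency is observable in the first place because of how a ring allreduce is scheduled. Under the standard latency-bandwidth (alpha-beta) cost model, and ignoring the time spent on the reduction arithmetic itself, a ring allreduce of an S-byte buffer across N ranks is a reduce-scatter followed by an allgather. Each phase consists of N−1 sequential steps, and in each step every rank sends an S/N-byte chunk to its neighbor:

$$
T_{\text{ring}}(N, S) \approx 2(N-1)\,\alpha + \frac{2(N-1)}{N}\cdot\frac{S}{B}
$$

Here α is the per-message latency and B is the per-link bandwidth. Each of the 2(N−1) steps completes only when its slowest transfer does, so congestion on a single link stretches the entire collective. That sensitivity is what turns completion time into a signal that another tenant on the same fabric could observe or perturb. This is a simplified model: NCCL also selects tree-based algorithms depending on message size and topology.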

From an IT triage perspective, this work highlights a growing class of risks in which scientific computing workloads, often treated as “benign” or “low-risk” in shared infrastructure, become vectors for side-channel leakage. Organizations running converged AI/HPC clusters must now consider whether their workload isolation policies account for timing channels in collective communication primitives. This is especially relevant for managed service providers that support research institutions or financial modeling firms co-hosting AI training and simulation jobs. For example, a provider overseeing a hybrid cloud-HPC deployment might enable NCCL debug logging while investigating anomalous latency patterns, or pin processes to fixed cores and NUMA memory nodes with numactl to reduce jitter during synchronization phases.
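Detecting anomalous latency patterns could start with a simple statistical check. The rough sketch below flags collective-operation latencies that sit more than K standard deviations above the mean of a run. It assumes a plain-text file with one latency per line (for example, microseconds per allreduce) produced by some benchmark or profiler. NCCL’s debug log does not produce this format directly, so an extraction step is assumed. The function name `flag_latency_outliers` and its default threshold are hypothetical.

```bash
# Hypothetical helper: flag_latency_outliers FILE [K]
# Reads one latency sample per line (for example, microseconds per allreduce)
# and prints "line_number value" for each sample above mean + K * stddev.
flag_latency_outliers() {
    file=$1
    k=${2:-3}    # default threshold: 3 standard deviations
    awk -v k="$k" '
        { x[NR] = $1; sum += $1; sumsq += $1 * $1 }
        END {
            if (NR == 0) exit
            mean = sum / NR
            var = sumsq / NR - mean * mean    # population variance
            if (var < 0) var = 0              # guard against rounding below zero
            sd = sqrt(var)
            for (i = 1; i <= NR; i++)
                if (x[i] > mean + k * sd)
                    print i, x[i]
        }
    ' "$file"
}

# Usage: ten synthetic samples with one slow iteration at the end.
samples=$(mktemp)
printf '%s\n' 100 101 99 100 102 98 100 101 99 500 > "$samples"
flag_latency_outliers "$samples" 2    # prints: 10 500
rm -f "$samples"
```

With only ten samples, a single extreme value inflates the standard deviation enough that a 3-sigma rule misses it; here the 3-sigma threshold lands just above 500. That is why the usage passes K=2. For short windows, a median-based rule would be more robust.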

```bash
# Example: enable verbose NCCL logging and pin CPUs/memory to reduce
# synchronization jitter while hunting for timing anomalies.
export NCCL_DEBUG=INFO                  # log NCCL initialization and topology details
export NCCL_DEBUG_SUBSYS=ALL            # include every NCCL subsystem in the log
export NCCL_SOCKET_IFNAME=^lo,docker0   # keep NCCL sockets off loopback and Docker bridge interfaces
# Bind the job to CPUs 0-31 and allocate memory only from NUMA nodes 0 and 1
numactl --physcpubind=0-31 --membind=0,1 ./run_cmb_simulation.sh --config=planck2018.yml
```

The toolkit’s development is funded by the Simons Foundation’s “Computational Astrophysics Initiative,” and its code is maintained in a public GitHub repository under NVIDIA’s stewardship. The repository is not open source in the permissive sense, but it accepts patches from external contributors who sign a contributor license agreement (CLA), a model increasingly common in NVIDIA’s AI enterprise stack. This hybrid approach balances IP protection with community scrutiny, a detail worth noting for CTOs evaluating similar “open-core” AI infrastructure tools. As Marcus Chen, a senior systems architect at a national lab, observed:

“We treat these NVIDIA-maintained repos like quasi-open source: we can audit the build scripts and dependency chains, but we can’t fork the core kernels. That means our security team focuses on the integration layer—how we call into NCCL, how we manage memory registration and where we validate output integrity.”
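One concrete form of the output-integrity validation Chen mentions is to record checksums of a run’s output files and then verify a rerun, or a copy pulled from shared storage, against them. The minimal sketch below uses GNU coreutils `sha256sum`. The helper names `record_outputs` and `verify_outputs` and the directory layout are hypothetical. A bitwise comparison like this passes across reruns only if the pipeline is deterministic, so it also tests the reduction-order concern raised by Dr. Vargas.

```bash
# Hypothetical helpers for checksum-based output integrity checks
# (requires GNU coreutils sha256sum).

# record_outputs DIR MANIFEST: write a SHA-256 line for every file under DIR.
record_outputs() {
    (cd "$1" && find . -type f -exec sha256sum {} +) > "$2"
}

# verify_outputs DIR MANIFEST: exit non-zero if any file changed or is missing;
# --quiet suppresses the per-file "OK" lines and reports only failures.
verify_outputs() {
    (cd "$1" && sha256sum --quiet -c) < "$2"
}

# Usage: record a run, verify it, then simulate a corrupted result file.
run_dir=$(mktemp -d)
manifest=$(mktemp)
printf 'placeholder power spectrum\n' > "$run_dir/spectrum.txt"
record_outputs "$run_dir" "$manifest"
verify_outputs "$run_dir" "$manifest" && echo "outputs match"
printf 'corrupted\n' >> "$run_dir/spectrum.txt"
verify_outputs "$run_dir" "$manifest" || echo "integrity check failed"
rm -rf "$run_dir" "$manifest"
```

Keeping the manifest outside the output directory prevents it from being hashed into itself, and storing it where the compute job cannot write makes tampering with outputs and manifest together harder.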

Semantically, this work touches on several enterprise-relevant concepts: deterministic computing, RDMA congestion control, TEE-adjacent side channels, and workload isolation in heterogeneous fabrics. The shift toward domain-specific accelerators for scientific HPC isn’t just about FLOPS; it’s about rethinking the trust boundary in shared silicon. Enterprises adopting similar stacks should treat these tools not as black-box science appliances but as performance-critical services that deserve the same rigor applied to authentication or API gateways: input validation, output monitoring, and side-channel mitigations.

The editorial kicker is clear: as AI accelerators become ubiquitous across disciplines, from cosmology to fraud detection, the line between “scientific tool” and “infrastructure component” blurs. What begins as a telescope into the early universe ends up as a stress test for your data center’s isolation guarantees. The real innovation isn’t in the simulation fidelity; it’s in the unintended consequences of pushing specialized hardware to its limits. Whether you’re securing a research cluster or hardening an AI inference pipeline, the first step is bringing in the right expertise, such as a vetted cybersecurity auditor who understands both the physics of the workload and the politics of the patch cycle.

*Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.*