Reproducibility and robustness of economics and political science research

A massive, unprecedented global collaboration involving over 400 researchers from 200 institutions has concluded that a significant portion of foundational economics and political science research fails to meet modern reproducibility standards. Released today, April 1, 2026, this landmark study exposes critical vulnerabilities in the data driving public policy, prompting an urgent call for independent data auditing firms and stricter compliance protocols across government agencies in Ottawa, Washington, and Brussels.

The implications are staggering. For decades, governments have built fiscal policies, trade agreements, and social safety nets on academic papers that, upon rigorous re-examination, simply do not hold up. This is not merely an academic squabble. It is a structural integrity issue for the global economy. When the math behind a tax incentive or a healthcare subsidy is flawed, the cost is paid by the taxpayer.

The Scale of the “Replication Crisis”

The sheer magnitude of this undertaking distinguishes it from previous efforts. The author list reads like a roll call of the world’s most prestigious economic departments: from the University of Oxford and the World Bank to the University of Ottawa and the Stockholm School of Economics. This was not a small team checking a few graphs; it was a stress test of the discipline itself.

Leading the charge were heavyweights like Edward Miguel of UC Berkeley and Anna Dreber of the Stockholm School of Economics, alongside a vast network of contributors from the Institute for Replication. Their findings suggest that the “publish or perish” culture has incentivized speed over accuracy, leaving a trail of fragile data in its wake.

“We are no longer just questioning individual papers; we are questioning the infrastructure of knowledge production itself. If a policy cannot be replicated, it cannot be trusted.”

This sentiment was echoed in a statement released this morning by Dr. Elena Rossi, a Senior Fellow at the European Centre for Policy Integrity in Brussels, who was not involved in the study but has reviewed the preliminary data. “We are seeing a shift where municipal and federal governments are realizing they lack the internal capacity to vet the research they consume,” Rossi noted. “This creates a massive market demand for third-party verification services.”

Geo-Local Impact: From Ottawa to Silicon Valley

While the study is global, the fallout will be felt acutely in policy hubs. In Ottawa, where the Institute for Replication is headquartered, local public policy consultants are already reporting a surge in inquiries from federal departments. The Canadian government, a major funder of social science research, faces immediate pressure to audit its own evidence-based decision-making frameworks.

Similarly, in Washington D.C., the ripple effects are touching the World Bank and the IMF. These institutions rely heavily on the very types of studies scrutinized in this report. The risk is that development loans and austerity measures were predicated on statistical anomalies rather than robust causal links.

The technology sector is not immune. In California, where the Berkeley Initiative for Transparency in the Social Sciences operates, tech giants utilizing behavioral economics for algorithm design are now facing scrutiny. If the underlying psychological models are not reproducible, the AI systems built upon them may harbor systemic biases.

The Cost of Fragile Data

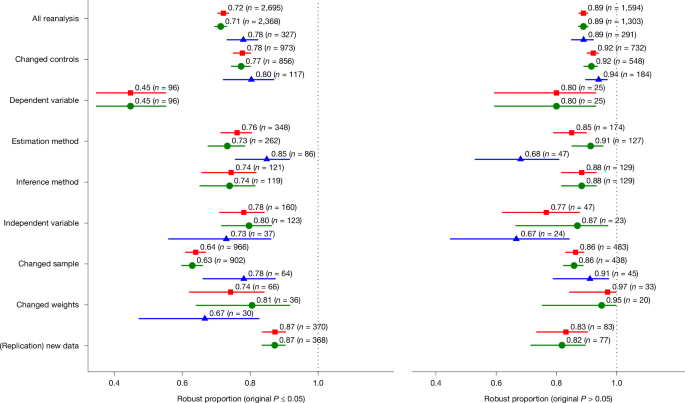

To understand the gravity of the situation, one must look at the divergence between traditional publication standards and the new reproducible benchmarks established by this consortium.

| Metric | Traditional Standard (Pre-2020) | New Reproducible Standard (2026) |
|---|---|---|
| Data Availability | Often restricted or “available upon request” | Mandatory open access repositories |
| Code Verification | Rarely peer-reviewed | Independent computational audit required |
| Sample Size | Often underpowered for complex claims | Pre-registered power analysis mandatory |
| Policy Impact | Immediate implementation based on p-values | Delayed implementation pending replication |

The table above highlights the paradigm shift. The old model allowed for “p-hacking”: rerunning or selectively reporting analyses until a statistically significant result appeared. The new model, championed by the hundreds of authors in this study, demands transparency. But transparency requires expertise. It requires professionals who can read code, understand econometrics, and navigate the legalities of data privacy.
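To see why p-hacking matters, consider a minimal sketch of the arithmetic behind it. It is an illustration only and is not drawn from the study itself. It assumes every test is run on an effect that does not actually exist, that the tests are independent, and that the conventional 0.05 significance threshold applies. The function names are hypothetical.

```python
import random


def familywise_false_positive_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `num_tests` independent tests of a
    nonexistent effect comes out 'significant' at threshold `alpha`."""
    return 1.0 - (1.0 - alpha) ** num_tests


def simulate_p_hacking(num_tests: int, alpha: float = 0.05,
                       trials: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo check of the formula above (illustrative assumption:
    under a true null, each p-value is uniform on [0, 1])."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # A "p-hacked" study reports success if any single test clears alpha.
        if any(rng.random() < alpha for _ in range(num_tests)):
            hits += 1
    return hits / trials


if __name__ == "__main__":
    for k in (1, 5, 20):
        analytic = familywise_false_positive_rate(k)
        simulated = simulate_p_hacking(k)
        print(f"{k:>2} tests: analytic {analytic:.3f}, simulated {simulated:.3f}")
```

Under these assumptions, a researcher who tries 20 specifications has roughly a 64% chance of finding at least one "significant" result by luck alone, compared with 5% for a single pre-specified test. Pre-registration is meant to close that gap.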

The Professional Response: A New Industry Emerges

This is where the private sector must intervene. The academic world has identified the problem, but the business world must provide the solution. We are witnessing the birth of a specialized industry focused on research integrity.

Universities and think tanks are increasingly turning to specialized legal counsel to navigate the intellectual property issues surrounding open data. Simultaneously, corporations are hiring forensic data analysts to ensure their internal R&D departments are not repeating the mistakes of the academic world.

For local governments, the path forward involves contracting with firms that specialize in evidence synthesis. It is no longer enough to hire a generalist lobbyist; municipalities need partners who can validate the studies supporting infrastructure projects or zoning changes.

Looking Ahead: The Integrity Dividend

The release of this study on April 1, 2026, should not be viewed as a scandal, but as a correction. It is a painful but necessary recalibration. The “Integrity Dividend”—the long-term economic gain from making decisions based on solid facts—will eventually outweigh the short-term cost of discarding flawed research.

However, the transition period will be volatile. Expect legislative hearings in the US and EU regarding research funding. Expect lawsuits where policies based on debunked studies are challenged. And expect a rush to secure qualified professionals who can separate signal from noise.

As we move forward, the question is not whether the research was wrong, but how quickly we can fix the system that produced it. For those navigating this complex new landscape, the difference between liability and leadership will often come down to one thing: who you trust to verify the truth. In an era of data uncertainty, verified compliance experts are no longer a luxury; they are the only shield against the cost of being wrong.