Breaking Down NASA’s Big Return to the Moon

Artemis 2: A Legacy Monolith vs. The Modern Space Stack

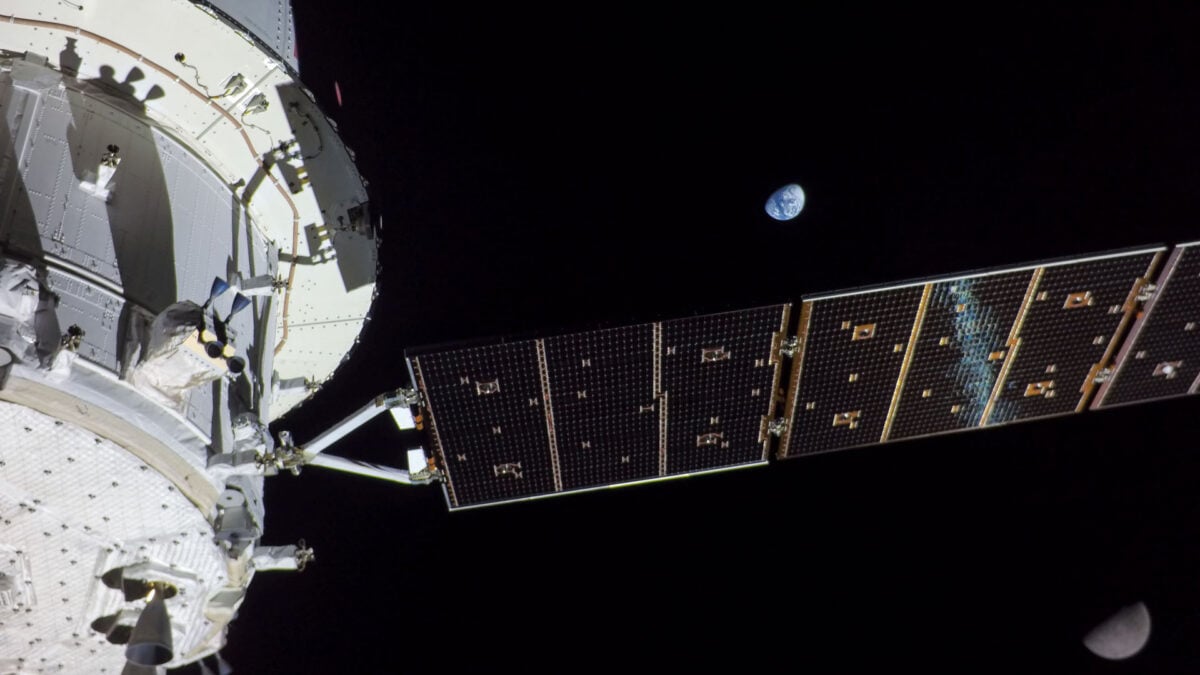

We are days away from the Artemis 2 launch window, a mission that represents less of a technological leap and more of a massive refactoring project for a fifty-year-old codebase. Whereas the PR machine hypes the “return to the Moon,” a systems architect looks at the Space Launch System (SLS) and sees a monolithic architecture running on deprecated libraries. With a per-launch cost hitting $4.2 billion and a reliance on RS-25 engines salvaged from the Space Shuttle era, NASA is betting the farm on hardware that predates the modern internet. This isn’t just about rocket science; it’s a case study in technical debt, latency management, and the risks of running critical infrastructure without a continuous integration pipeline.

The Tech TL;DR:

- Legacy Dependency: The SLS core stage relies on RS-25 engines from the 1980s, creating a high-maintenance bottleneck compared to modern methane-fueled alternatives.

- Comms Latency: A 50-minute communication blackout behind the Moon necessitates fully autonomous fault-tolerant systems, raising the stakes for onboard cybersecurity.

- Cost Efficiency: At $4.2 billion per flight, the SLS lacks the economic scalability of reusable architectures, mirroring the inefficiencies of on-premise data centers vs. Cloud-native solutions.

The fundamental issue with Artemis 2 is architectural rigidity. The SLS is a “waterfall” development project in an industry rapidly shifting to “agile” iterative testing. The rocket generates 8.8 million pounds of thrust, but that power comes from burning 733,000 gallons of super-cooled liquid hydrogen and oxygen—a propellant mixture notorious for being difficult to contain and manage. During the wet dress rehearsals, ground crews battled persistent hydrogen leaks, a classic symptom of integration failure in legacy systems. In the software world, this is equivalent to trying to patch a critical zero-day vulnerability in a kernel that hasn’t been updated since the Clinton administration. The friction here is palpable; maintaining cryogenic seals on 1970s-era hardware introduces variables that modern composite materials and methane fuels (used by competitors like SpaceX) have largely engineered out.

For enterprise CTOs watching this unfold, the lesson is clear: clinging to legacy infrastructure creates exponential maintenance costs. Just as NASA struggles with the supply chain for Shuttle-era parts, corporations holding onto on-premise mainframes face similar scalability walls. If your organization is grappling with the technical debt of migrating from monolithic legacy systems to modular cloud architectures, engaging with specialized legacy system migration specialists is no longer optional—it’s a survival metric. The Artemis program proves that while you can keep an old system running, the cost of uptime eventually outweighs the cost of replacement.

Beyond the propulsion stack, the mission profile introduces a severe latency challenge. As Orion loops behind the lunar far side, the spacecraft will endure a communication blackout lasting up to 50 minutes. During this window, the vehicle operates in a “Zero Trust” environment where no external commands can be verified. The onboard avionics must handle trajectory corrections and fault detection autonomously. This mirrors the edge computing challenges faced by IoT deployments in remote locations where cellular backhaul is unreliable. The Orion service module, equipped with 33 engines for attitude control, essentially functions as a distributed system that must self-heal without human intervention.

This autonomy requirement highlights a critical cybersecurity vector. If the onboard flight software contains unpatched vulnerabilities or logic bombs, there is no “remote wipe” or emergency patch deployment possible during the blackout. The attack surface is the code itself. Security teams analyzing deep-space missions should glance at how NASA implements hardware-rooted trust and immutable logs. For companies deploying autonomous fleets or edge devices, the stakes are similar. You need cybersecurity auditors who specialize in embedded systems and offline threat modeling to ensure your edge nodes don’t become liabilities when the connection drops.

To visualize the telemetry checks required for such a mission, consider a simplified health-check routine that an engineer might run against the Orion avionics bus before committing to the trans-lunar injection. While the actual telemetry is proprietary, the logic follows standard API verification patterns:

# Simulated Orion Avionics Health Check via CLI # Endpoint: /api/v1/telemetry/orion-core curl -X Acquire "https://telemetry.nasa.gov/api/v1/status/orion-core" -H "Authorization: Bearer $MISSION_TOKEN" -H "Content-Type: application/json" --data '{ "check_type": "pre_injection", "modules": ["guidance_nav", "thermal_control", "life_support"], "thresholds": { "radiation_tolerance": 30, "temp_max_f": 3000 } }' # Expected Response: 200 OK # { # "status": "GREEN", # "latency_ms": 45, # "redundancy_active": true, # "warnings": ["Hydrogen_Leak_Sensor_3: Drift_Detected"] # } The financial architecture of Artemis 2 is equally concerning from an ROI perspective. The Office of Inspector General reported a cost of $4.2 billion per flight. Contrast this with the emerging reusable launch vehicles that aim to drive marginal costs down to the thousands. In tech terms, SLS is a custom-built, single-tenant server farm, while the new wave of commercial spaceflight is serverless computing. The inefficiency is staggering. When you factor in the 19,420-day gap since humans last left low Earth orbit, it becomes evident that the bottleneck wasn’t just physics; it was procurement and contract models that discouraged innovation.

The Stack Comparison: SLS Block 1 vs. Next-Gen Heavy Lift

To understand why the industry is pivoting, we have to look at the specs. The following breakdown compares the current Artemis 2 launch vehicle against the emerging standards in heavy-lift architecture. Note the disparity in reusability and propellant efficiency.

| Architecture Spec | SLS Block 1 (Artemis 2) | Next-Gen Heavy Lift (e.g., Starship) |

|---|---|---|

| Propellant Type | Liquid Hydrogen / Liquid Oxygen | Liquid Methane / Liquid Oxygen |

| Reusability | Expendable (Single-Use) | Fully Reusable |

| Thrust at Liftoff | 8.8 Million lbs | ~16+ Million lbs (Target) |

| Est. Cost Per Launch | $4.2 Billion | <$10 Million (Target) |

| Dev Cycle | Waterfall / Decades | Agile / Iterative |

The thermal constraints during reentry further illustrate the engineering tightrope. Orion’s heat shield must withstand 3,000 degrees Fahrenheit while decelerating from Mach 32 to zero in 20 minutes. Lockheed Martin, the prime contractor, asserts the shield can handle up to 5,000 degrees, but the charring observed during the uncrewed Artemis I mission suggests marginal safety buffers. In software engineering, this is akin to running a database at 99% CPU utilization with no headroom for spike traffic. It works until it doesn’t. The reliance on a single-point-of-failure thermal protection system without active cooling redundancy is a risk profile that would be rejected in a standard SOC 2 compliance audit for critical infrastructure.

Artemis 2 is a triumph of persistence, but a cautionary tale for system design. It proves that you can force legacy hardware to perform miracles, but the cost—in both capital and risk—is unsustainable for long-term scaling. As we move toward the Artemis 4 landing mission, the industry must decide whether to continue patching the monolith or to refactor the entire launch stack. For IT leaders, the parallel is undeniable: innovation requires the courage to deprecate the old, even if it’s the system that got you to the moon in the first place. If your infrastructure strategy lacks a clear path to modernization, now is the time to consult with IT strategy consultants to avoid becoming the SLS of your own industry.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.