YouTube Auto-Dubbing: My Bizarre AI Voice Experience & Disappearance

YouTube’s Phantom Dubbing: A Post-Mortem on Generative Audio Rollouts

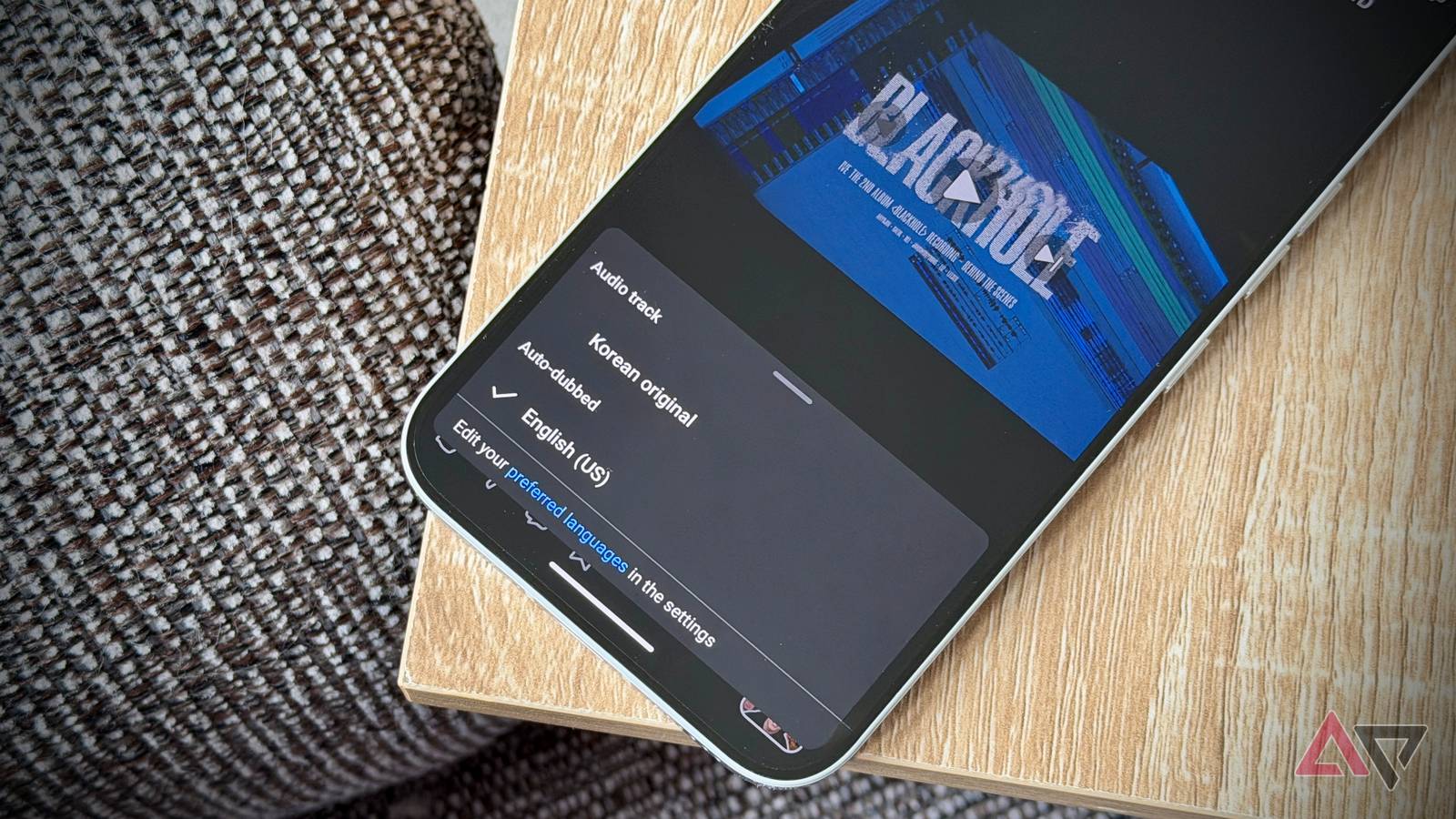

The feature lived for exactly one production cycle. Users reported a surreal experience in which Korean audio streams were replaced by generative English clones in real time, only for the capability to vanish from the client-side build without a changelog entry. This wasn't a bug; it was a canary deployment that hit a regulatory wall. As of March 2026, YouTube's Auto-dubbing is a critical case study in the friction between generative AI latency constraints and biometric privacy compliance.

The Tech TL;DR:

- Deployment Status: Feature-flagged off globally following A/B testing, likely because of voice biometric consent violations.

- Underlying Stack: Powered by Gemini Ultra audio modules; introduces 400-800 ms of inference latency on edge devices.

- Security Implication: Unauthorized voice cloning creates liability for platforms and warrants a cybersecurity audit focused on AI compliance.

When the Auto-dubbing toggle appeared in the settings menu, it bypassed the standard content creator approval workflow. This suggests a server-side override intended to test engagement metrics before securing content owner consent. From an engineering perspective, the latency introduced by real-time voice synthesis is non-trivial. Processing audio streams through a large language model (LLM) pipeline requires significant NPU utilization. On mobile SoCs, this thermal load competes with video decoding, often resulting in frame drops or audio desynchronization.
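The rollout pattern described above, where a server-side flag exposes a feature to a slice of users, is commonly implemented with deterministic hash bucketing. The sketch below is hypothetical: `canary_bucket`, `show_toggle`, and the `auto_dubbing` flag name are illustrative and are not YouTube's actual rollout system. It contrasts a consent-aware gate with an engagement-first override that never consults the creator.

```python
import hashlib

BUCKETS = 10_000  # rollout resolution: one bucket is 0.01% of users


def canary_bucket(user_id: str, feature: str) -> int:
    """Deterministically map a user to a bucket in [0, BUCKETS) for one feature."""
    # Salting with the feature name keeps cohorts independent across experiments.
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def show_toggle(user_id: str, rollout_percent: float, creator_opted_in: bool,
                require_creator_consent: bool = True) -> bool:
    """Decide whether the client renders the (hypothetical) auto-dubbing toggle."""
    threshold = rollout_percent / 100 * BUCKETS
    in_cohort = canary_bucket(user_id, "auto_dubbing") < threshold
    if require_creator_consent:
        # Consent-aware gate: cohort membership alone is not enough.
        return in_cohort and creator_opted_in
    # Engagement-first override: the creator's approval is never consulted.
    return in_cohort
```

Because the bucket depends only on the user ID and feature name, the same user sees the same variant on every request, which is what makes engagement metrics comparable across cohorts.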

The Biometric Liability Vector

Voice is a biometric identifier, protected under regulations like BIPA in Illinois and the emerging EU AI Act. By synthesizing a creator's voice without explicit opt-in, the platform risks having the output classified as unauthorized biometric data processing. The uncanny valley effect reported by users, where the tone was preserved but the timbre was artificial, indicates the model was prioritizing semantic translation over voice fidelity to avoid deepfake detection triggers.
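One way to make "explicit opt-in" enforceable is to gate every synthesis call on a stored consent record that defaults to denial. This is a minimal sketch, assuming a hypothetical `VoiceConsent` record scoped by target language and revocable at any time; the field names are illustrative and do not reflect any platform's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class VoiceConsent:
    """Hypothetical record of a creator's explicit opt-in to voice synthesis."""
    creator_id: str
    granted_at: datetime              # use timezone-aware datetimes (e.g. timezone.utc)
    target_languages: FrozenSet[str]  # languages the creator approved
    revoked_at: Optional[datetime] = None


def may_synthesize(consent: Optional[VoiceConsent], creator_id: str,
                   language: str, now: Optional[datetime] = None) -> bool:
    """Allow synthesis only under an explicit, current, in-scope opt-in."""
    now = now or datetime.now(timezone.utc)
    if consent is None or consent.creator_id != creator_id:
        return False  # default deny: a missing record is never treated as consent
    if consent.granted_at > now:
        return False  # consent not yet in effect
    if consent.revoked_at is not None and consent.revoked_at <= now:
        return False  # consent withdrawn
    return language in consent.target_languages
```

The design choice is that the absence of a record is a hard "no"; an engagement test that flips the toggle server-side would fail this check for every creator who never signed up.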

This creates a vulnerability surface for bad actors. If the API endpoint for dubbing is exposed, it could be abused to generate consent-free voice clones for social engineering attacks. Organizations managing enterprise communication channels cannot afford this ambiguity. Security teams would be well advised to engage cybersecurity auditors specifically trained in AI governance to scan for unauthorized generative features in their supply chain.
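A standard mitigation for an exposed generation endpoint is to refuse requests that carry no consent proof and to rate-limit each caller. The sketch below uses a per-key token bucket; `DubbingGateway`, the `consent_token` parameter, and the HTTP status codes it returns are hypothetical, and it only checks that a token is present, not that it is genuine.

```python
import time
from typing import Callable, Dict, Optional


class TokenBucket:
    """Token bucket: refills `rate` tokens per second, holds at most `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class DubbingGateway:
    """Hypothetical front door for a voice-dubbing endpoint."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets: Dict[str, TokenBucket] = {}

    def handle(self, api_key: str, consent_token: Optional[str]) -> int:
        if not consent_token:
            return 403  # no proof of creator consent: refuse before spending compute
        if api_key not in self.buckets:
            self.buckets[api_key] = TokenBucket(self.rate, self.capacity, self.clock)
        return 200 if self.buckets[api_key].allow() else 429
```

Injecting the clock keeps the limiter testable; in production a real gateway would also verify the consent token cryptographically rather than just checking that one was sent.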

“The intersection of artificial intelligence and cybersecurity is no longer theoretical. We are seeing federal regulators treat unauthorized voice synthesis as a data breach event. Companies need a national reference provider network to validate their AI deployment standards.”

The rapid disappearance of the feature aligns with hiring trends at major tech firms. Job postings for roles like Director of Security, Microsoft AI, indicate a shift in which security leadership is embedded directly into AI product teams, not just infrastructure. This structural change suggests that future rollouts will face stricter internal red-teaming before reaching production.

Latency and Inference Costs

Real-time dubbing is not merely a translation task; it is a multi-stage pipeline involving speech-to-text, neural machine translation, and text-to-speech (TTS) with emotion preservation. Each stage adds latency. In a standard CDN distribution model, adding an inference layer at the edge increases the cost per stream. If the feature had been enabled by default, Google's compute bill would have spiked sharply.
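The latency and cost argument can be made concrete with a simple sequential model. This is a sketch under stated assumptions: each audio chunk is fully buffered and then passes through every stage in order, network transfer and overlap between streaming stages are ignored, and the `Stage` names and any numbers you feed in are placeholders rather than measured figures.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # processing time this stage adds per audio chunk


def end_to_end_latency_ms(stages: List[Stage], chunk_ms: float) -> float:
    """Simplified model: buffer one chunk, then run it through every stage in turn."""
    return chunk_ms + sum(stage.latency_ms for stage in stages)


def fits_budget(stages: List[Stage], chunk_ms: float, budget_ms: float) -> bool:
    """True if the dubbed audio stays within the allowed playback delay."""
    return end_to_end_latency_ms(stages, chunk_ms) <= budget_ms


def inference_cost(streams: int, dubbed_minutes_per_stream: float,
                   cost_per_minute: float) -> float:
    """Compute cost grows linearly with the total number of dubbed minutes."""
    return streams * dubbed_minutes_per_stream * cost_per_minute
```

With placeholder values, `end_to_end_latency_ms([Stage("stt", 150), Stage("nmt", 100), Stage("tts", 250)], chunk_ms=200)` gives 700 ms. The cost function also shows why a default-on rollout hurts: it multiplies the number of dubbed minutes, not the per-minute rate.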

Developers integrating similar features must account for these overheads. Below is a cURL example demonstrating how to inspect a video's language metadata through the YouTube Data API, a common first step when debugging unexpected client-side behavior:

```shell
curl -s -H "Authorization: Bearer YOUR_ACCESS_TOKEN" -H "Accept: application/json" \
  "https://www.googleapis.com/youtube/v3/videos?id=VIDEO_ID&part=snippet&fields=items(snippet(defaultLanguage,defaultAudioLanguage,localized))" | jq '.items[0].snippet'
```

If `defaultAudioLanguage` or the `localized` fields report language codes the creator never configured, that may point to a server-side override. Enterprise IT departments monitoring bandwidth usage should look for spikes in outbound audio processing requests that correlate with video playback sessions.
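The bandwidth heuristic above amounts to anomaly detection on the ratio of audio-processing requests to playback sessions. A minimal sketch, assuming per-interval counts are already exported from a proxy or flow log (the inputs and the `flag_ratio_spikes` name are hypothetical), flags intervals whose ratio sits more than three standard deviations above a trailing baseline.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def flag_ratio_spikes(audio_requests: Sequence[int], playback_sessions: Sequence[int],
                      window: int = 12, z_threshold: float = 3.0) -> List[int]:
    """Return interval indices where audio requests per playback session spike."""
    # Normalising by playback volume keeps ordinary traffic growth from tripping alerts.
    ratios = [a / p if p else 0.0 for a, p in zip(audio_requests, playback_sessions)]
    flagged = []
    for i in range(window, len(ratios)):
        baseline = ratios[i - window:i]
        mu, sigma = mean(baseline), pstdev(baseline)
        if sigma == 0:
            # Flat baseline: any increase at all is treated as anomalous.
            if ratios[i] > mu:
                flagged.append(i)
        elif (ratios[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged
```

A z-score over a trailing window is deliberately simple; seasonal traffic would call for a baseline that compares like-for-like time slots.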

The Compliance Bottleneck

The sudden retraction of the feature highlights the immaturity of current AI governance frameworks. While the technology functions, the legal framework lags. Cybersecurity consulting firms that specialize in AI ethics and compliance are seeing increased demand. The risk is not just technical failure; it is reputational damage and regulatory fines.

For the end user, the experience was described as "dystopian." This user sentiment is a leading indicator of adoption failure. Technology that erodes trust in media authenticity faces an uphill battle. The "Babel fish" analogy used by early testers underscores the disorientation caused by breaking the link between visual cues and audio identity. In security terms, this is a breakdown of integrity.

Future Trajectory and Mitigation

Expect future iterations to require explicit cryptographic signing from content creators. A watermarking standard such as C2PA will likely become mandatory for any generative audio overlay. Until then, the feature remains in legal limbo. For organizations relying on YouTube for training or communications, the recommendation is to disable auto-translations and rely on human-verified subtitle tracks.
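The core idea behind creator-signed overlays is that the dubbed track ships with a manifest binding the audio hash to the creator's approval, so any edit to either breaks the signature. The sketch below illustrates only that idea: it swaps in a shared-secret HMAC for brevity, whereas C2PA specifies public-key signatures backed by X.509 certificates over a structured manifest, and `build_manifest` and its fields are hypothetical rather than the C2PA schema.

```python
import hashlib
import hmac
import json


def build_manifest(creator_id: str, video_id: str, dubbed_audio: bytes, language: str) -> dict:
    """Hypothetical manifest binding a dubbed track to the creator who approved it."""
    return {
        "creator_id": creator_id,
        "video_id": video_id,
        "language": language,
        "ai_generated": True,
        "audio_sha256": hashlib.sha256(dubbed_audio).hexdigest(),
    }


def _canonical(manifest: dict) -> bytes:
    # Sorted keys and fixed separators make the byte encoding deterministic.
    return json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_manifest(manifest: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the canonical manifest bytes."""
    return hmac.new(key, _canonical(manifest), hashlib.sha256).hexdigest()


def verify_manifest(manifest: dict, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_manifest(manifest, key), tag)
```

Canonical encoding matters here: without sorted keys, two semantically identical manifests could serialize differently and fail verification.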

The industry is moving toward a model where AI capabilities are gated behind compliance checks. The existence of entities like the AI Cyber Authority signals that a third-party verification layer is emerging. Companies deploying generative features without this oversight are operating in a high-risk zone. The phantom dubbing feature was not just a glitch; it was a warning shot regarding the cost of unchecked AI integration.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.