
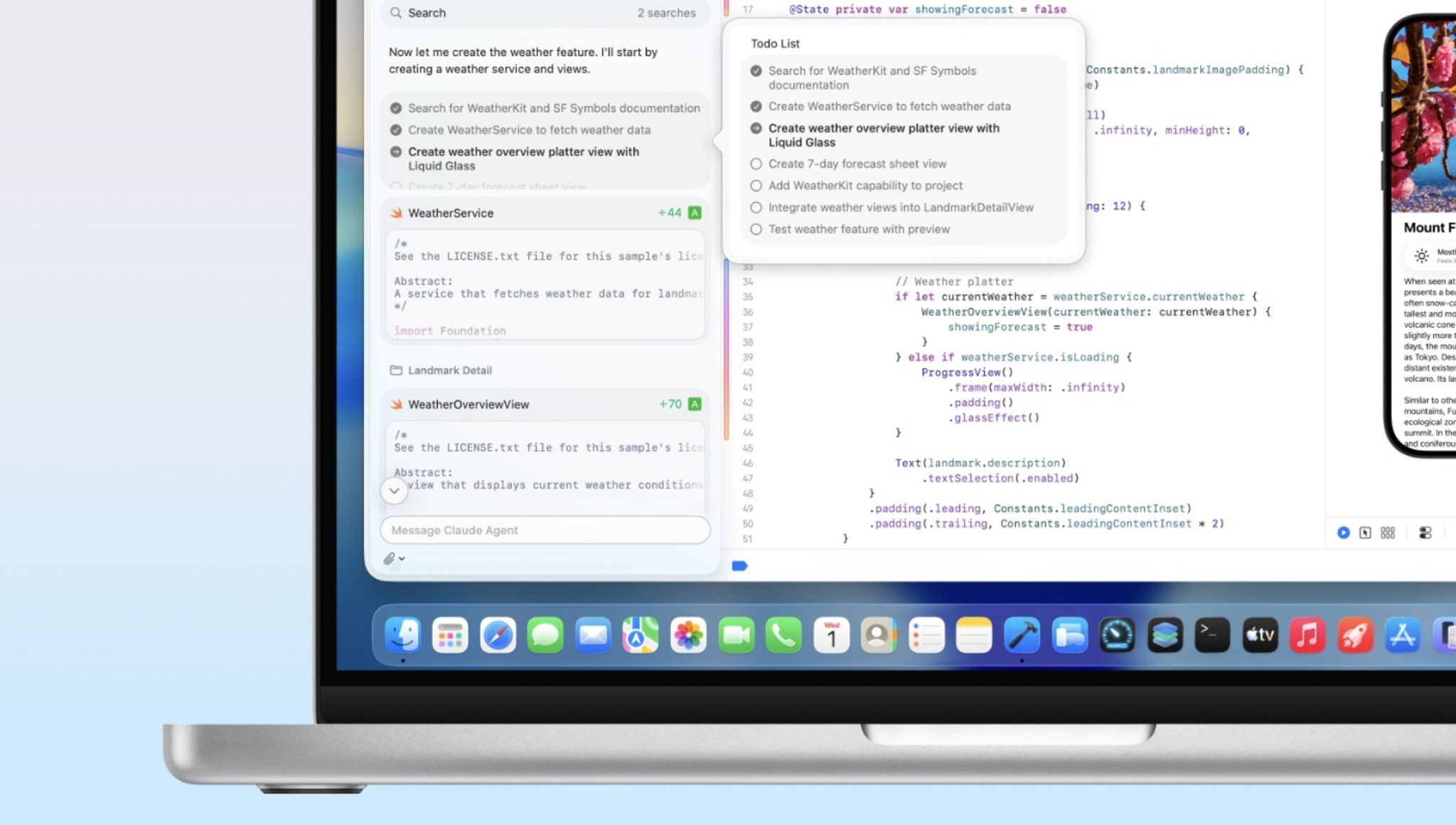

Apple’s Agentic Xcode: Efficiency Gain or Supply Chain Nightmare?

Apple’s February 2026 Developer update pushes agentic coding directly into Xcode. While the keynote slides promise velocity, the underlying architecture introduces significant supply chain vulnerabilities. For enterprise CTOs, this isn’t a feature update; it’s a risk assessment trigger.

The Tech TL;DR:

- Agentic workflows automate dependency management but increase the blast radius of compromised packages.

- Local NPU processing mitigates data exfiltration risks but lacks the context window of cloud-based LLMs.

- Adoption requires immediate engagement with cybersecurity audit services to validate AI-generated code integrity.

The Agentic Attack Surface

The core promise of agentic coding is autonomous task completion. The system doesn’t just suggest lines; it executes refactors, manages packages, and writes tests. This shifts the developer role from writer to reviewer. The latency benefit is clear: removing context switching can save hours per sprint. However, the security model relies on the assumption that zero-trust controls are in place, and that assumption rarely holds in practice. When an AI agent has both write access to the repository and network access to package registries, the room for lateral movement grows sharply, because a single compromised package can reach the codebase and the build environment at once.
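One way to narrow the network half of that exposure is to let the agent fetch packages only from an explicit list of hosts. The sketch below is illustrative rather than anything Xcode provides: the function name, the allowlist, and `packages.example.com` are assumptions made for the example.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real one would come from team policy.
ALLOWED_REGISTRY_HOSTS = frozenset({"github.com", "packages.example.com"})


def is_allowed_registry(url: str, allowed_hosts=ALLOWED_REGISTRY_HOSTS) -> bool:
    """Allow only HTTPS URLs whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    # Exact hostname matching rejects look-alikes such as
    # "github.com.evil.example", which a prefix check would let through.
    return parts.scheme == "https" and parts.hostname in allowed_hosts


if __name__ == "__main__":
    for candidate in (
        "https://github.com/apple/swift-nio.git",
        "https://github.com.evil.example/pkg.git",
        "http://github.com/apple/swift-nio.git",
    ):
        print(candidate, is_allowed_registry(candidate))
```

The check is deliberately strict: anything that is not HTTPS, or whose hostname is not an exact match, is refused and left for a human to approve.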

Traditional static analysis tools struggle with AI-generated logic patterns. The code compiles, passes unit tests, and yet may contain subtle logic bombs or inefficient recursion loops that only manifest under production load. We are seeing a shift from syntax errors to semantic vulnerabilities. The cybersecurity audit services market is already pivoting to address this, distinguishing formal assurance from general IT consulting. Organizations cannot rely on standard linting; they need behavioral analysis of the agent itself.

Supply Chain & Dependency Risks

Agentic systems often resolve dependencies automatically. In a hurry to meet sprint goals, an agent might pull in a library with a known CVE because it satisfies the immediate functional requirement without checking the long-term maintenance status of the package. This accelerates technical debt accumulation. The risk isn’t just malicious code; it’s abandoned middleware that becomes an unmaintained vector for future exploits.
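A lightweight guard against silent dependency additions is to compare the Swift Package Manager lockfile before and after an agent run and send anything new to a human. The sketch below assumes the `Package.resolved` layout with a top-level `pins` array whose entries carry an `identity` field (format versions 2 and later); the helper name and the sample data, including the `quick-json-helper` package, are hypothetical.

```python
import json


def new_pins(before: dict, after: dict) -> list[str]:
    """Return identities pinned in `after` that were absent from `before`."""
    known = {pin["identity"] for pin in before.get("pins", [])}
    added = {pin["identity"] for pin in after.get("pins", [])} - known
    return sorted(added)


# Illustrative lockfile snapshots; the versions and the second package are made up.
BEFORE = json.loads("""
{"version": 2, "pins": [
  {"identity": "swift-log", "kind": "remoteSourceControl",
   "location": "https://github.com/apple/swift-log.git",
   "state": {"version": "1.5.4"}}
]}
""")

AFTER = json.loads("""
{"version": 2, "pins": [
  {"identity": "swift-log", "kind": "remoteSourceControl",
   "location": "https://github.com/apple/swift-log.git",
   "state": {"version": "1.5.4"}},
  {"identity": "quick-json-helper", "kind": "remoteSourceControl",
   "location": "https://example.com/quick-json-helper.git",
   "state": {"version": "0.1.0"}}
]}
""")

if __name__ == "__main__":
    for identity in new_pins(BEFORE, AFTER):
        print(f"needs human review: {identity}")
```

This only detects that a package was added. Checking it for known CVEs or maintenance status would still need a vulnerability database or a manual review.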

“The introduction of autonomous coding agents requires a fundamental shift in how we define ‘review.’ We are no longer just auditing code; we are auditing the decision-making logic of the AI itself.” — Senior Security Researcher, OWASP Foundation

This sentiment echoes the hiring trends we see across major tech firms. Recent job specifications for roles like Director of Security | Microsoft AI explicitly demand expertise in securing AI pipelines, not just traditional infrastructure. Visa is similarly seeking a Sr. Director, AI Security, signaling that payment processors view AI integration as a critical threat vector. If financial giants are fortifying their AI security leadership, enterprise developers should treat agentic coding tools as high-risk components.

The Compliance Gap

Compliance frameworks like SOC 2 and ISO 27001 were written for human-led development lifecycles. They assume a human approves a commit. Agentic workflows blur this line. Who is responsible when the AI introduces a regression that leaks PII? The cybersecurity consulting sector is still defining the criteria for provider selection in this space. Organizations need partners who understand the difference between AI risk assessment and standard vulnerability scanning.
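One way to keep a human approval on record is to require a reviewer trailer on every commit an agent produces. The sketch below checks commit messages for that pairing. `Reviewed-by:` is a common Git trailer convention, while the `Agent-Generated: true` marker is a hypothetical convention invented for this example, not something Xcode or Git emits.

```python
import re

# Hypothetical marker a team might require agents to add to their commits.
AGENT_TRAILER = re.compile(
    r"^Agent-Generated:\s*true\s*$", re.IGNORECASE | re.MULTILINE
)
# A reviewer trailer of the form "Reviewed-by: Name <address@host>".
REVIEW_TRAILER = re.compile(
    r"^Reviewed-by:\s*\S.*<[^<>\s]+@[^<>\s]+>\s*$", re.MULTILINE
)


def lacks_human_approval(commit_message: str) -> bool:
    """True when a commit is marked agent-generated but names no reviewer."""
    is_agent = AGENT_TRAILER.search(commit_message) is not None
    has_review = REVIEW_TRAILER.search(commit_message) is not None
    return is_agent and not has_review


if __name__ == "__main__":
    unreviewed = "Refactor cache layer\n\nAgent-Generated: true\n"
    reviewed = unreviewed + "Reviewed-by: Dana Reviewer <dana@example.com>\n"
    print(lacks_human_approval(unreviewed))
    print(lacks_human_approval(reviewed))
```

A check like this could run in CI before merge. It records that someone signed off, not that the review was thorough, so it supports an audit trail rather than replacing one.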

Deployment realities dictate that you cannot simply enable these features in production environments without guardrails. The latency introduced by security scanning of AI-generated code must be factored into the CI/CD pipeline. If the scan takes longer than the time the code generation saves, the net efficiency gain is zero or negative. Data residency laws further complicate cloud-based agentic tools: sending proprietary logic to a public model endpoint may violate NDAs or GDPR constraints. Local inference on Apple Silicon helps, but the model weights themselves become a security asset that needs protection.
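The break-even point for scanning is simple arithmetic, and writing it down makes the trade-off easy to revisit as scan times change. The figures below are made up for illustration, and the function name is an assumption.

```python
def net_minutes_saved(
    saved_per_change: float, scan_per_change: float, changes: int
) -> float:
    """Minutes gained per sprint after subtracting security-scan time."""
    return (saved_per_change - scan_per_change) * changes


if __name__ == "__main__":
    # Hypothetical sprint: 40 agent-authored changes, 12 minutes saved on each.
    for scan_minutes in (4, 12, 15):
        net = net_minutes_saved(12, scan_minutes, 40)
        print(f"scan={scan_minutes} min -> net {net} min per sprint")
```

With these numbers, the gain disappears once a scan costs as much as the change saved (12 minutes) and turns into a loss beyond that.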

Implementation Reality

Developers need to enforce strict environment variable handling when working with agentic tools. Never allow the agent to infer secrets from context. The following CLI command demonstrates how to seal environment variables before invoking a build process that might trigger agent behavior:

```bash
# Load the secret at runtime and keep this session out of the shell history file
unset HISTFILE
export SECRET_KEY="$(op read "op://vault/secret/credential")"
xcodebuild -scheme MyAgenticApp -destination 'platform=iOS Simulator,name=iPhone 16'
```

This keeps the credential out of the shell history file and off disk, so it stays ephemeral even if the agentic process logs stdout. Bear in mind that an exported variable is still visible to the build and to every process it spawns. It’s a basic hygiene step, but one that automated systems often overlook in favor of convenience. Provider guides for cybersecurity risk assessment and management services emphasize matching qualified providers to specific professional sectors. That matters here because internal teams often lack the bandwidth to vet every AI suggestion.
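Exported variables are inherited by child processes, so a complementary step is to strip secrets from the environment of any process that does not need them. The sketch below is a minimal illustration using Python's standard `subprocess` module. The helper names are hypothetical, and the child command is a stand-in for whatever build or agent step would actually run.

```python
import os
import subprocess
import sys


def scrubbed_env(names_to_hide, base=None) -> dict:
    """Copy the environment, dropping the named variables."""
    env = dict(os.environ if base is None else base)
    for name in names_to_hide:
        env.pop(name, None)
    return env


def run_without_secrets(cmd, names_to_hide) -> subprocess.CompletedProcess:
    """Run `cmd` in a child process that cannot see the named variables."""
    return subprocess.run(
        cmd,
        env=scrubbed_env(names_to_hide),
        capture_output=True,
        text=True,
        check=True,
    )


if __name__ == "__main__":
    os.environ["SECRET_KEY"] = "dummy-value-for-demo"
    # Stand-in child process that reports whether it can see the secret.
    probe = [sys.executable, "-c",
             "import os; print(os.environ.get('SECRET_KEY'))"]
    result = run_without_secrets(probe, ["SECRET_KEY"])
    print("child sees:", result.stdout.strip())
```

The parent keeps its own copy of the variable; only the child's view is narrowed. A process that needs the secret can still be launched with an explicit, separately constructed environment.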

The Talent Bottleneck

The industry is facing a shortage of professionals who understand both software architecture and adversarial AI. The job postings from Microsoft and Visa are not anomalies; they are leading indicators. Companies are realizing that securing AI requires a different skillset than securing networks. You need engineers who understand prompt injection, model inversion, and training data poisoning. Until this talent pool matures, outsourcing security validation to specialized managed service providers is the only viable path for most enterprises.

Apple’s move legitimizes agentic coding, but it doesn’t solve the governance problem. The technology ships, but the safeguards lag. Developers should treat this update as a beta feature for non-critical paths until the ecosystem matures. The trajectory is clear: AI will write more code, but humans must verify the intent. The cost of verification is the new bottleneck.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.