US Paves Way For Private Assets To Be Included In 401(k) Retirement Plans

The 401(k) Private Asset Rule: A Legacy System Nightmare in the Making

The Department of Labor’s new proposed rule to open 401(k) plans to private equity and crypto isn’t just a policy shift; it’s a massive distributed-systems integration challenge waiting to explode. While the PR machine spins this as “democratization,” for the architects maintaining the ledger infrastructure of American retirement, it is a nightmare of latency, valuation oracles, and API incompatibility. We are effectively asking 1980s-era mainframe record-keepers to handshake with illiquid, often opaque private-credit funds and always-on blockchain networks without dropping a single packet of fiduciary data.

The Tech TL;DR:

- Latency & Liquidity Mismatch: Integrating near-instant (T+0) crypto settlement with T+1/T+2 legacy payroll and record-keeping cycles introduces significant synchronization risks and the potential for “stale price” exploits.

- The Oracle Problem: Valuation of private assets lacks standardized API endpoints, forcing trustees to rely on manual inputs that break automated compliance checks.

- Safe Harbor Liability: The new “safe harbor” clause protects trustees only if they use specific, auditable data streams, which effectively pushes every asset provider toward SOC 2 Type II compliance.
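To make the “stale price” risk concrete, the sketch below works through the arithmetic with hypothetical numbers: a fund whose reported NAV is still 100.0 per unit while its assets are really worth 90.0. The function name and all inputs are illustrative, not drawn from the rule or from any record-keeper.

```python
def stale_price_transfer(units_total: float, units_redeemed: float,
                         stale_nav: float, true_nav: float) -> dict:
    """Value moved from remaining holders to redeemers paid at a stale NAV."""
    fund_value = units_total * true_nav        # what the assets are really worth
    paid_out = units_redeemed * stale_nav      # redeemers are paid at the stale price
    remaining_units = units_total - units_redeemed
    remaining_value = fund_value - paid_out    # what is left for everyone else
    return {
        "paid_out": paid_out,
        "remaining_nav": remaining_value / remaining_units,
        "transfer": units_redeemed * (stale_nav - true_nav),
    }

# Hypothetical scenario: 10% of units redeem before the mark catches up.
result = stale_price_transfer(units_total=1_000_000, units_redeemed=100_000,
                              stale_nav=100.0, true_nav=90.0)
```

With these inputs, redeemers collect 1,000,000 more than their units are worth, and the remaining holders' NAV falls to about 88.89 instead of 90.0. That gap is what a fast-settling participant can arbitrage against a slower valuation cycle.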

The core friction here isn’t regulatory; it’s architectural. The current 401(k) ecosystem runs on a rigid, batch-processing architecture designed for liquid equities and bonds. Introducing private assets—which by definition lack a public market price and often have lock-up periods—requires a fundamental rewrite of the valuation engine. We aren’t just adding a new column to a SQL database; we are introducing non-deterministic variables into a system that demands absolute determinism.

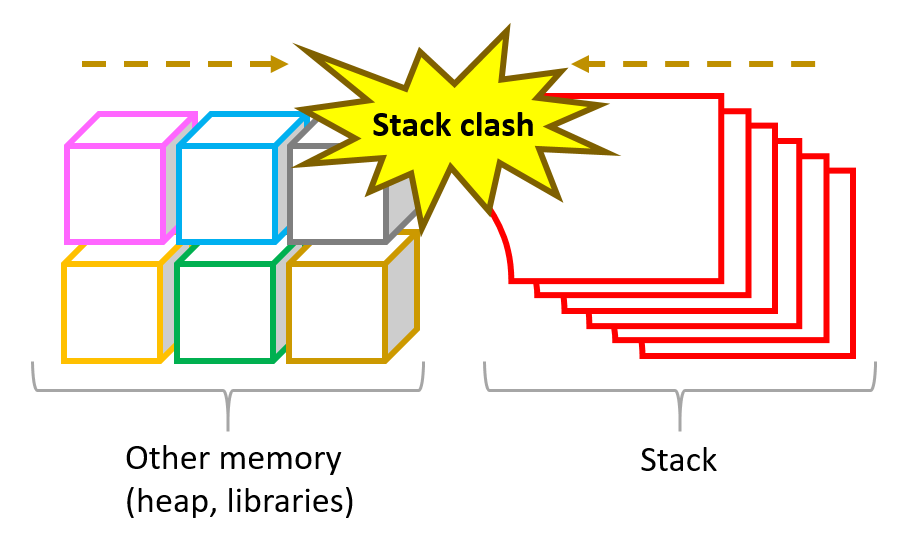

The Stack Clash: Legacy Record Keepers vs. Private Asset Nodes

To understand the deployment reality, we have to look at the underlying tech stacks. Most major 401(k) providers (Fidelity, Vanguard, etc.) run on hardened, monolithic architectures optimized for high-volume, low-variance transactions. Private assets, particularly crypto and private credit funds, operate on decentralized or semi-decentralized stacks with variable throughput.

When a trustee attempts to ingest a private asset valuation, they are hitting an API wall. Public equities have standardized FIX protocol feeds. Private assets? They often rely on PDF reports or unstructured data dumps. This creates a massive bottleneck for fintech integration specialists who will be tasked with building the middleware to normalize this data.
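A minimal sketch of the kind of middleware this implies, assuming the fund administrator's report has already been converted from PDF to text and states NAV in one fixed phrase. The report wording, the NavRecord type, and normalize_nav_report are hypothetical; the point is that the parser fails loudly on ambiguity rather than guessing.

```python
import re
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

@dataclass(frozen=True)
class NavRecord:
    fund_id: str
    as_of: date
    nav_per_unit: Decimal

# Hypothetical report phrasing; real administrator reports vary widely.
NAV_PATTERN = re.compile(
    r"Net Asset Value per Unit as of (\d{4}-\d{2}-\d{2}):\s*\$?([\d,]+\.\d+)"
)

def normalize_nav_report(fund_id: str, text: str) -> NavRecord:
    """Extract exactly one NAV figure from report text, or raise ValueError."""
    matches = NAV_PATTERN.findall(text)
    if len(matches) != 1:
        raise ValueError(f"{fund_id}: expected one NAV line, found {len(matches)}")
    as_of_str, nav_str = matches[0]
    nav = Decimal(nav_str.replace(",", ""))  # drop thousands separators
    if nav <= 0:
        raise ValueError(f"{fund_id}: non-positive NAV {nav}")
    return NavRecord(fund_id, date.fromisoformat(as_of_str), nav)

record = normalize_nav_report(
    "PE-FUND-8821",
    "Quarterly Statement\nNet Asset Value per Unit as of 2026-03-31: $1,012.45\n",
)
```

Using Decimal rather than float keeps the per-unit NAV exact, which matters once the figure flows into participant balances.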

Comparative Architecture: Liquid vs. Illiquid Asset Integration

| Feature | Traditional Equity Stack | Private Asset/Crypto Stack | Integration Risk |
|---|---|---|---|
| Valuation Source | Public Exchange (NYSE/NASDAQ) | Internal Fund Admin / Oracle | High (Single Point of Failure) |
| Settlement Time | T+1 / T+2 | Variable (Days to Months) | Critical (Cash Drag) |
| Data Format | Standardized FIX/JSON | Proprietary / Unstructured | High (Parsing Errors) |
| Audit Trail | Centralized Ledger | Distributed / Opaque | Medium (Compliance Gap) |
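The “Cash Drag” entry in the table can be quantified with a simple-interest approximation: contributions waiting to settle earn the cash yield instead of the asset's expected return. The function and every input below (8% expected return, 4% cash yield, 2 versus 90 settlement days) are illustrative assumptions.

```python
def cash_drag(amount: float, settlement_days: int,
              expected_annual_return: float, cash_annual_yield: float) -> float:
    """Approximate return forgone while money waits uninvested.

    Simple-interest approximation: amount * (return - cash yield) * days / 365.
    """
    return amount * (expected_annual_return - cash_annual_yield) * settlement_days / 365

# Hypothetical inputs: $1,000,000 of contributions, 8% expected return, 4% cash yield.
fast = cash_drag(1_000_000, 2, 0.08, 0.04)   # liquid-equity style settlement
slow = cash_drag(1_000_000, 90, 0.08, 0.04)  # private-fund style subscription window
```

On $1,000,000, the 2-day case forgoes roughly $219 and the 90-day case roughly $9,863; the cost scales linearly with the settlement window.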

The DOL’s guidance explicitly mentions that trustees must “objectively, thoroughly, and analytically consider” factors like valuation and complexity. In technical terms, the ingestion pipeline must be observable. You cannot have a “black box” asset in a retirement plan. This is where the industry will fracture. Expect a rush toward cybersecurity auditors and compliance firms capable of certifying the data integrity of these private funds. If a private equity firm cannot expose a real-time, read-only API for its Net Asset Value (NAV), it will likely be locked out of the 401(k) market entirely.
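One way to make an ingestion pipeline observable is to log every payload as it arrives and chain each entry to the previous one with a SHA-256 digest, so a later edit to any stored valuation is detectable. This is a minimal sketch under that assumption, not a mechanism the DOL prescribes; the AuditedIngestion class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous digest" for the first entry

class AuditedIngestion:
    """Hypothetical append-only ingestion log with hash-chained entries."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def ingest(self, source: str, payload: dict) -> str:
        prev = self.entries[-1]["digest"] if self.entries else GENESIS
        # Canonical JSON so the same record always hashes the same way.
        body = json.dumps(
            {
                "received_at": datetime.now(timezone.utc).isoformat(),
                "source": source,
                "payload": payload,
            },
            sort_keys=True,
            separators=(",", ":"),
        )
        digest = hashlib.sha256((prev + body).encode("utf-8")).hexdigest()
        self.entries.append({"prev": prev, "body": body, "digest": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; an edited body or reordered entry fails.

        Truncating entries from the end is NOT detected by this check alone.
        """
        prev = GENESIS
        for entry in self.entries:
            expected = hashlib.sha256((prev + entry["body"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["digest"] != expected:
                return False
            prev = entry["digest"]
        return True

log = AuditedIngestion()
log.ingest("fund-admin-api", {"asset_id": "PE-FUND-8821", "nav": "1012.45"})
log.ingest("fund-admin-api", {"asset_id": "PE-FUND-8821", "nav": "1009.80"})
```

A hash chain only proves internal consistency; anyone who can rewrite the whole log can recompute it, so in practice the latest digest would also be anchored somewhere the record-keeper does not control.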

The Implementation Mandate: Validating Asset Liquidity

For developers building the bridges between these worlds, the priority is verifying liquidity before execution. You cannot simply push a trade to a private fund API without checking the lock-up status. Below is a conceptual cURL request showing how a compliant system might query an asset’s liquidity status before allowing a 401(k) allocation; the endpoint, headers, and parameters are illustrative. Note the audit_hash field in the response: it stands in for the kind of “safe harbor” proof the DOL is demanding.

```bash
# -G sends the --data-urlencode pairs as a query string; a GET request should not carry a JSON body.
curl -G "https://api.private-asset-provider.com/v1/asset/liquidity" \
  -H "Authorization: Bearer $TRUSTEE_API_KEY" \
  -H "Accept: application/json" \
  -H "X-Compliance-Standard: DOL-2026-RULE" \
  --data-urlencode "asset_id=PE-FUND-8821" \
  --data-urlencode "check_type=redemption_window" \
  --data-urlencode "required_lockup=false" \
  --fail --silent --show-error

# Expected Response: 200 OK
# {
#   "status": "LIQUID",
#   "next_valuation": "2026-04-15T09:00:00Z",
#   "audit_hash": "0x7f83b... (Immutable Proof)"
# }
```

This level of programmatic verification is non-negotiable. Manual spreadsheets are now a liability. The “safe harbor” protection mentioned in the Reuters report is essentially a legal wrapper around technical due diligence. If your system ingests bad data because you didn’t validate the API response, you lose that protection.
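Validating the response can be as simple as refusing to proceed unless every expected field is present and consistent. The sketch below assumes the field names from the conceptual response above (status, next_valuation, audit_hash) and the hypothetical rule that a next_valuation already in the past signals stale data; neither is a published schema.

```python
import json
from datetime import datetime, timezone

REQUIRED_FIELDS = {"status", "next_valuation", "audit_hash"}

def validate_liquidity_response(raw: str, now: datetime) -> dict:
    """Return the parsed response only if it permits an allocation; raise otherwise.

    `now` must be timezone-aware, e.g. datetime.now(timezone.utc).
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("response is not a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if data["status"] != "LIQUID":
        raise ValueError(f"allocation blocked, status={data['status']}")
    if not str(data["audit_hash"]).startswith("0x"):
        raise ValueError("audit_hash missing or malformed; no safe-harbor evidence")
    # fromisoformat before Python 3.11 rejects a trailing "Z", so normalize it first.
    next_valuation = datetime.fromisoformat(data["next_valuation"].replace("Z", "+00:00"))
    if next_valuation <= now:
        raise ValueError("next_valuation is already past; treat data as stale")
    return data
```

Every failure path raises instead of returning a default, so a bad response stops the allocation rather than slipping through as an empty or partial record.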

The Oracle Problem and Valuation Latency

The most dangerous vector here is the “Oracle Problem.” In blockchain terms, this is the difficulty of getting off-chain data (like the value of a private real estate fund) onto an on-chain or digital ledger reliably. In the 401(k) context, if the valuation data is delayed or manipulated, the entire plan’s NAV is corrupted.
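A mitigation common in blockchain price oracles is to aggregate several independent valuations, discard stale ones, take the median, and refuse to publish when sources disagree too much. The sketch below applies that idea to private-asset marks; the 95-day freshness window, two-source minimum, and 5% spread limit are illustrative assumptions, and Quote and aggregate_valuation are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from statistics import median

@dataclass(frozen=True)
class Quote:
    source: str      # e.g. fund administrator, third-party appraiser
    value: float     # NAV per unit reported by that source
    as_of: datetime  # valuation date (timezone-aware)

def aggregate_valuation(quotes: list[Quote], now: datetime,
                        max_age: timedelta = timedelta(days=95),
                        min_sources: int = 2,
                        max_spread: float = 0.05) -> float:
    """Median of fresh quotes; raise instead of publishing a doubtful number."""
    fresh = [q for q in quotes if now - q.as_of <= max_age]
    if len(fresh) < min_sources:
        raise ValueError(f"only {len(fresh)} fresh source(s); need {min_sources}")
    values = [q.value for q in fresh]
    mid = median(values)
    spread = (max(values) - min(values)) / mid  # relative disagreement between sources
    if spread > max_spread:
        raise ValueError(f"sources disagree by {spread:.1%}; escalate to manual review")
    return mid
```

Refusing to publish is deliberate: a missing NAV halts the batch visibly, whereas a wrong NAV silently corrupts every participant balance that depends on it.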

Skeptics in the industry, including several CTOs I spoke with off the record, warn that private credit funds are already showing strain. “We are seeing withdrawal waves in business development companies,” noted one senior infrastructure engineer at a major wealth management firm. “If you pipe that volatility directly into a retail retirement account without a circuit breaker in the code, you risk a cascade failure where users endeavor to exit illiquid positions simultaneously.”
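The “circuit breaker” the engineer describes can be sketched as a redemption gate: if withdrawal requests in a period exceed a fixed share of fund NAV, every request is filled pro rata instead of first-come, first-served, so early movers cannot drain the liquid sleeve. The 5% cap and the gate_redemptions function are illustrative assumptions, not terms from the DOL rule.

```python
def gate_redemptions(requests: dict[str, float], fund_nav: float,
                     max_fraction: float = 0.05) -> dict[str, float]:
    """Pay requests in full if the period total fits under the cap, else pro rata."""
    cap = fund_nav * max_fraction
    total = sum(requests.values())
    if total <= cap:
        return dict(requests)
    ratio = cap / total  # breaker tripped: every holder receives the same fraction
    return {holder: amount * ratio for holder, amount in requests.items()}

# Hypothetical period: $100,000 requested against a $1,000,000 fund (cap $50,000).
filled = gate_redemptions({"a": 40_000.0, "b": 60_000.0}, fund_nav=1_000_000.0)
```

Pro-rata fills are a design choice: they treat all participants equally within the period, at the cost of leaving everyone partially invested until the next window.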

“The DOL rule assumes data transparency that simply doesn’t exist in the private market stack. We are building bridges to islands that refuse to publish their maps.” — Senior Solutions Architect, Major Custodial Bank (Anonymous)

This is where the software development agencies specializing in financial middleware will make their fortune. The market needs a standardized protocol for private asset valuation—something akin to the ERC-20 standard but for compliance and liquidity reporting. Until then, every integration is a custom, fragile build.
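What such a standard might require can be sketched as a typed interface. The ValuationReport fields and the ValuationReporter protocol below are hypothetical, meant only to show the minimum a record-keeper would need: a dated NAV, the methodology behind it, the next redemption window, and a pointer to an external attestation.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional, Protocol, runtime_checkable

@dataclass(frozen=True)
class ValuationReport:
    asset_id: str
    nav_per_unit: float
    as_of: datetime
    methodology: str                            # e.g. "third-party appraisal"
    next_redemption_window: Optional[datetime]  # None: no scheduled window
    attestation_id: str                         # reference to an external audit report

@runtime_checkable
class ValuationReporter(Protocol):
    """Hypothetical interface each private-asset provider would implement."""

    def latest_valuation(self, asset_id: str) -> ValuationReport: ...

    def redemption_open(self, asset_id: str, at: datetime) -> bool: ...

class StaticReporter:
    """Toy provider serving one fixed report; treats redemption as open from the window start onward."""

    def __init__(self, report: ValuationReport) -> None:
        self._report = report

    def latest_valuation(self, asset_id: str) -> ValuationReport:
        if asset_id != self._report.asset_id:
            raise KeyError(asset_id)
        return self._report

    def redemption_open(self, asset_id: str, at: datetime) -> bool:
        window = self.latest_valuation(asset_id).next_redemption_window
        return window is not None and at >= window

reporter = StaticReporter(ValuationReport(
    asset_id="PE-FUND-8821",
    nav_per_unit=1012.45,
    as_of=datetime(2026, 3, 31, tzinfo=timezone.utc),
    methodology="third-party appraisal",
    next_redemption_window=datetime(2026, 4, 15, 9, tzinfo=timezone.utc),
    attestation_id="hypothetical-attestation-001",
))
```

Because the protocol is structural, a provider only has to match the method signatures; an isinstance check against a runtime_checkable protocol confirms the methods exist, not that the data behind them is accurate.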

Final Deployment Verdict

The Trump administration’s push to “democratize access” is technically feasible but operationally hazardous. We are moving from a walled garden of liquid assets to an open range of volatile, opaque instruments. For the CTOs and trustees reading this: do not treat this as a feature update. Treat it as a security patch for your entire fiduciary infrastructure. The “safe harbor” is only as strong as the API endpoints you trust.

If you are a plan administrator, your immediate sprint should not be marketing new investment options; it should be stress-testing your data ingestion pipelines against illiquid asset scenarios. The firms that survive this transition won’t be the ones with the best marketing; they will be the ones with the most robust cybersecurity and data governance frameworks in place.
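A first-pass stress test can be as simple as asking whether a fund's liquid sleeve covers a redemption wave, and what forced sales of illiquid holdings would cost if it does not. The sketch below assumes illiquid assets can only be sold at a fixed percentage discount; the scenario values and the liquidity_stress function are hypothetical.

```python
def liquidity_stress(liquid: float, illiquid: float,
                     redemption_rate: float, haircut: float) -> dict:
    """Cover redemptions from liquid assets first, then from discounted illiquid sales."""
    demand = (liquid + illiquid) * redemption_rate
    shortfall = max(0.0, demand - liquid)
    # Raising `shortfall` in cash at a discount of `haircut` requires selling
    # shortfall / (1 - haircut) of book value; the difference is the realized loss.
    book_sold = shortfall / (1 - haircut)
    if book_sold > illiquid:
        raise ValueError("redemptions exceed what the fund can raise; gate required")
    return {
        "demand": demand,
        "coverage": liquid / demand if demand else float("inf"),
        "shortfall": shortfall,
        "forced_sale_loss": book_sold - shortfall,
    }

# Hypothetical fund in $ millions: 15% liquid sleeve, 85% private assets.
scenarios = {"mild": 0.05, "wave": 0.20}
results = {name: liquidity_stress(15.0, 85.0, rate, haircut=0.20)
           for name, rate in scenarios.items()}
```

In the 20% wave, the liquid sleeve covers 75% of demand and the fund realizes a loss of about $1.25 million selling roughly $6.25 million of private holdings at a 20% discount.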

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.