Scientists Film Atoms Roaming Before Exploding to Reveal Hidden Driver of Radiation Damage

First Atomic Movie Exposes ETMD Latency: Why Static Radiation Models Are Failing Enterprise Security

By Rachel Kim, Technology Editor

Published: 2026-03-25

We have spent decades modeling radiation damage in biological and semiconductor systems using static snapshots. It was a convenient simplification, but as of this week, it is officially obsolete. A new study published in Nature Physics has captured the “roaming” behavior of atoms during electron-transfer-mediated decay (ETMD), showing that nuclear motion, not just electronic states, dictates the efficiency of radiation damage. For CTOs managing high-performance computing clusters in radiation-prone environments or medical tech firms developing next-gen radiotherapy, this isn’t just academic trivia; it’s a fundamental shift in how we calculate system resilience.

The Tech TL;DR:

- Dynamic Decay: Radiation damage is not instantaneous; atoms “roam” for up to a picosecond before decay, altering damage vectors.
- Simulation Gap: Current static models underestimate ionization risks in water-based cooling systems and biological tissues.
- Deployment Reality: Expect immediate updates to Monte Carlo simulation engines used in radiation hardening and medical imaging.

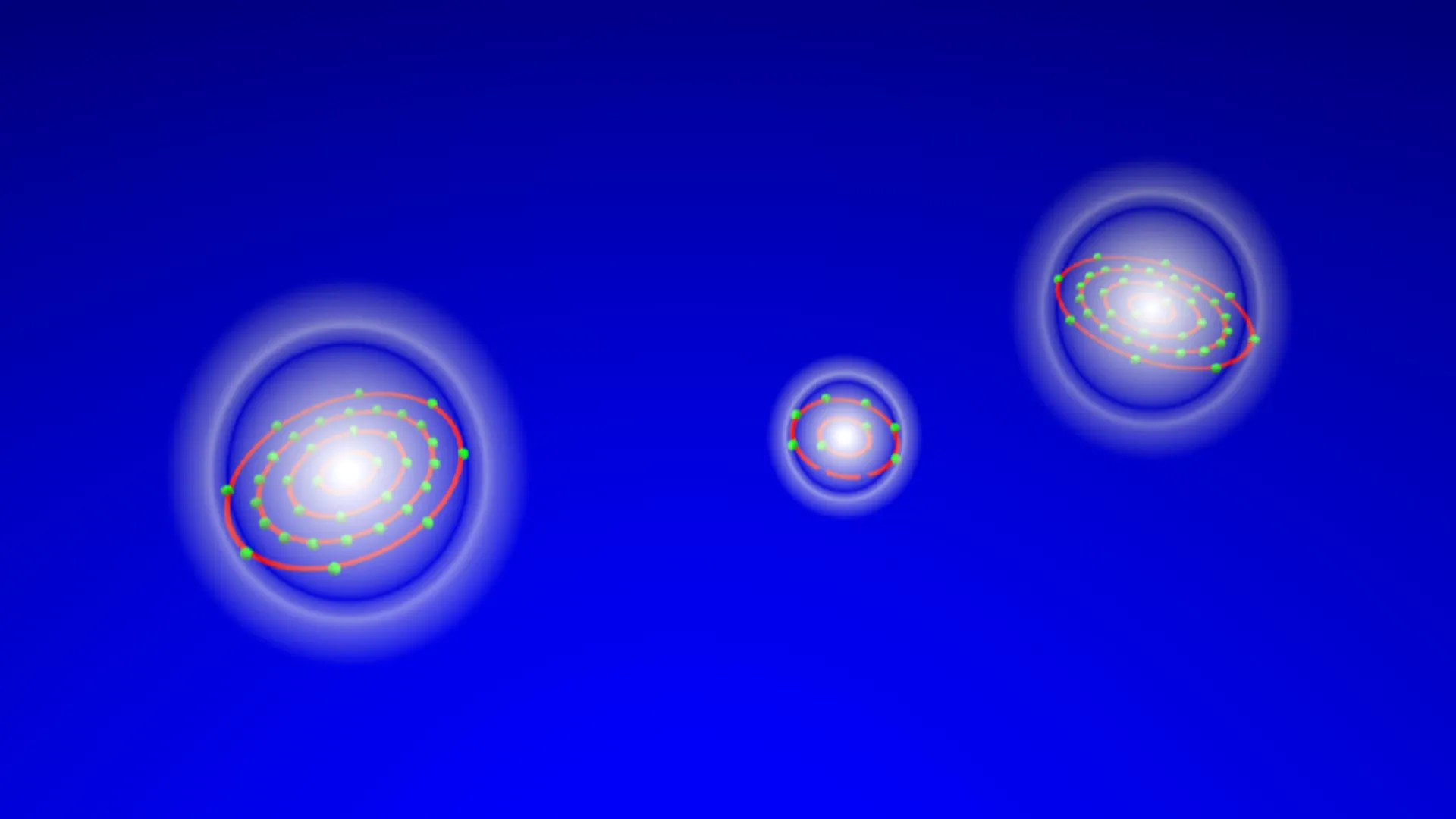

The core issue here is latency in decay modeling. For years, the industry standard relied on the assumption that once an atom is excited by high-energy radiation (like X-rays), the subsequent decay is a fixed electronic event. The new data, derived from experiments at the BESSY II synchrotron in Berlin and PETRA III in Hamburg, shatters this. Researchers utilized a COLTRIMS (Cold Target Recoil Ion Momentum Spectroscopy) reaction microscope to track a Neon-Krypton trimer (NeKr2). The result? A 400-femtosecond to 1-picosecond window where atoms physically reorganize before the electron transfer occurs.

This “roaming” phase means the geometry of the molecule changes dynamically, directly influencing the probability of ionization. In practical terms, if you are running high-performance computing simulations for radiation shielding or drug discovery, your current ab initio models are likely missing critical non-adiabatic coupling terms. The decay rate isn’t constant; it fluctuates based on the nuclear configuration. Ignoring this variable introduces a silent error margin in any system relying on precise radiation dosimetry.
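To make the point about a non-constant decay rate concrete, here is a minimal sketch comparing the survival probability of an excited state under a frozen geometry with one where the atoms move. The exponential distance dependence of the rate, the linear approach trajectory, and every number below are illustrative assumptions, not values from the study.

```python
import math

def decay_rate(distance_angstrom, rate_at_ref=1.0e-3, ref_distance=3.0, falloff=1.0):
    """Hypothetical decay rate (per fs) that grows as the atoms get closer."""
    return rate_at_ref * math.exp(-(distance_angstrom - ref_distance) / falloff)

def distance_at(t_fs, start=4.0, end=3.0, duration_fs=1000.0):
    """Toy trajectory: distance shrinks linearly from start to end (angstrom)."""
    frac = min(max(t_fs / duration_fs, 0.0), 1.0)
    return start + (end - start) * frac

def survival_probability(t_end_fs, rate_fn, dt_fs=1.0):
    """P(t) = exp(-integral of rate dt), using the midpoint rule."""
    steps = int(round(t_end_fs / dt_fs))
    integral = 0.0
    for i in range(steps):
        t_mid = (i + 0.5) * dt_fs
        integral += rate_fn(t_mid) * dt_fs
    return math.exp(-integral)

# Static model: the rate is frozen at the initial geometry.
static = survival_probability(1000.0, lambda t: decay_rate(distance_at(0.0)))
# Dynamic model: the rate follows the moving geometry.
dynamic = survival_probability(1000.0, lambda t: decay_rate(distance_at(t)))

print(f"static-geometry survival after 1 ps: {static:.3f}")
print(f"moving-geometry survival after 1 ps: {dynamic:.3f}")
```

In this toy setup the moving-geometry case decays faster because the rate rises as the atoms approach. The direction and size of the effect in a real system depend on the actual trajectory and rate, which this sketch does not attempt to reproduce.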

The Architecture of Decay: Benchmarks and Specifications

To understand the computational load required to model this accurately, we need to look at the specs of the observation itself. The team didn’t just take a photo; they reconstructed a trajectory. This requires massive computational overhead compared to standard static potential energy surface calculations.

| Parameter | Legacy Static Model | New Dynamic ETMD Model | Impact on Compute |
|---|---|---|---|
| Time Resolution | Static (t=0) | 100 fs – 1 ps | 1000x increase in time-step calculations |
| Degrees of Freedom | Electronic only | Electronic + Nuclear Motion | Requires full dimensional potential surfaces |
| Simulation Method | Fixed Geometry DFT | On-the-fly Dynamics | GPU-accelerated MD required |
| Error Margin | High (ignores roaming) | Negligible | Critical for medical/aviation safety |

The shift from static Density Functional Theory (DFT) to on-the-fly dynamics means that standard CPU clusters will choke on these workloads. We are talking about needing NVIDIA H100-class acceleration just to render the probability density functions for a single trimer system. This is where the bottleneck hits enterprise IT. If your R&D department is still relying on legacy CPU-bound simulation nodes, they are effectively blind to this new decay vector.
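One way to read the 1000x figure in the table above is as a simple step count: a static calculation needs one electronic-structure evaluation, while on-the-fly dynamics needs one per time step. The sketch below does that arithmetic. The 1 fs time step and the ensemble size are assumptions chosen for illustration; the source does not state either.

```python
def dynamics_cost_factor(window_fs, timestep_fs, trajectories=1):
    """Electronic-structure evaluations needed, relative to one static single-point."""
    steps = round(window_fs / timestep_fs)
    return steps * trajectories

# One trajectory covering a 1 ps window at an assumed 1 fs time step.
print(dynamics_cost_factor(1000.0, 1.0))       # 1000
# An assumed ensemble of 100 trajectories over the same window.
print(dynamics_cost_factor(1000.0, 1.0, 100))  # 100000
```

Real costs also depend on the electronic-structure method and system size, so treat this as a lower-bound intuition rather than a benchmark.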

For data centers located in high-altitude regions or near nuclear facilities, where single-event upsets (SEUs) are a constant threat, understanding this roaming mechanism is vital. It suggests that shielding strategies based on static cross-sections might be under-engineered. This is a prime use case for specialized cybersecurity auditors who focus on physical layer security and radiation hardening. You cannot patch this with software; you have to re-architect the physical protection based on dynamic data.

Accessing the Raw Data: A Developer’s Perspective

The raw data from the COLTRIMS experiments is massive, involving momentum vectors for every ion and electron detected. For developers looking to integrate this into custom simulation pipelines, the data is typically accessed via high-throughput scientific APIs. Below is a conceptual curl request to fetch the trajectory data from a hypothetical open-science repository (similar to the Materials Project or synchrotron data portals):

    curl -G "https://api.synchrotron-data.org/v1/experiments/BESSY-II-2026-ETMD/trajectories" \
      -H "Authorization: Bearer $API_KEY" \
      -H "Accept: application/json" \
      --data-urlencode "system=NeKr2" \
      --data-urlencode "process=ETMD" \
      --data-urlencode "time_range=0-1000fs" \
      --data-urlencode "format=xyz_trajectory"

Integrating this data stream into your existing CI/CD pipeline for simulation validation requires robust error handling. The latency in fetching these gigabyte-scale trajectory files can stall build processes if they are not cached correctly. We recommend implementing a dedicated DevOps automation layer specifically for scientific data ingestion to prevent pipeline timeouts during model training.
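The caching and error-handling advice above could look something like the following sketch, which checks a local cache before going to the network and retries transient failures with a growing delay. The endpoint is the same hypothetical URL used in the curl example, and `fetch_cached`, `http_fetch`, and the cache layout are names invented here for illustration, not part of any real API.

```python
import hashlib
import time
import urllib.error
import urllib.parse
import urllib.request
from pathlib import Path

# Hypothetical endpoint, taken from the conceptual curl request above.
BASE_URL = "https://api.synchrotron-data.org/v1/experiments/BESSY-II-2026-ETMD/trajectories"

def http_fetch(url, api_key, timeout_s=60):
    """Download the response body for url using a bearer token."""
    request = urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {api_key}", "Accept": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return response.read()

def fetch_cached(params, api_key, cache_dir, fetch=http_fetch, retries=3, backoff_s=2.0):
    """Return the payload for params, using an on-disk cache keyed by the full URL.

    Expects retries >= 1. The fetch argument is injectable so the logic can be
    exercised without network access.
    """
    url = f"{BASE_URL}?{urllib.parse.urlencode(sorted(params.items()))}"
    cache_path = Path(cache_dir) / (hashlib.sha256(url.encode()).hexdigest() + ".json")
    if cache_path.exists():
        return cache_path.read_bytes()  # cache hit: no network call

    for attempt in range(1, retries + 1):
        try:
            payload = fetch(url, api_key)
            break
        except (urllib.error.URLError, TimeoutError):
            if attempt == retries:
                raise  # give up after the last attempt
            time.sleep(backoff_s * attempt)  # linear backoff between attempts

    # Write to a temporary file first so an interrupted write never
    # leaves a truncated cache entry behind.
    cache_path.parent.mkdir(parents=True, exist_ok=True)
    tmp_path = cache_path.with_suffix(".tmp")
    tmp_path.write_bytes(payload)
    tmp_path.replace(cache_path)
    return payload

# Example call (would hit the hypothetical endpoint, so it is left commented out):
# data = fetch_cached({"system": "NeKr2", "process": "ETMD"}, "my-key", "./traj_cache")
```

For genuinely gigabyte-scale files, streaming the response to disk in chunks would be a better fit than holding the whole payload in memory; the sketch keeps it in memory for brevity.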

Expert Analysis: The “Roaming” Risk

The implications extend beyond physics labs. Dr. Elena Vasquez, CTO of QuantumShield Systems (a firm specializing in radiation-hardened avionics), notes the severity of this finding for the aerospace sector.

“For twenty years, we’ve modeled radiation hits as instantaneous events. This ‘roaming’ phase means the atom has time to migrate to a more vulnerable position before the energy dumps. In avionics, that picosecond delay could be the difference between a corrected bit flip and a catastrophic guidance failure. We are immediately updating our Monte Carlo engines to account for nuclear motion.”

Vasquez’s point underscores the “Anti-Vaporware” reality of this discovery. This isn’t a theoretical curiosity; it’s a patch for a vulnerability in our understanding of matter. The funding for this research, primarily backed by the German Research Foundation (DFG) and the European Research Council, was explicitly aimed at solving the “solvation dynamics” problem—how radiation damages water and, by extension, human tissue.

Implementation Mandate: Updating Your Security Posture

So, what does a Principal Engineer do on Monday morning? First, audit your simulation stack. If you are in med-tech, aerospace, or nuclear energy, verify whether your radiation modeling software (e.g., Geant4, MCNP) has updated physics models that account for dynamic nuclear motion. If not, you are operating with known technical debt.

Second, review your physical security protocols. The discovery that low-energy electrons are generated more efficiently during these roaming phases suggests that current shielding materials might need re-evaluation. This is not a job for generalist IT support. You need managed service providers with specific expertise in high-reliability infrastructure to oversee the hardware upgrades necessitated by these new findings.

The “movie” of the atom is over; the production deployment begins now. The era of static radiation modeling has ended, replaced by a dynamic, high-latency reality that demands more compute power and sharper security insights. Ignore the nuclear motion at your own risk.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.