Rebellions, SK Telecom, and Arm Partner for Sovereign AI Inference Infrastructure

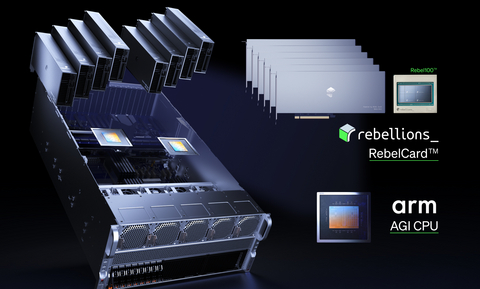

Rebellions, SK Telecom, and Arm have formed a strategic alliance to deploy sovereign AI inference infrastructure, integrating the Arm AGI CPU with Rebellions’ RebelCard™ accelerators. This collaboration targets the Asia-Pacific telecom and public sectors, aiming to reduce GPU dependency and lower energy overhead for large-scale AI data centers.

The fiscal reality of 2026 is a war of attrition over power efficiency and silicon sovereignty. For years, the hyperscale market has been dominated by Nvidia’s H100s and B200s, but the “GPU tax” (exorbitant CapEx and unsustainable power draw) has reached a breaking point for national governments and telcos. When a sovereign state decides to build its own AI stack, it isn’t just buying chips; it is mitigating a geopolitical risk. The problem is that most enterprises lack the in-house architectural expertise to move off CUDA-based ecosystems without destabilizing their operations.

This creates a massive opening for enterprise infrastructure consultants who can navigate the transition from general-purpose GPUs to specialized NPU (Neural Processing Unit) arrays. The shift toward “Sovereign AI” is less about innovation and more about fiscal autonomy.

The Architecture of De-Risking: Why the Arm-Rebellions Stack Matters

To understand the weight of this move, look at the hardware. Rebellions is deploying the Rebel 100, which uses a chiplet architecture and HBM3E (High Bandwidth Memory). By pairing this with Arm’s Neoverse CSS V3, the partners are attacking the primary bottleneck of AI inference: the “memory wall.” In traditional GPU setups, the energy cost of moving data between memory, the CPU, and the accelerator often outweighs that of the computation itself.

The industry is moving toward “performance-per-watt” as the KPI that matters most for the bottom line. According to Arm’s investor relations data, the transition to specialized AI CPUs is designed to optimize workload orchestration, reducing the latency that plagues real-time telecom applications.

One-sentence reality check: Efficiency is the only way to scale AI without overloading the power grid.

This integration is particularly aggressive because it targets the “inference” side of the house. While the world obsessed over training models in 2023 and 2024, the 2026 fiscal landscape is all about the cost of running those models at scale. For SK Telecom, deploying their proprietary A.X K1 foundation model on this optimized hardware isn’t just a technical win—it’s an OpEx reduction strategy.

A view common among venture investors in AI silicon, offered here as a summary of sentiment rather than a sourced quotation, is that the transition from monolithic GPU clusters to heterogeneous AI compute, where NPUs handle the heavy lifting and Arm-based CPUs manage the orchestration, is the only viable path for sovereign entities to avoid total vendor lock-in.

As these nations and telcos build out these independent stacks, they face a nightmare of regulatory compliance and intellectual property shielding. This is where the demand for specialized technology law firms spikes, as sovereign AI requires rigorous data residency frameworks and complex licensing agreements that differ wildly from standard cloud SLAs.

The Macro Explainer: Three Shifts Redefining the AI Value Chain

- The Death of the General-Purpose Monopoly: We are seeing the rise of “Purpose-Built Silicon.” By optimizing for inference rather than training, Rebellions is carving out a niche that ignores the training-market noise and focuses on the actual delivery of AI services. This disrupts the revenue multiples previously enjoyed by chip giants who relied on a “one size fits all” hardware strategy.

- The Sovereignty Premium: Governments in Asia and Europe are no longer comfortable hosting their national intelligence on foreign-owned cloud clusters. The “Sovereign AI” trend is driving a surge in domestic data center construction, shifting CapEx from software subscriptions to physical hardware ownership.

- The Energy-Efficiency Pivot: With air-cooled operation becoming a requirement for telecom edge sites, the move away from liquid-cooled, power-hungry GPU racks is a necessity. The RebelCard™’s focus on power efficiency directly impacts the EBITDA margins of telcos by lowering the cooling and electricity overhead of their AI hubs.

The financial implications are clear. If Rebellions can prove that their stack delivers 80% of the performance of a flagship GPU at 30% of the power cost, they don’t just have a product—they have a market-shifting utility.
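To make the arithmetic behind that hypothetical explicit, here is a minimal sketch in Python. The 80% and 30% figures are the article's illustrative scenario, not measured results, and the function and variable names are assumptions introduced only for this example.

```python
def perf_per_watt_gain(relative_performance: float, relative_power: float) -> float:
    """Performance-per-watt ratio versus a baseline normalized to 1.0 / 1.0."""
    if relative_power <= 0:
        raise ValueError("relative_power must be positive")
    return relative_performance / relative_power


# Hypothetical scenario from the text: 80% of flagship-GPU performance
# at 30% of the power. These are illustrative inputs, not benchmarks.
relative_performance = 0.80
relative_power = 0.30

gain = perf_per_watt_gain(relative_performance, relative_power)

# Energy needed per unit of work, relative to the baseline
# (power divided by throughput).
energy_per_unit = relative_power / relative_performance

print(f"Performance per watt: {gain:.2f}x the baseline")
print(f"Energy per unit of work: {energy_per_unit:.1%} of the baseline")
```

Under those assumed inputs, the stack would deliver roughly 2.7 times the performance per watt and use a little over a third of the energy per unit of work, which is why the power figure matters more than the raw performance gap.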

However, the supply chain remains the “X-factor.” Per regional industrial reports in Asia, the availability of HBM3E remains a critical bottleneck. Any disruption in the SK Hynix or Samsung supply chain could freeze this collaboration’s rollout, regardless of how polished the software stack is.

Companies attempting to scale these hardware deployments often get bogged down in procurement, leading them to engage global supply chain optimization firms to secure the components and chiplet supply necessary to hit their Q3 and Q4 deployment targets.

The Fiscal Horizon: Beyond the Press Release

Looking toward the next several fiscal quarters, the success of this alliance will be measured by the “commercial deployment” phase mentioned by the partners. The validation in SKT’s live environment is the proof-of-concept; the real revenue engine will be the expansion into the broader Asian public sector. If this model replicates in Japan or India, we are looking at a fundamental shift in the global AI balance of power.

The market is currently pricing AI companies on “hype cycles,” but the smart money is moving toward “infrastructure efficiency.” The shift from training to inference is where the actual cash flow lives. Those who control the most efficient way to run a model—not the most expensive way to train one—will dominate the 2027-2030 cycle.

As the complexity of these sovereign stacks grows, the need for vetted, high-tier operational partners becomes paramount. Whether it is securing the legal perimeter of a national AI project or optimizing the physical data center footprint, the winners will be those who can integrate these disparate technologies into a cohesive business strategy.