NASA’s asteroid Bennu sample reveals a hidden chemical patchwork

Bennu Sample Data Integrity: A Case for Secure AI Pipelines in Astrochemistry

NASA returned rocks from Bennu. The PR machine calls it a discovery of life’s ingredients. Engineers see a high-fidelity data integrity challenge. Analyzing nanoscale chemical regions requires machine learning pipelines that are as secure as they are accurate. If the training data gets poisoned, the model hallucinates origins.

The Tech TL;DR:

- Nanoscale spectroscopy generates terabytes of sensitive spectral data requiring SHA-256 verification chains.

- AI classification models used for chemical mapping face potential adversarial poisoning risks during training.

- Enterprise-grade isolation enclaves are mandatory to prevent cross-contamination between sample data and public networks.

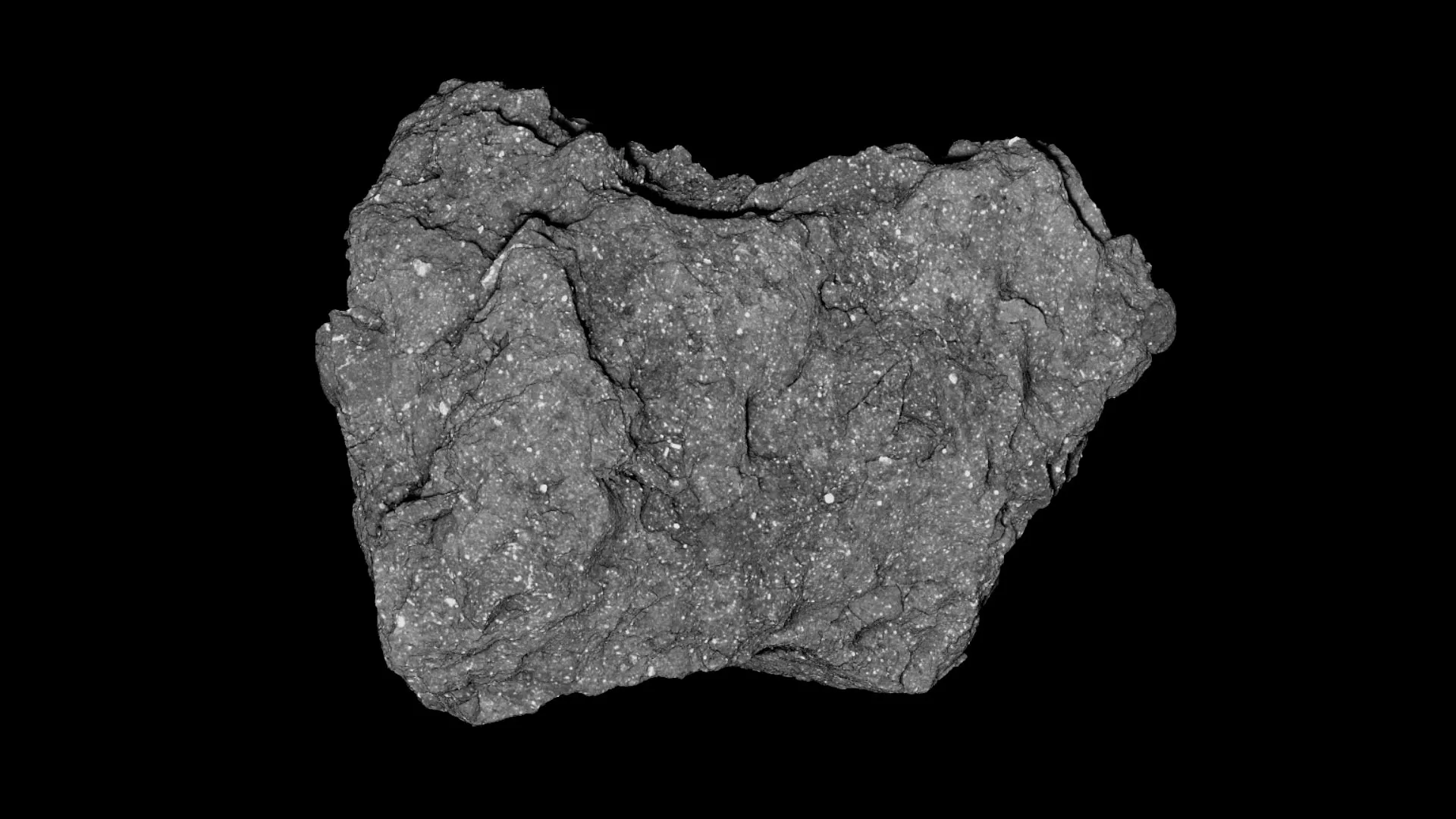

The OSIRIS-REx mission delivered sample OREX-800066-3 to Earth in September 2023. Mehmet Yesiltas and his team used nanoscale infrared and Raman spectroscopy to map chemical domains at 20-nanometer resolution. This isn’t just geology; it’s a massive data processing workflow. Each spectral scan produces high-dimensional vectors that feed into classification algorithms to distinguish aliphatic organics from carbonate minerals. The identification of three distinct chemical regions shows the data pipeline works, but it also exposes the attack surface.

Scientific instrumentation now relies on the same stack as enterprise SaaS. Spectrometers connect to local processing units, often running Linux-based kernels that parse raw photon counts into usable JSON or HDF5 formats. NASA’s open-source repositories show a heavy reliance on Python-based data science stacks. When you introduce AI to classify these chemical signatures, you inherit the entire AI security threat model. A compromised node in the analysis cluster could subtly shift weightings in the neural network, leading to false positives regarding nitrogen-rich compounds.
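One way to notice a silently shifted weight is to fingerprint the model after every approved training run and compare before each inference job. The sketch below is illustrative only: it assumes weights can be exported as flat lists of floats keyed by layer name, and the layer names and values are hypothetical, not taken from the Bennu study.

```python
import hashlib
import struct


def fingerprint_weights(weights):
    """Return a SHA-256 hex digest over named weight vectors.

    `weights` maps a layer name to a flat list of floats. This is a
    hypothetical stand-in for whatever tensor format a real classifier uses.
    """
    digest = hashlib.sha256()
    for name in sorted(weights):  # sorted so dict ordering cannot change the digest
        name_bytes = name.encode("utf-8")
        values = weights[name]
        # Length prefixes keep adjacent fields from blurring into each other.
        digest.update(struct.pack("<Q", len(name_bytes)))
        digest.update(name_bytes)
        digest.update(struct.pack("<Q", len(values)))
        digest.update(struct.pack(f"<{len(values)}d", *values))
    return digest.hexdigest()


# Hypothetical weights recorded after an approved training run.
baseline = {"dense_1": [0.12, -0.40, 0.93], "dense_2": [1.5, 0.0]}
reference = fingerprint_weights(baseline)

# The same model with one weight nudged in the eighth decimal place.
tampered = {"dense_1": [0.12, -0.40, 0.93000001], "dense_2": [1.5, 0.0]}

print(fingerprint_weights(baseline) == reference)  # True
print(fingerprint_weights(tampered) == reference)  # False
```

The fingerprint only helps if the reference digest is stored somewhere the analysis cluster cannot write to.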

The Integrity Threat Model

Consider the blast radius of corrupted spectral data. If an adversary or a malware strain alters the baseline calibration files, the resulting chemical map becomes unreliable. This mirrors the vulnerabilities tracked in the AI Cyber Authority network, where the intersection of artificial intelligence and cybersecurity defines the risk profile. The Bennu study highlights nanoscale heterogeneity. Detecting this requires consistent, untampered data ingestion. Data dropped or corrupted in transit from spectrometer to storage, if it goes undetected, could introduce artifacts that are misread as chemical variance.
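Calibration files are a natural place to start, because they change rarely and everything downstream depends on them. A minimal sketch, assuming the files live in a single directory and a manifest of known-good digests is kept somewhere trusted; the file names and contents here are made up for the demonstration.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path):
    """Stream a file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(65536), b""):
            digest.update(block)
    return digest.hexdigest()


def build_manifest(directory):
    """Map each file name in `directory` to its SHA-256 digest."""
    return {p.name: sha256_of(p) for p in sorted(Path(directory).iterdir()) if p.is_file()}


def find_mismatches(directory, manifest):
    """Return names of files that are missing or whose digest has changed."""
    bad = []
    for name, expected in manifest.items():
        path = Path(directory) / name
        if not path.is_file() or sha256_of(path) != expected:
            bad.append(name)
    return sorted(bad)


# Demonstration with throwaway files standing in for real calibration data.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "baseline.cal").write_bytes(b"dark-current: 0.0021\n")
    (Path(tmp) / "wavenumber.cal").write_bytes(b"offset: 1.5\n")
    manifest = build_manifest(tmp)
    clean = find_mismatches(tmp, manifest)

    # Simulate tampering with one calibration value.
    (Path(tmp) / "baseline.cal").write_bytes(b"dark-current: 0.0031\n")
    after_tamper = find_mismatches(tmp, manifest)

print(clean)         # []
print(after_tamper)  # ['baseline.cal']
```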

Industry hiring trends reflect this shift. Major tech firms like Microsoft AI and Cisco are actively recruiting Directors of AI Security. They understand that foundation models and scientific classifiers share the same vulnerability: dependency on clean training data. For a lab handling pristine extraterrestrial material, the security posture must exceed standard SOC 2 compliance. It requires air-gapped environments with strict hash verification at every ingestion point.
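Hash verification at every ingestion point can be linked into a chain, so that rewriting one custody record invalidates everything after it. The following is a sketch under stated assumptions: the stage names, the record fields, and the all-zero genesis value are illustrative choices, not a format used by any lab mentioned here.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record):
    """Hash the fields that define a custody record, in a stable order."""
    body = {k: record[k] for k in ("stage", "file_sha256", "prev_hash")}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(chain, stage, file_sha256):
    """Append a custody record that commits to the previous record's hash."""
    prev_hash = chain[-1]["record_hash"] if chain else GENESIS
    record = {"stage": stage, "file_sha256": file_sha256, "prev_hash": prev_hash}
    record["record_hash"] = record_hash(record)
    chain.append(record)
    return record


def verify_chain(chain):
    """Return True only if every record is intact and correctly linked."""
    prev_hash = GENESIS
    for record in chain:
        if record["prev_hash"] != prev_hash or record["record_hash"] != record_hash(record):
            return False
        prev_hash = record["record_hash"]
    return True


chain = []
scan_digest = hashlib.sha256(b"example spectral payload").hexdigest()
for stage in ("spectrometer", "staging-server", "training-queue"):
    append_record(chain, stage, scan_digest)
print(verify_chain(chain))  # True

# Rewriting a middle record breaks verification from that point on.
chain[1]["file_sha256"] = hashlib.sha256(b"altered payload").hexdigest()
print(verify_chain(chain))  # False
```

An attacker who can rewrite the whole chain can still recompute every hash, so the latest record hash needs to be anchored outside the system being protected.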

“Scientific AI models are only as robust as their data supply chain. We are seeing a convergence where lab instrumentation needs the same zero-trust architecture as a fintech ledger.” — Dr. Elena Rostova, Lead Security Architect at Quantum Verify Labs.

Implementing this level of security isn’t optional. It demands specialized oversight. Organizations handling sensitive data streams should engage cybersecurity auditors and penetration testers to validate their ingestion pipelines. The goal is to ensure that the “chemical patchwork” identified in the study is a physical reality, not a digital artifact caused by a man-in-the-middle attack on the local network segment connecting the spectrometer to the analysis server.
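A plain hash does not stop a man-in-the-middle who can replace both the file and its published digest. A keyed MAC closes that gap. The sketch below uses Python's standard `hmac` module and assumes a shared secret has been provisioned out of band on both the spectrometer host and the analysis server; the payload is a made-up stand-in for a spectral record.

```python
import hashlib
import hmac
import secrets


def sign_payload(key, payload):
    """Return an HMAC-SHA256 tag (hex) for `payload` under `key`."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_payload(key, payload, tag):
    """Check the tag in constant time to avoid leaking match position."""
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)


# Assumption: in practice the key comes from a secrets manager, not generated inline.
key = secrets.token_bytes(32)
payload = b"wavenumber=1650;intensity=0.482"
tag = sign_payload(key, payload)

intact = verify_payload(key, payload, tag)
modified = verify_payload(key, b"wavenumber=1650;intensity=0.999", tag)

print(intact)    # True
print(modified)  # False
```

Without the key, an attacker on the network segment cannot produce a valid tag for altered data.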

Implementation: Hash Verification Protocol

To maintain chain-of-custody for digital sample data, engineers should implement continuous hash verification. Below is a Python snippet demonstrating how to validate the integrity of spectral data files before they enter the ML training queue. This prevents poisoned data from skewing the classification of organic-mineral regions.

```python
import hashlib


def verify_sample_integrity(file_path, expected_hash):
    """
    Validates spectral data integrity using SHA-256.
    Critical for preventing AI model poisoning in astrochemistry pipelines.
    """
    sha256_hash = hashlib.sha256()
    try:
        with open(file_path, "rb") as f:
            # Read in 4 KiB blocks so large spectral dumps are never loaded whole.
            for byte_block in iter(lambda: f.read(4096), b""):
                sha256_hash.update(byte_block)
    except FileNotFoundError:
        return {"status": "MISSING"}

    computed_hash = sha256_hash.hexdigest()
    if computed_hash == expected_hash:
        return {"status": "VALID", "hash": computed_hash}
    return {"status": "CORRUPTED", "computed": computed_hash}


# Example usage for OREX-800066-3 spectral dump
sample_data = "/mnt/secure/bennu/OREX-800066-3_spec.h5"
master_hash = "a3f5...[redacted]...9c2b"
print(verify_sample_integrity(sample_data, master_hash))
```

This script represents the bare minimum for data hygiene. In a production environment, this logic sits within a secure enclave managed by dedicated infrastructure providers. The latency introduced by encryption and hashing is negligible compared to the risk of publishing flawed scientific conclusions based on tampered data. As the AI Security Category Launch Map indicates, the vendor landscape is maturing to support these specific needs, with over 96 vendors now mapping security to AI workflows.

Operationalizing Trust in Scientific Compute

The discovery that water interacted unevenly with Bennu’s parent body is significant. It implies complex fluid dynamics models must be run against this data. These models are compute-intensive, often leveraging GPU clusters. Securing these clusters is the job of managed service providers specializing in high-performance computing security. They ensure that the Kubernetes orchestration layer managing the spectral analysis jobs is hardened against container escapes.
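Part of that hardening can be checked mechanically before a job is ever scheduled. The sketch below audits a pod spec, represented as a plain Python dict, for settings that commonly widen the container-escape surface. The field names follow the Kubernetes pod and container `securityContext` schema; the specs themselves, including the image names, are hypothetical, and the check is simplified in that it ignores pod-level `securityContext` defaults.

```python
def audit_pod_spec(pod_spec):
    """Return a list of findings for settings that widen the escape surface."""
    findings = []
    # Sharing host namespaces gives a container visibility into the node.
    for flag in ("hostPID", "hostNetwork", "hostIPC"):
        if pod_spec.get(flag):
            findings.append(f"pod: {flag} is enabled")
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        if ctx.get("privileged"):
            findings.append(f"{name}: privileged is true")
        # Treated as risky unless explicitly disabled.
        if ctx.get("allowPrivilegeEscalation", True):
            findings.append(f"{name}: allowPrivilegeEscalation is not false")
        if not ctx.get("runAsNonRoot"):
            findings.append(f"{name}: runAsNonRoot is not true")
        if not ctx.get("readOnlyRootFilesystem"):
            findings.append(f"{name}: readOnlyRootFilesystem is not true")
    return findings


hardened = {
    "containers": [{
        "name": "spectral-analysis",
        "image": "registry.example/spectral:1.0",  # hypothetical image
        "securityContext": {
            "privileged": False,
            "allowPrivilegeEscalation": False,
            "runAsNonRoot": True,
            "readOnlyRootFilesystem": True,
        },
    }]
}

risky = {
    "hostPID": True,
    "containers": [{"name": "debug-shell", "securityContext": {"privileged": True}}],
}

print(audit_pod_spec(hardened))  # []
for finding in audit_pod_spec(risky):
    print(finding)
```

A check like this complements, rather than replaces, cluster-side admission policy.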

The preservation of fragile organic molecules mentioned in the study parallels the preservation of fragile data states in memory. Just as nitrogen-rich compounds can degrade, unencrypted data in transit can be intercepted or modified. The Security Services Authority outlines frameworks for verifying service providers who can handle this level of regulatory and technical scrutiny. Whether the threat is biological contamination or digital injection, the containment protocol remains the same: isolate, verify, and audit.

We are moving toward an era where scientific discovery is inseparable from cybersecurity posture. The Bennu samples are safe in a vault, but their digital twins live on networks. Ensuring those networks remain uncompromised requires the same rigor applied to the physical handling of the asteroid fragments. Without it, we aren’t studying the early Solar System; we’re studying the output of a compromised classifier.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.