jai: Lightweight Sandbox for Safe AI Agents

Sandboxing the AI Agent: Why jai is the New sudo for Local LLMs

The era of the “trust but verify” workflow is dead. In 2026, giving an AI agent access to your local filesystem without a containment strategy is architectural negligence. We are seeing a surge in “agent-induced incidents”—scripts generated by LLMs that recursively delete working trees or exfiltrate .ssh keys because the model hallucinated a file path. Enter jai, a lightweight sandboxing utility from the Stanford Secure Computer Systems (SCS) group that attempts to solve the friction between security and usability.

The Tech TL;DR:

- Zero-Overhead Containment: jai utilizes Linux namespaces and overlayfs to isolate processes without the heavy startup latency of Docker containers or VMs.

- Copy-on-Write Protection: Your home directory remains read-only by default; changes are captured in a temporary overlay, preventing permanent data corruption.

- Three Security Tiers: Modes range from “Casual” (integrity focus) to “Strict” (UID separation), acknowledging that not every task requires military-grade isolation.

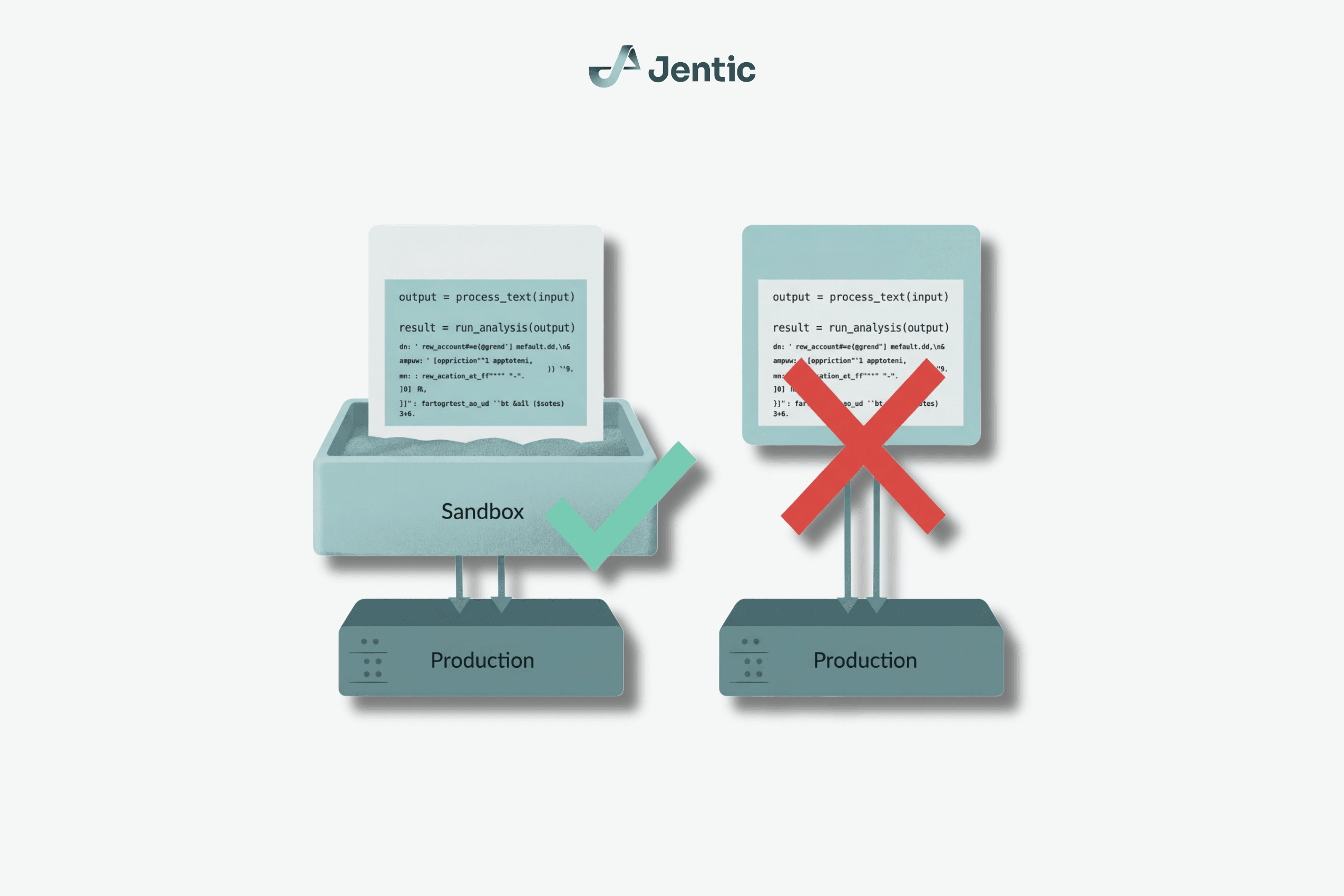

The core problem jai addresses is the “privilege escalation” inherent in modern agentic workflows. When you let an LLM run shell commands, you are effectively granting it the full privileges of your user account. Traditional containerization via Docker is often overkill for a quick one-off script; the overhead of building an image and managing volumes introduces latency that kills the “flow state” of development. jai strips this down to the kernel primitives. It doesn’t build an image; it builds a wall.

The Architecture of “Casual” Safety

From a systems engineering perspective, jai is fascinating because it leans heavily on the Linux kernel’s existing namespace capabilities rather than introducing a new hypervisor layer. It operates similarly to bubblewrap but abstracts the complex flag management into a single binary. The “Casual” mode, which is the default, mounts your current working directory (CWD) as writable while placing the rest of your home directory behind a copy-on-write (CoW) overlay.

This means that if an AI agent decides to run rm -rf ~, it is actually deleting files in a transient overlay filesystem. Once the session ends, that overlay is discarded, and your actual home directory remains pristine. This is a critical distinction for enterprise developers who need to test untrusted code without spinning up a full ephemeral environment.
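The discard-on-exit behavior can be imitated without any mount privileges. The following is a minimal simulation, not jai's implementation: it assumes a plain directory copy in place of overlayfs, and `simulate_session` and the file names are hypothetical.

```shell
#!/bin/sh
set -eu

# Run a destructive command against a throwaway copy of a directory and
# report what survived. A simulation of the idea only: jai uses overlayfs.
simulate_session() {
    real_home=$(mktemp -d)   # stands in for the real home directory
    scratch=$(mktemp -d)     # stands in for the temporary overlay
    echo "important notes" > "$real_home/notes.txt"

    # A real overlay copies a file only when it is first modified; copying
    # everything up front keeps this sketch free of mount privileges.
    cp -R "$real_home/." "$scratch/"

    # The "agent" wipes everything it can see, which is only the copy.
    ( cd "$scratch" && rm -rf ./* )

    echo "sandbox view: $(ls -A "$scratch" | wc -l | tr -d ' ') files"
    echo "real home:    $(cat "$real_home/notes.txt")"

    # End of session: discard the scratch layer (and this demo's fake home).
    rm -rf "$scratch" "$real_home"
}

simulate_session
```

The destructive command succeeds from the agent's point of view, yet the original file is still readable afterwards, which is the integrity property the overlay is meant to provide.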

“The gap between YOLO execution and full containerization is where most security failures happen. jai closes that gap by making the secure path the path of least resistance.”

However, skepticism is warranted. The documentation explicitly states that “Casual mode does not protect confidentiality.” This is a vital distinction for CTOs evaluating this for production environments. While integrity is preserved (files aren’t deleted), an agent running in Casual mode can still read your .bashrc or other readable files in your home directory. For high-security contexts, the “Strict” mode is required, which runs the process under an unprivileged jai user with a completely empty private home.
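One rough way to gauge that exposure is to list which sensitive files your own user can already read, since a process running with your identity can read the same ones. The sketch below is illustrative only: `readable_secrets` is a hypothetical helper and the candidate paths are an assumed, non-exhaustive list.

```shell
#!/bin/sh
set -eu

# Print each candidate path under the given home directory that the current
# user can read. The candidate list is illustrative, not exhaustive.
readable_secrets() {
    home_dir=$1
    for rel in .bashrc .ssh/id_ed25519 .ssh/id_rsa .aws/credentials .netrc; do
        if [ -r "$home_dir/$rel" ]; then
            printf '%s\n' "$rel"
        fi
    done
}

# Check the real home directory (falls back to "." if HOME is unset).
readable_secrets "${HOME:-.}"
```

Anything this prints is a file whose confidentiality would have to be protected by something stronger than an integrity-only sandbox.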

Comparative Analysis: jai vs. the Incumbents

To understand where jai fits in the stack, we have to look at the alternatives. Docker is the industry standard for reproducibility, but it is heavy. bubblewrap is powerful but verbose. chroot is fundamentally insecure for sandboxing as it lacks namespace isolation.

| Feature | jai (Casual) | Docker | bubblewrap |
|---|---|---|---|
| Startup Latency | ~10ms (Binary exec) | ~1-2s (Daemon + Image load) | ~10ms (Binary exec) |
| Home Dir Access | Read-only (CoW Overlay) | Requires Volume Mount | Manual Bind Mount |
| Configuration | Zero-config | Dockerfile / Compose | Complex CLI Flags |
| Use Case | Ad-hoc AI Agents | Microservices / CI | Custom Sandboxing |
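To make the bubblewrap flag complexity concrete, here is a sketch of the kind of command line you would have to assemble by hand. It is only a rough approximation of a Casual-style setup: the root filesystem is mounted read-only (writes outside the working directory fail instead of landing in an overlay), and `bwrap_cmdline` is a hypothetical helper that prints the command for inspection without running it.

```shell
#!/bin/sh
set -eu

# Print (not run) a bwrap command line that gives the wrapped command a
# read-only root, a writable working directory, and fresh /dev, /proc, /tmp.
bwrap_cmdline() {
    workdir=$1; shift
    printf '%s ' bwrap \
        --ro-bind / / \
        --bind "$workdir" "$workdir" \
        --dev /dev \
        --proc /proc \
        --tmpfs /tmp \
        --unshare-pid \
        --die-with-parent \
        --chdir "$workdir" \
        "$@"
    printf '\n'
}

bwrap_cmdline "$PWD" ls -la
```

Every one of these flags is a decision the user has to get right on each invocation, which is the burden a single-binary wrapper is meant to remove.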

The latency metric is the killer feature here. In a continuous integration pipeline or a rapid prototyping session, the 1-2 second delay of spinning up a Docker container adds up. jai executes almost instantly because it leverages the host kernel directly without a daemon. This makes it viable for wrapping every single AI interaction, a practice that security auditors are beginning to recommend.
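The figures in the table are easy to check on your own machine. The sketch below is one hedged way to do it: `avg_ms` is a hypothetical helper, it relies on GNU date's `%N` (nanoseconds), and the commented-out lines assume that jai and Docker are installed and that jai accepts an arbitrary command after its name, as in the article's own example.

```shell
#!/bin/sh
set -eu

# Average wall-clock time, in whole milliseconds, of running a command
# RUNS times. Relies on GNU date's %N, so this is Linux-specific.
avg_ms() {
    runs=$1; shift
    start=$(date +%s%N)
    i=0
    while [ "$i" -lt "$runs" ]; do
        "$@" >/dev/null 2>&1 || true
        i=$((i + 1))
    done
    end=$(date +%s%N)
    echo $(( (end - start) / runs / 1000000 ))
}

echo "baseline: $(avg_ms 20 true) ms"
# Uncomment on a machine where the tools are installed:
# echo "jai:      $(avg_ms 20 jai true) ms"
# echo "docker:   $(avg_ms 20 docker run --rm alpine true) ms"
```

Because the helper rounds down to whole milliseconds and includes process spawn time, treat the output as a coarse comparison and not a benchmark.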

Implementation and Enterprise Triage

For development teams looking to integrate this immediately, the implementation is trivial. There is no need for a Dockerfile. You simply prefix your command. For example, if you are using a local coding agent like claude-code or a custom Python script:

    # Standard risky execution
    $ claude-code "Refactor the auth module"

    # Secure execution with jai
    $ jai claude-code "Refactor the auth module"

This simple prefix changes the security posture of the entire session. However, for larger organizations, relying on individual developers to remember to type jai is a fragile strategy. This is where the role of cybersecurity auditors and managed service providers becomes critical. Enterprises should be looking to enforce this at the policy level, potentially wrapping the jai binary in a wrapper script that is aliased to common AI tools in the corporate shell environment.
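A policy-level wrapper of this kind might look like the following snippet for a shared shell profile. This is a sketch under assumptions, not a vetted enterprise control: `sandboxed` is a hypothetical function name, the alias target simply mirrors the article's example, and the only jai usage assumed is the plain prefix form shown above.

```shell
# Hypothetical snippet for a shared shell profile (e.g. /etc/profile.d/).

# Run a command under jai, and refuse to run it at all if jai is missing,
# so the unsandboxed path is never the silent fallback.
sandboxed() {
    if command -v jai >/dev/null 2>&1; then
        command jai "$@"
    else
        echo "refusing to run '$1': jai is not installed" >&2
        return 127
    fi
}

# Route the AI tool through the wrapper in interactive shells.
alias claude-code='sandboxed claude-code'
```

Failing closed is a deliberate choice here: a wrapper that quietly runs the tool unsandboxed when jai is absent would recreate the problem it is supposed to solve. An alias is also easy for a user to bypass, so this is a nudge, not an enforcement boundary.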

As AI agents begin to interact with more sensitive infrastructure, the “Strict” mode of jai should be mandatory. This mode isolates the process UID, ensuring that even if the agent escapes the filesystem jail, it cannot access files owned by the real user. Organizations with strict SOC 2 compliance requirements should consult with compliance specialists to determine if jai's namespace isolation meets their specific regulatory thresholds for data segregation.

The Verdict: A Necessary Stopgap

jai is not a silver bullet. The developers at Stanford SCS are clear: this is not a hardened container runtime designed to defend against a determined adversary with root access. It is a “blast radius reducer.” It stops the accidental rm -rf and the hallucinated config overwrite. It does not stop a sophisticated supply chain attack that targets the kernel itself.

However, in the current landscape of 2026, where AI agents are becoming standard members of the dev team, jai represents a necessary evolution in local security hygiene. It acknowledges that developers will not stop using powerful tools just because they are dangerous; instead, we must make the safe way to use them the easiest way. As the ecosystem matures, we expect to see jai-like primitives baked directly into IDEs and shell environments, making sandboxing invisible and mandatory.
