Google Unusual Traffic Detected Network Security Verification

The global journalism landscape is undergoing a seismic shift toward the “Newsroom Model,” where specialized AI agents manage diverse content streams, yet this automation faces immediate friction from robust digital verification systems like CAPTCHA protocols. As news organizations from The Times of India to Western outlets deploy real-time personalization engines, they encounter increased security barriers designed to distinguish human editors from automated scripts. This technological tension highlights a critical need for verified cybersecurity infrastructure and specialized media law counsel to navigate the evolving boundaries of automated reporting.

We are witnessing the industrialization of the editorial process. It is no longer theoretical. The “Newsroom Model” is here, deploying specialized AI agents to build diverse teams that operate at speeds human editors cannot match. But there is a cost. When these automated systems attempt to aggregate, verify, or distribute news, they trigger the very defenses designed to protect the open web.

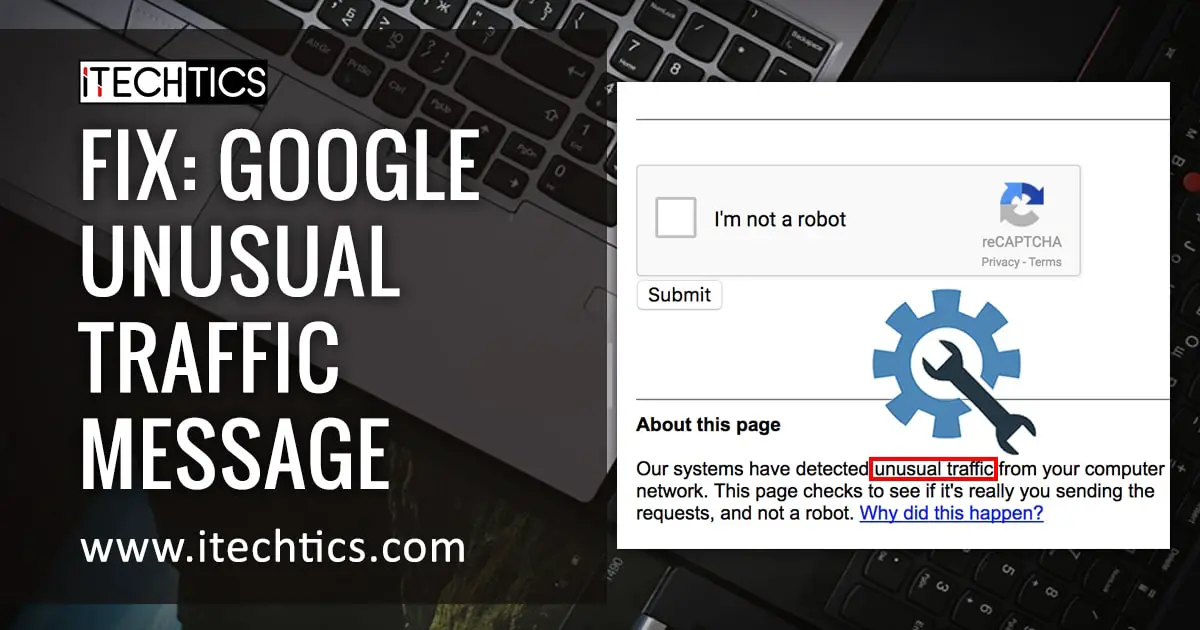

The error message is familiar to many, but it is symbolic. “Our systems have detected unusual traffic… Not a robot.” This is not just a technical glitch; it is the frontline of a new digital border war. As we move deeper into 2026, the line between a helpful news aggregator and a malicious bot has blurred, creating a complex problem for media houses relying on the Newsroom Model of specialized AI agents.

The Friction of Automation in Real-Time Reporting

Consider the scale. Major outlets are now attempting to bring real-time personalization to over 1,500 daily news stories. This volume requires automation. However, when an AI agent pulls data to populate a personalized digest, its request pattern often looks no different from that of a hostile scraper, and it trips security alarms. This creates a bottleneck. The very tools designed to improve efficiency are being throttled by the security protocols of the sources they rely on.
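One common way to make an agent look less like a flood of bot traffic is simply to slow it down. The sketch below is illustrative only, not any outlet's actual tooling: the `HostThrottle` class, its two-second default interval, and its method names are all assumptions introduced here. It enforces a minimum gap between requests to the same host and leaves the actual fetching to the caller.

```python
import time
from urllib.parse import urlsplit


class HostThrottle:
    """Hypothetical helper: enforce a minimum delay between requests per host."""

    def __init__(self, min_interval_s=2.0, clock=time.monotonic, sleep=time.sleep):
        # The 2-second default is an arbitrary illustrative value.
        self.min_interval_s = min_interval_s
        self._clock = clock      # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last_request = {}  # host -> time at which the last request was released

    def wait(self, url):
        """Pause until the host of `url` may be contacted again; return seconds waited."""
        host = urlsplit(url).netloc
        now = self._clock()
        last = self._last_request.get(host)
        delay = 0.0
        if last is not None:
            delay = max(0.0, self.min_interval_s - (now - last))
        if delay > 0:
            self._sleep(delay)
        # Record when this request is actually released, including any pause.
        self._last_request[host] = now + delay
        return delay
```

A caller would invoke `throttle.wait(url)` immediately before each fetch. Pacing alone does not guarantee access, since a source may still block automated clients on other grounds.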

This is not merely an IT inconvenience. It is a business continuity risk. If your primary method of content ingestion is flagged as “unusual traffic,” your news flow stops. The problem extends beyond simple access; it touches on the integrity of the data pipeline. When a system blocks an IP address—like the 2403:6b80 series often associated with large data centers—it disrupts the supply chain of information.
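To see how coarse a range-based block can be, consider a minimal sketch using Python's standard `ipaddress` module. It assumes that the "2403:6b80 series" means the IPv6 prefix `2403:6b80::/32`; the prefix length is a guess made for illustration, and the `BLOCKED_PREFIXES` list and `is_in_blocked_range` function are hypothetical names.

```python
import ipaddress

# Assumption: the "2403:6b80 series" is read here as the /32 prefix below.
BLOCKED_PREFIXES = [ipaddress.ip_network("2403:6b80::/32")]


def is_in_blocked_range(address, prefixes=BLOCKED_PREFIXES):
    """Return True if `address` falls inside any of the given network prefixes."""
    ip = ipaddress.ip_address(address)
    # Compare only within the same IP version (IPv4 vs. IPv6).
    return any(ip.version == net.version and ip in net for net in prefixes)


print(is_in_blocked_range("2403:6b80:1::5"))  # inside the assumed prefix
print(is_in_blocked_range("2001:db8::1"))     # documentation range, outside it
```

Under that assumption, every address sharing the prefix is caught by the same rule, which is why a legitimate newsroom agent on shared data-center infrastructure can be blocked along with its neighbors.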

The industry is responding by doubling down on metadata and classification. The Associated Press, for instance, has standardized AP classification metadata to ensure that automated systems understand the context of Subject, Geography, and Person. This structured data helps legitimate agents identify themselves, but it requires a level of technical sophistication that many smaller newsrooms lack.
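In practice, structured classification of this kind amounts to attaching typed fields to every story and checking that they are filled in. The following is a rough sketch of that idea and not the AP's actual schema: the `StoryMetadata` class, its field names, and the `missing_facets` check are hypothetical, built only around the three facets named above.

```python
from dataclasses import dataclass, field


@dataclass
class StoryMetadata:
    """Hypothetical record carrying the three facets named in the text."""

    headline: str
    subjects: list = field(default_factory=list)
    geographies: list = field(default_factory=list)
    persons: list = field(default_factory=list)

    def missing_facets(self):
        """List the facets that have no values, so incomplete items can be held back."""
        facets = {
            "subject": self.subjects,
            "geography": self.geographies,
            "person": self.persons,
        }
        return [name for name, values in facets.items() if not values]


story = StoryMetadata(
    headline="Example headline",
    subjects=["Elections"],
    geographies=["India"],
)
print(story.missing_facets())  # facets still empty for this story
```

A pipeline could refuse to distribute any story whose `missing_facets()` list is not empty, which is the kind of discipline smaller newsrooms may struggle to staff.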

“The challenge isn’t just generating the story; it’s proving the story was gathered ethically and technically without triggering the defenses of the source infrastructure. We are building cars that drive themselves, but the roads are still guarded by toll booths that only accept human hands.”

This sentiment echoes the findings from recent industry toolkits, including the Beyond Print Toolkit on audience personas. The data suggests that while audiences crave personalization, the backend machinery required to deliver it is fragile. When that machinery breaks, the trust of the audience breaks with it.

Legal and Infrastructure Implications

The ramifications of these blocks are legal as much as they are technical. When an automated agent is blocked for “violating Terms of Service,” it raises questions about liability. Is the news organization responsible for the actions of its AI agent? In jurisdictions with strict data sovereignty laws, the answer is increasingly “yes.”

Media organizations are now finding themselves in a position where they must audit their own algorithms. The risk of being labeled a “bad actor” by search engines or content providers can lead to de-indexing or permanent bans. This is where the problem meets the solution. The modern newsroom cannot just hire journalists; it must hire digital compliance officers.

For news directors facing these verification walls, the immediate solution often lies in external expertise. Navigating the penalties of a Terms of Service violation is a logistical minefield. Developers and editors are increasingly consulting top-tier media law and intellectual property attorneys to shield their assets and define the boundaries of their automated scraping policies.

Securing the Supply Chain of Information

The “Information Gap” here is clear: We have the technology to generate news, but we lack the infrastructure to deliver it without friction. The solution requires a hybrid approach. Human oversight must remain in the loop, not just for editorial judgment, but for technical verification.

The infrastructure supporting these AI agents must also be robust. Relying on shared IP addresses or public proxies is a recipe for disaster, as seen in the error logs citing shared network connections. News organizations need dedicated, verified channels. This has spurred a demand for specialized enterprise cybersecurity and network verification firms that can whitelist legitimate news-gathering traffic.

There is also a growing market for data ethics and compliance consultants. These professionals help newsrooms build “personalized AI news digests that filter bias” without crossing the line into invasive data harvesting that triggers security blocks. They ensure that the personalization engine respects the Terms of Service of the platforms it interacts with.

The Path Forward: Verification as a Service

We are moving toward a future where “Verification as a Service” becomes a standard line item in a newsroom budget. The ability to prove that your traffic is human, or at least authorized, will be as valuable as the story itself. The Times of India’s success with 1,500 daily stories proves the demand is there, but the technical debt is accumulating.

The “Newsroom Model” will survive, but it will evolve. It will become less about raw automation and more about authenticated automation. The agents of the future will carry digital credentials, much like a press pass, to bypass the CAPTCHAs of the world.

Until then, the friction remains. For those managing global directories and news feeds, the lesson is stark. Automation offers scale, but verification offers survival. As we integrate these powerful tools, we must ensure we have the professional support to keep the lines of communication open. The story is only valuable if it can be delivered.

In this new era, your most important hire might not be a writer. It might be the network security specialist who ensures your voice is never silenced by a robot check.