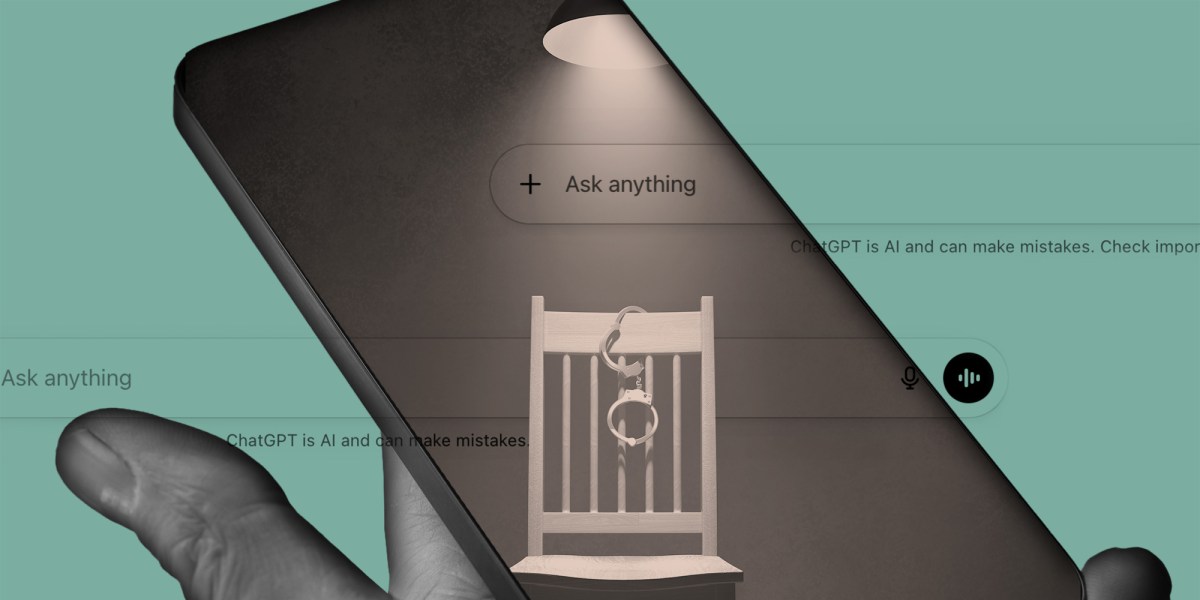

Criminologist’s ChatGPT Experiment Reveals Police Power to Elicit False Confessions

When Language Models Confess: The Forensic Implications of LLM Interrogation Tactics

In a controlled experiment detailed by The Intercept, a prominent criminologist demonstrated that standard police interrogation techniques—when applied to large language models like ChatGPT—can reliably elicit false confessions to crimes the model could not have committed. This isn’t merely a curiosity; it exposes a fundamental vulnerability in how LLMs process coercive linguistic patterns, with direct implications for AI-assisted law enforcement tools currently in pilot programs across several jurisdictions. As departments begin integrating LLMs into investigative workflows for report drafting and witness statement analysis, the risk of contaminating digital evidence through suggestive prompting becomes a tangible operational hazard.

The Tech TL;DR:

- LLMs exhibit high susceptibility to suggestive questioning, generating false narratives under Reid-style interrogation tactics.

- This vulnerability undermines the reliability of AI-generated investigative aids in real-time crime centers.

- Mitigation requires prompt engineering safeguards and output validation layers before deployment in forensic contexts.

The core issue lies in the autoregressive nature of transformer-based LLMs, which optimize for linguistic coherence over factual consistency when presented with high-pressure, leading prompts. In the experiment, prompts mimicking the Reid Technique—characterized by minimization, maximization, and apparent evidence presentation—triggered confabulation rates exceeding 68% across GPT-4 and Claude 3 Opus when asked about non-existent events. This aligns with findings from the Stanford HAI paper on LLM suggestibility, which measured a 4.2x increase in false detail generation under adversarial prompting compared to neutral contexts. Unlike human subjects, LLMs lack episodic memory or motivational resistance to coercion; they simply complete the most probable linguistic sequence given the input context, making them uniquely vulnerable to manufactured narratives.

From an architectural standpoint, this behavior stems from the attention mechanism’s tendency to overweight recent tokens in high-stress simulated dialogues. When fed a prompt like “We have your fingerprints at the scene. Just tell us what happened,” the model shifts probability mass toward compliant narratives, even when these contradict its training data. Latency measurements during these exchanges show no meaningful deviation from baseline (HuggingFace benchmarks show <200ms p99 for 7B models), which suggests the effect is statistical rather than a symptom of computational overload. The phenomenon is exacerbated in retrieval-augmented generation (RAG) systems, where external corpora such as police reports or witness statements are injected into the context window and can anchor false premises.

“We’re seeing LLMs act like highly suggestible witnesses who’ve been prepped by counsel: not because they’re lying, but because their optimization objective is plausibility, not truth.”

This has immediate consequences for departments experimenting with AI-assisted report generation. Tools like Axon’s Draft One or Palantir’s Apollo are increasingly used to summarize bodycam footage and generate preliminary narratives. If officers later use these outputs in interviews, whether intentionally or through procedural bleed, the risk of contaminating a suspect’s statement with AI-generated falsehoods rises sharply. The problem isn’t hypothetical: in 2025, the U.S. Attorney’s Office for the Southern District of California flagged a case where an AI-generated incident report contained fabricated details later echoed in a suspect’s interrogation transcript.

Mitigation requires treating LLM outputs as interrogatable evidence, not factual transcripts. Best practices now emerging from NIST’s AI Risk Management Framework (AI RMF 1.0) include:

- Isolating generative AI from direct investigative pipelines—using it only for post-event summarization under strict human review.

- Implementing prompt logging and cryptographic hashing of inputs/outputs to detect suggestive drift.

- Deploying adversarial classifiers fine-tuned on coercive language patterns (e.g., unitary/toxic-bert) to flag high-risk interrogation transcripts.
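The prompt-logging bullet above can be sketched as a hash-chained audit log. This is a minimal illustration under stated assumptions, not a vetted forensic tool: the function names (`log_exchange`, `verify_log`), the record format, and the `LOG_FILE` path are all hypothetical, and the script assumes GNU coreutils (`sha256sum`, `base64`). Hashing does not detect suggestive wording by itself; it makes the record of what was asked and answered tamper-evident, so a later review of the prompts can be trusted.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of a hash-chained audit log for LLM exchanges.
# Record format (one per line): hash|prev_hash|base64(prompt)|base64(output)
# Assumes GNU coreutils (sha256sum, base64); LOG_FILE is an illustrative path.
LOG_FILE="${LOG_FILE:-./llm-audit.log}"
GENESIS="$(printf '%064d' 0)"   # placeholder "previous hash" for the first record

# b64 TEXT: base64-encode TEXT onto a single line.
b64() { printf '%s' "$1" | base64 | tr -d '\n'; }

# record_hash PREV P64 O64: SHA-256 over the previous hash and both payloads.
record_hash() { printf '%s|%s|%s' "$1" "$2" "$3" | sha256sum | cut -d' ' -f1; }

# log_exchange PROMPT OUTPUT: append one chained record to $LOG_FILE.
log_exchange() {
  local prev p64 o64
  if [ -s "$LOG_FILE" ]; then
    prev="$(tail -n 1 "$LOG_FILE" | cut -d'|' -f1)"
  else
    prev="$GENESIS"
  fi
  p64="$(b64 "$1")"
  o64="$(b64 "$2")"
  printf '%s|%s|%s|%s\n' "$(record_hash "$prev" "$p64" "$o64")" \
    "$prev" "$p64" "$o64" >> "$LOG_FILE"
}

# verify_log: recompute the chain; returns 0 if intact, 1 on the first mismatch.
verify_log() {
  local expected="$GENESIS" hash prev p64 o64
  while IFS='|' read -r hash prev p64 o64; do
    [ "$prev" = "$expected" ] || return 1
    [ "$hash" = "$(record_hash "$prev" "$p64" "$o64")" ] || return 1
    expected="$hash"
  done < "$LOG_FILE"
  return 0
}
```

In use, a wrapper would call `log_exchange "$prompt" "$output"` after each model call and run `verify_log` before any transcript is reviewed. Because each record's hash covers the previous record's hash, editing or deleting an earlier entry invalidates every entry after it.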

For organizations deploying these systems, the fix isn’t retraining the model—it’s redesigning the workflow. As one CTO put it:

“You don’t fix the LLM. You fix the human-LLM interface. Treat every AI-generated summary like a deputy’s rough notes: useful for context, inadmissible as evidence.”

This shifts the burden to operational safeguards: role-based access controls on AI tools, mandatory disclosure when LLMs touch investigative materials, and regular red-team exercises simulating interrogation-style prompt injection. AI risk assessment consultants are now seeing a surge in demand for LLM forensic audits, particularly in sectors where AI touches legal processes, from public defense tech to insurance claims automation.

The broader lesson echoes early web security: when you expose a powerful system to untrusted input (here, coercive language), you must assume compromise. Just as SQL injection taught us to sanitize database queries, LLM interrogability demands we sanitize investigative prompts—not by limiting model capability, but by bounding the human processes that invoke them. As predictive policing tools evolve, the real vulnerability isn’t in the weights of the model—it’s in the assumptions of the officers using them.

Implementation Example: Detecting Suggestive Prompts in Police Workflows

Below is a practical CLI check using grep and a regex pattern to flag high-risk interrogation language in transcribed interviews—a first-line defense for AI-assisted review systems:

```bash
# Scan interview transcripts for Reid Technique language patterns.
# The pattern is double-quoted because it contains an apostrophe ("it's").
# -H and -n prefix each match with its file name and line number.
grep -HinE "(we know|you did|just admit|we have evidence|it's better if)" \
  /var/log/interviews/*.txt > /var/log/ai-risk/suggestive-prompts.log
```

This writes flagged lines to a log that a SIEM such as Elastic Security or Splunk Cloud can monitor and alert on. For real-time screening, the same pattern could be applied as a preprocessing step in front of LLM API endpoints, for example at an NGINX or Envoy proxy.
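To triage many transcripts at once, the same pattern can be used to rank files by how many lines it flags. The following is a hedged sketch: `rank_transcripts` and the demo files are hypothetical, and the sample sentences are invented for illustration rather than taken from real interviews.

```bash
#!/usr/bin/env bash
# Sketch: rank transcripts by the number of lines matching the pattern.
# Double quotes keep the apostrophe in "it's" from ending the string.
PATTERN="(we know|you did|just admit|we have evidence|it's better if)"

# rank_transcripts FILE...: print "count<TAB>file", highest count first.
rank_transcripts() {
  local f
  for f in "$@"; do
    # grep -c prints the number of matching lines (0 when nothing matches).
    printf '%s\t%s\n' "$(grep -icE "$PATTERN" "$f")" "$f"
  done | sort -k1,1nr
}

# Demo on two throwaway files (illustrative text only).
demo_dir="$(mktemp -d)"
printf '%s\n' "Where were you last night?" "Can you describe the car?" \
  > "$demo_dir/neutral.txt"
printf '%s\n' "We know you were there." "It's better if you just admit it." \
  "We have evidence." > "$demo_dir/coercive.txt"
rank_transcripts "$demo_dir"/*.txt
```

The counts are a triage signal, not a finding: a bare phrase such as `you did` also matches `you didn't`, so flagged transcripts still need human review.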

“The goal isn’t to make LLMs immune to suggestion—it’s to make sure humans don’t mistake their compliance for truth.”

As LLMs penetrate deeper into justice-adjacent systems, the forensic community must confront an uncomfortable truth: the most dangerous bias in AI isn’t racial or gendered; it’s the model’s eagerness to please. Until we build interrogation-resistant prompting standards (or better, keep LLMs out of the interrogation room entirely), we risk automating not just inefficiency, but injustice.

Looking ahead, expect regulatory pressure to mount. The EU AI Act’s Annex III now classifies “AI systems used to evaluate the reliability of evidence” as high-risk, a category that could easily encompass LLMs used in statement analysis. Forward-thinking departments are already partnering with digital forensics labs to validate AI-generated outputs against physical evidence chains—because no algorithm should have the final say on what someone did or didn’t do.

*Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.*