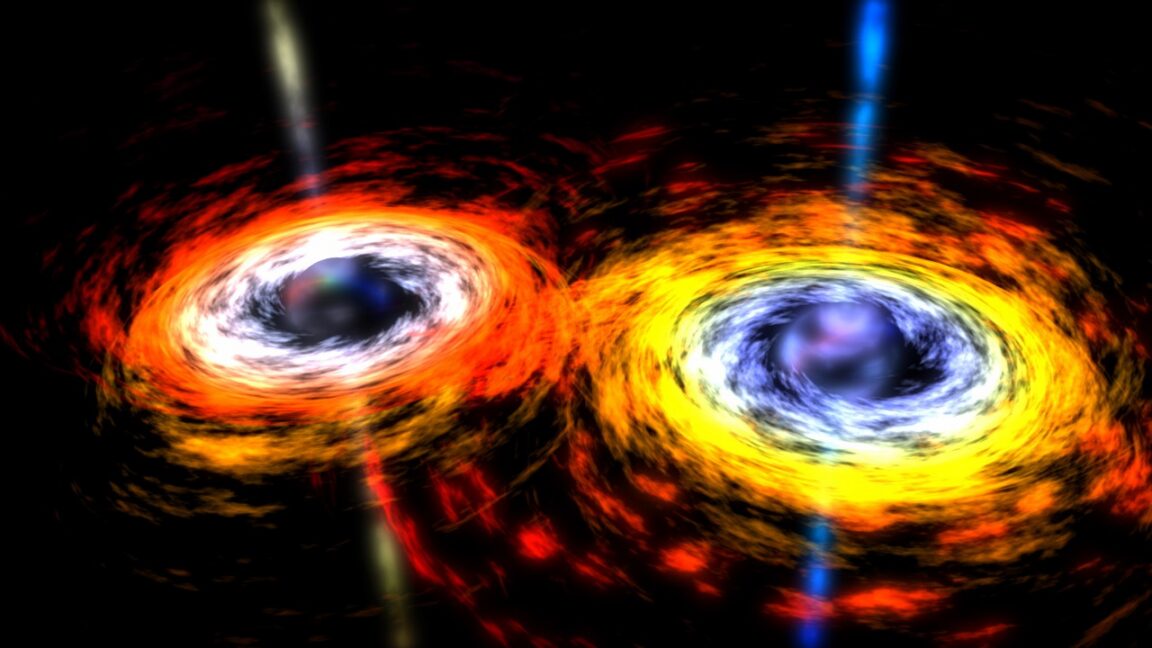

Black hole mergers put limits on star-destroying supernovae

Verifying Stellar Cataclysms Requires Enterprise-Grade Data Integrity

Gravitational wave astronomy is no longer just about capturing signals; it is about securing the computational pipeline that validates them. Recent findings on black hole mergers and pair-instability supernovae rely on massive simulation clusters where data integrity is paramount. If the underlying model is compromised, or the compute stack throttles during critical oxygen fusion calculations, the astrophysical conclusions collapse. In that scenario, the limits of star destruction are defined not just by physics but by the fidelity of the high-performance computing (HPC) infrastructure that analyzes the data.

The Tech TL;DR:

- Computational Bottleneck: Distinguishing first-generation (G1) from second-generation (G2) black hole mergers requires petascale simulation throughput with near-zero latency.

- Security Implication: Scientific data pipelines handling gravitational wave metrics need cybersecurity audit services to prevent model poisoning or data spoofing.

- Deployment Reality: Only 1 percent of mergers are estimated to be G2-G2 collisions, demanding highly specialized filtering algorithms rather than brute-force processing.

The core physics problem involves pair-instability supernovae. In a sufficiently massive star, the core grows hot enough to produce electron-positron pairs, which saps the pressure holding the star up. The resulting collapse ignites runaway oxygen fusion, and the energy release should obliterate the star, leaving no remnant. However, observational data suggests black holes exist above this mass cutoff. The working hypothesis is hierarchical merging: a black hole forms, merges, and its remnant then merges again. The first merger's remnant is a second-generation (G2) object that can exceed the standard mass limit. Confirming this requires sifting through noisy globular cluster data, where merger rates are high but the recoil kicks from mergers frequently eject remnants before they can merge again.
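The generation bookkeeping behind hierarchical merging can be sketched in a few lines. This is a toy model, not the team's method: it assumes a remnant's generation is one more than that of its oldest progenitor, and that components pair at random from a pool in which a hypothetical fraction `g2_fraction` are G2 remnants that survived their ejection kicks. Under that random-pairing assumption, the G2-G2 share scales as the square of the retained fraction, which is one way to see why those collisions are so rare.

```python
def remnant_generation(gen_a: int, gen_b: int) -> int:
    """Toy convention: the remnant is one generation past its oldest progenitor."""
    return max(gen_a, gen_b) + 1


def merger_class(gen_a: int, gen_b: int) -> str:
    """Label a pair such as 'G1-G2', lower generation first."""
    lo, hi = sorted((gen_a, gen_b))
    return f"G{lo}-G{hi}"


def pairing_fractions(g2_fraction: float) -> dict[str, float]:
    """Expected merger-class shares if both components are drawn independently
    from a pool where `g2_fraction` (hypothetical) of black holes are G2."""
    if not 0.0 <= g2_fraction <= 1.0:
        raise ValueError("g2_fraction must be between 0 and 1")
    g1_fraction = 1.0 - g2_fraction
    return {
        "G1-G1": g1_fraction * g1_fraction,
        "G1-G2": 2.0 * g1_fraction * g2_fraction,
        "G2-G2": g2_fraction * g2_fraction,
    }


print(merger_class(1, 1), "->", f"G{remnant_generation(1, 1)}")  # G1-G1 -> G2
print(pairing_fractions(0.1))
```

With `g2_fraction = 0.1`, the G2-G2 share comes out at about 1 percent (0.1 squared). That matches the order of the article's estimate, but the real study's population model is far more detailed than independent random pairing.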

From an infrastructure perspective, this is a data verification challenge. The international team behind this work had to model three collision types: G1-G1, G1-G2, and G2-G2. Because G2-G2 events are rare (an estimated 1 percent of mergers), false positives are a significant risk. In a commercial context, this mirrors the challenge of detecting rare zero-day exploits within high-volume traffic. Just as enterprises deploy cybersecurity auditors and penetration testers to validate threat detection logic, astrophysical consortia must audit their simulation code against observational bias.
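The false-positive risk is a base-rate effect, and a quick Bayes'-rule calculation makes it concrete. Only the 1 percent prior comes from the text; the sensitivity and specificity below are hypothetical values chosen for illustration, not figures from the study.

```python
def precision(prior: float, sensitivity: float, specificity: float) -> float:
    """Positive predictive value: the fraction of flagged events that are real."""
    true_pos = sensitivity * prior                   # real G2-G2 events that get flagged
    false_pos = (1.0 - specificity) * (1.0 - prior)  # other mergers wrongly flagged
    total = true_pos + false_pos
    return true_pos / total if total > 0 else 0.0


# Hypothetical classifier: flags 95% of real G2-G2 events, rejects 95% of the rest.
ppv = precision(prior=0.01, sensitivity=0.95, specificity=0.95)
print(f"Share of G2-G2 flags that are genuine: {ppv:.1%}")
```

Even a classifier that is right 95 percent of the time in both directions would see only about 16 percent of its G2-G2 flags turn out genuine, because the 99 percent of ordinary mergers generate far more false alarms than the 1 percent of real events generate true hits.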

Compute Specifications for Stellar Simulation vs. Verification

Running these models isn’t trivial. The following table breaks down the architectural requirements for simulating these merger events versus the security overhead required to validate the output integrity.

| Component | Simulation Workload (G2-G2) | Verification & Audit Layer |
|---|---|---|
| Compute Architecture | ARM-based HPC Clusters (Gravitational Wave Processing) | x86_64 Secure Enclaves for Hash Verification |
| Throughput | 50+ petaflops (Peak Oxygen Fusion Modeling) | 10 Gbps Encrypted Data Ingestion |
| Latency Target | < 5 ms Inter-node Communication | < 1 ms Integrity Check Response |
| Security Protocol | Proprietary Physics Kernels | AI Cyber Authority Compliance Standards |
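The verification column implies a concrete budget: at 10 Gbps, the audit layer must hash about 1.25 GB of data per second, and each check gets under 1 ms. A rough benchmark, assuming a hypothetical 1 MB batch and a single CPU core, shows how to test one machine against both numbers. Results vary by hardware, and a production layer would likely spread the hashing across cores.

```python
import hashlib
import time

INGEST_GBPS = 10       # "10 Gbps Encrypted Data Ingestion" from the table
CHECK_BUDGET_S = 1e-3  # "< 1 ms Integrity Check Response" from the table


def required_bytes_per_second(gbps: float) -> float:
    """Convert a link rate in gigabits per second to bytes per second."""
    return gbps * 1e9 / 8


def time_sha256(payload: bytes, repeats: int = 20) -> float:
    """Best-of-N wall-clock time, in seconds, to SHA-256 one payload."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        hashlib.sha256(payload).digest()
        best = min(best, time.perf_counter() - start)
    return best


packet = bytes(1_000_000)  # hypothetical 1 MB batch (all zero bytes)
elapsed = time_sha256(packet)
rate = len(packet) / max(elapsed, 1e-12)  # guard against a zero timer reading

print(f"Required ingest rate: {required_bytes_per_second(INGEST_GBPS) / 1e9:.2f} GB/s")
print(f"Single-core SHA-256:  {rate / 1e9:.2f} GB/s")
print(f"1 MB check within 1 ms budget: {elapsed < CHECK_BUDGET_S}")
```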

The reliance on AI to classify these merger events introduces a new attack surface. If the training data for the classification model is tainted, the distinction between a pair-instability supernova and a hierarchical merger becomes blurred. This is where the role of a Director of Security within AI research divisions becomes critical. They are not just protecting corporate IP; they are ensuring the mathematical validity of the models themselves. As federal regulations around AI expand, the intersection of scientific computing and cybersecurity compliance will tighten.
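One practical guard against tainted training data is to freeze a manifest of digests once the labels have been reviewed, then refuse to train on anything that no longer matches. The sketch below assumes records fit in memory as bytes keyed by a hypothetical event ID, and the records themselves are placeholders. It catches tampering that happens after the manifest is created, not poisoning that was already present when it was built.

```python
import hashlib


def digest(record: bytes) -> str:
    """SHA-256 hex digest of one training record."""
    return hashlib.sha256(record).hexdigest()


def audit_training_set(records: dict[str, bytes],
                       manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare incoming records with a trusted manifest of expected digests."""
    tampered = sorted(name for name in records.keys() & manifest.keys()
                      if digest(records[name]) != manifest[name])
    missing = sorted(manifest.keys() - records.keys())
    unexpected = sorted(records.keys() - manifest.keys())
    return {"tampered": tampered, "missing": missing, "unexpected": unexpected}


# Hypothetical records; the manifest is frozen right after label review.
reviewed = {"event_001": b"label=G1-G1", "event_002": b"label=G1-G2"}
manifest = {name: digest(data) for name, data in reviewed.items()}

# Simulated poisoning: one label is flipped and one unvetted record is slipped in.
incoming = {"event_001": b"label=G1-G1", "event_002": b"label=G2-G2",
            "event_999": b"label=G2-G2"}
report = audit_training_set(incoming, manifest)
print(report)
# {'tampered': ['event_002'], 'missing': [], 'unexpected': ['event_999']}
```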

Developers working on gravitational wave data pipelines should treat their input streams as untrusted zones. Implementing checksums and cryptographic signing for data packets originating from detector arrays is standard practice, but often overlooked in academic settings. Below is a simplified Python snippet demonstrating how to verify the integrity of a data batch before feeding it into a merger classification model.

```python
import hashlib
import hmac
import json


def verify_data_integrity(payload, expected_hash):
    """
    Validates the integrity of gravitational wave data packets
    before processing in the simulation cluster.
    """
    # Serialize with sorted keys so identical data always yields identical bytes
    canonical = json.dumps(payload, sort_keys=True).encode()
    computed_hash = hashlib.sha256(canonical).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched
    if not hmac.compare_digest(computed_hash, expected_hash):
        raise ValueError("Data integrity check failed. Potential model poisoning detected.")
    return True


# Example usage within a CI/CD pipeline for scientific models
data_packet = {"merger_type": "G2-G2", "mass": "85 solar masses"}
expected_hash = "a1b2c3d4..."  # SHA-256 hex digest retrieved from secure vault

try:
    verify_data_integrity(data_packet, expected_hash)
    print("Pipeline clear for simulation.")
except ValueError as e:
    print(f"Alert: {e}")
```

This level of rigor is warranted because the cost of an undetected integrity failure in astrophysics is wasted compute cycles and unreliable conclusions; in enterprise security, it can be a breach. The methodologies converge. Organizations handling sensitive computational workloads should consider cybersecurity risk assessment and management services to evaluate their data pipeline resilience. The same principles that protect financial transactions apply to protecting the integrity of scientific discovery.
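The integrity check above compares a plain SHA-256 digest, which catches accidental corruption but not an attacker who can rewrite both a packet and its expected hash. Cryptographic signing, mentioned earlier as standard practice, closes that gap. A minimal sketch, swapping in HMAC-SHA256 (a keyed message authentication code from Python's standard library, not a public-key signature) and assuming the detector and the pipeline share a hypothetical secret key:

```python
import hashlib
import hmac
import json


def canonical_bytes(payload: dict) -> bytes:
    """Serialize deterministically so sender and receiver authenticate identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def sign_packet(payload: dict, key: bytes) -> str:
    """Detector side: compute an HMAC-SHA256 tag over the canonical packet."""
    return hmac.new(key, canonical_bytes(payload), hashlib.sha256).hexdigest()


def verify_packet(payload: dict, tag: str, key: bytes) -> bool:
    """Pipeline side: recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign_packet(payload, key), tag)


key = b"hypothetical-shared-secret"  # in practice, fetched from a secrets manager
packet = {"merger_type": "G2-G2", "mass": "85 solar masses"}
tag = sign_packet(packet, key)

print(verify_packet(packet, tag, key))    # True
tampered = dict(packet, merger_type="G1-G1")
print(verify_packet(tampered, tag, key))  # False
```

Without the key, an attacker cannot produce a valid tag for an altered packet. A public-key scheme such as Ed25519 would avoid distributing the secret to every consumer, at the cost of a third-party dependency.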

“The distinction between a G1 and G2 merger is statistically fragile. Without rigorous data governance, we risk building cosmological models on corrupted inputs. We treat scientific data with the same zero-trust architecture we apply to financial ledgers.”

The confirmation of pair-instability limits depends on our ability to trust the data. As we move toward more autonomous AI-driven discovery, the role of human oversight shifts from calculation to verification. The technology exists to simulate these events, but the governance to secure them is still maturing. For CTOs managing large-scale data initiatives, the lesson is clear: optimize for throughput, but architect for trust.

As the directory expands to cover more AI-driven scientific ventures, we will be listing specialized firms capable of auditing these high-stakes computational environments. Until then, treat your data pipelines as if they are already compromised.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.