Acumera Acquires Scale Computing to Boost Cloud Infrastructure

The 2026 CRN AI 100 list isn’t just another industry popularity contest; it’s a map of where the compute is actually moving. As we hit the April production cycle, the shift from centralized LLM clusters to distributed edge inference is no longer a roadmap item. It is the current deployment reality.

The Tech TL;DR:

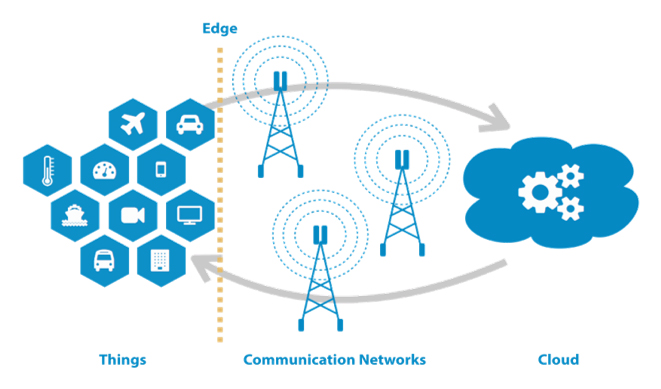

- Edge Shift: Inference is migrating from massive H100 clusters to localized NPUs to solve the “latency tax” and data sovereignty issues.

- Infrastructure Consolidation: The acquisition of Scale Computing by Acumera signals a pivot toward unified edge management over fragmented hyper-converged infrastructure (HCI).

- Hardware Bottleneck: The primary constraint has shifted from raw TFLOPS to thermal dissipation and power delivery at the far edge.

For years, the industry promised that “the edge” was where the magic happened, but the reality was usually a glorified Linux box running a Docker container with high latency. The 2026 landscape is different: we are seeing a hard pivot toward deterministic latency. When you’re running AI-driven industrial automation or real-time medical diagnostics, a 200ms round-trip to the AWS us-east-1 region isn’t just a bottleneck; it’s a system failure. This is why the “Hottest Infrastructure” list is dominated by firms solving the orchestration of containerized workloads across heterogeneous hardware (ARM, RISC-V, and x86).
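The deterministic-latency argument comes down to simple budget arithmetic: network round-trip plus inference time must fit inside the application’s deadline. The sketch below makes that check explicit. `fits_deadline` is a hypothetical helper, and the 50 ms deadline, 30 ms inference time, and 5 ms LAN round-trip are illustrative assumptions; only the 200 ms cloud round-trip comes from the paragraph above.

```bash
# Hypothetical latency-budget check; the figures used below are illustrative.
# Usage: fits_deadline <deadline_ms> <network_rtt_ms> <inference_ms>
# Prints "ok (...)" and returns 0 if rtt + inference fits the deadline,
# otherwise prints "miss (...)" and returns 1.
fits_deadline() {
  local deadline_ms=$1 rtt_ms=$2 infer_ms=$3
  local total_ms=$((rtt_ms + infer_ms))
  if [ "$total_ms" -le "$deadline_ms" ]; then
    echo "ok (${total_ms}ms <= ${deadline_ms}ms)"
  else
    echo "miss (${total_ms}ms > ${deadline_ms}ms)"
    return 1
  fi
}

# Assumed 50 ms control-loop deadline and 30 ms of inference per request:
fits_deadline 50 200 30 || echo "cloud round-trip cannot meet the deadline"
fits_deadline 50 5 30 || echo "LAN hop cannot meet the deadline"
```

With these assumptions, the cloud path misses the deadline (230 ms against 50 ms) regardless of how fast the remote GPUs are, while the on-site path fits (35 ms). That is the practical meaning of “the round-trip is a system failure.”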

The architectural challenge is the “Last Mile” of AI. Deploying a model is straightforward; maintaining SOC 2 compliance and ensuring end-to-end encryption across 10,000 remote nodes is where many CTOs are currently struggling. Many enterprises are finding their internal DevOps teams overwhelmed by the complexity of running Kubernetes at the edge. That creates an urgent need for specialized Managed Service Providers (MSPs) who can handle the lifecycle management of distributed clusters without introducing security regressions.

The Tech Stack & Alternatives Matrix

To understand the 2026 AI 100, we have to look at the collision between traditional HCI (Hyper-Converged Infrastructure) and the new AI-native edge. Acumera’s acquisition of Scale Computing is a prime example of the industry realizing that a “single pane of glass” for management is more valuable than the underlying hypervisor.

Edge Orchestration: The Contenders

When evaluating the infrastructure players on the CRN list, the competition generally breaks down into three architectural philosophies:

| Approach | Primary Focus | Trade-off | Key Player Example |
|---|---|---|---|
| Heavy Edge | Local GPU/NPU clusters; full stack on-site. | High CAPEX; thermal throttling risks. | NVIDIA EGX / Scale Computing |
| Distributed Mesh | Lightweight container orchestration; peer-to-peer. | Complexity in state synchronization. | K3s-based startups / Akamai |
| Hybrid Cloud-Sling | Local caching with cloud-bursting for heavy loads. | Dependency on WAN stability. | AWS Outposts / Azure Arc |

The “Heavy Edge” approach is winning in sectors where data privacy is non-negotiable. The move toward K3s and other lightweight Kubernetes distributions, which the K3s project itself positions for edge and IoT deployments, has allowed these firms to shrink the footprint of the control plane, freeing more system resources for the actual model weights.

“The industry is moving away from ‘Cloud-First’ to ‘Cloud-Optional.’ The goal isn’t to avoid the cloud, but to ensure that the failure of a WAN link doesn’t stop a factory floor from operating its AI-driven quality control.” — Marcus Thorne, Lead Architect at EdgeScale Systems.
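One common way to get the “Cloud-Optional” behavior described in the quote is store-and-forward. Every result is written to a local spool first, so the quality-control loop never waits on the WAN. A separate flush step forwards spooled lines upstream when the link is up and keeps anything that fails for the next attempt. The sketch below is a hypothetical illustration: `SPOOL`, `record_result`, `flush_spool`, and the `upload` stub are made-up names. A real node would replace `upload` with an authenticated call to its own ingest endpoint.

```bash
# Hypothetical store-and-forward spool; names and paths are illustrative only.
SPOOL="${SPOOL:-$(mktemp)}"   # local spool file, one result per line

# Local write: succeeds regardless of WAN state.
record_result() {
  printf '%s\n' "$1" >> "$SPOOL"
}

# Forward each spooled line; lines that fail to upload stay for the next flush.
# upload's stdin is /dev/null so it cannot swallow the spool being read.
flush_spool() {
  local pending line
  pending=$(mktemp)
  while IFS= read -r line; do
    upload "$line" </dev/null || printf '%s\n' "$line" >> "$pending"
  done < "$SPOOL"
  mv "$pending" "$SPOOL"
}

# Stub uplink for illustration: WAN_UP=1 simulates a healthy link.
upload() {
  [ "${WAN_UP:-0}" = "1" ]
}

WAN_UP=0
record_result "line-7 defect=none"
flush_spool   # WAN down: the result stays in the spool
WAN_UP=1
flush_spool   # WAN restored: the spool is drained
```

In this design, local inference and local recording have no dependency on the cloud at all. The WAN affects only how quickly results reach the central platform, never whether the factory floor keeps running.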

Solving the Deployment Bottleneck: From PR to Production

The marketing slides talk about “seamless integration,” but the reality is a nightmare of API versioning and driver mismatches. If you are deploying LLMs to the edge, you aren’t just managing software; you’re managing the hardware abstraction layer. To avoid the “vaporware” trap, developers are increasingly relying on WebAssembly (Wasm) to build portable, sandboxed binaries that run on any SoC with a compatible Wasm runtime.

For those implementing these distributed systems, the first step is usually establishing a secure handshake between the edge node and the central registry. A typical deployment push via a CLI tool for an edge-optimized container would look like this:

```bash
# Deploy a quantized Llama-3.1-8B model to a remote edge node over a secure tunnel
# (edge-deploy is a generic stand-in CLI; --cert-path authenticates the push)
edge-deploy --node-id edge-node-042 \
  --image registry.internal/ai-edge/llama-quantized:v2.1 \
  --env NPU_ACCELERATION=true --limit-mem 16Gi \
  --health-check "/api/v1/ready" \
  --cert-path /etc/ssl/certs/edge-auth.pem
# Verify deployment and latency metrics (plain GET; -s keeps jq's input clean)
curl -s https://edge-node-042.local/metrics | jq '.inference_latency'
```

This level of granular control is necessary because “auto-scaling” at the edge is a myth. You have a fixed amount of silicon and a fixed thermal envelope. If your model exceeds the memory available to the local NPU, you don’t scale up; you crash. This hardware-level fragility is why companies are now hiring infrastructure consultants to perform rigorous capacity planning before they commit to a specific hardware vendor from the CRN list.
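The first pass of that capacity planning is back-of-the-envelope arithmetic. Weight memory is roughly parameter count × bits per weight ÷ 8 bytes, plus headroom for the KV cache and runtime. The sketch below applies that to the `--limit-mem 16Gi` from the deploy command. `fits_memory` is a hypothetical helper. The round 8 billion parameters is an approximation for an 8B model, and the 2 GiB of headroom is an assumed figure, not a measurement.

```bash
# Rough memory-fit estimate; parameter count and overhead are assumed inputs.
# Usage: fits_memory <params> <bits_per_weight> <overhead_bytes> <limit_bytes>
# Prints the estimate in MiB (rounded down) and returns 0 only if it fits.
fits_memory() {
  local params=$1 bits=$2 overhead=$3 limit=$4
  local weights=$((params * bits / 8))   # bytes for the weights alone
  local total=$((weights + overhead))    # plus KV-cache/runtime headroom
  echo "need ~$((total / 1048576)) MiB of $((limit / 1048576)) MiB"
  [ "$total" -le "$limit" ]
}

GIB=$((1024 * 1024 * 1024))
# 4-bit quantized vs FP16, each with an assumed 2 GiB of headroom:
fits_memory 8000000000 4 $((2 * GIB)) $((16 * GIB)) && echo "4-bit fits"
# prints "need ~5862 MiB of 16384 MiB", then "4-bit fits"
fits_memory 8000000000 16 $((2 * GIB)) $((16 * GIB)) || echo "FP16 does not fit"
# prints "need ~17306 MiB of 16384 MiB", then "FP16 does not fit"
```

The FP16 weights alone (about 14.9 GiB) would squeeze under the 16 GiB limit. The headroom is what tips the total over. This is why quantization and an explicit overhead budget matter more at the edge than raw TFLOPS.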

The Security Blast Radius: Edge Vulnerabilities

Distributed infrastructure dramatically expands the attack surface: every edge node is a potential entry point. While Ars Technica has highlighted the rise of “physical access” attacks on edge hardware, the bigger threat is the software supply chain. A compromised container image pushed to 5,000 edge nodes is a catastrophic event.
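A basic guard against a tampered artifact is to pin its content digest and refuse to deploy anything that does not match. Container registries support this natively by letting you reference an image as `image@sha256:<digest>` instead of a mutable tag. The shell sketch below applies the same idea to a file on disk, such as a model weights file or an exported image tarball, using `sha256sum` from GNU coreutils. `verify_digest` is a hypothetical helper. A digest check only proves the bytes have not changed since the digest was recorded. It does not replace signing artifacts at build time.

```bash
# Hypothetical pre-deploy guard: compare a file's SHA-256 to a pinned value.
# Usage: verify_digest <file> <expected_sha256_hex>
verify_digest() {
  local file=$1 expected=$2 actual
  actual=$(sha256sum "$file" | cut -d' ' -f1)
  if [ "$actual" = "$expected" ]; then
    echo "digest ok: $file"
  else
    echo "digest MISMATCH: $file (got $actual)" >&2
    return 1
  fi
}

# Example gate (file name and PINNED_SHA256 are placeholders):
# verify_digest ./llama-quantized.tar "$PINNED_SHA256" && edge-deploy ...
```

Failing closed is the design choice that matters here. A mismatch stops the rollout at the first node, so a poisoned artifact never reaches the other 4,999.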

To mitigate this, the industry is pivoting toward Zero Trust Architecture (ZTA) and mTLS (mutual TLS) for every internal communication; a simple VPN is no longer sufficient. Enterprise IT departments are now engaging cybersecurity auditors and penetration testers to ensure that their edge orchestration layer doesn’t have a “god-mode” vulnerability that could let an attacker pivot from a single remote sensor to the core corporate data center.
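In mTLS, both ends of a connection present certificates that chain to a CA the other side trusts. A node that cannot present a valid certificate is refused before any application traffic flows. The sketch below uses the `openssl` command-line tool to create a throwaway private CA, issue a certificate for one edge node, and verify the chain. The CA name, node name, and one-day lifetime are hypothetical. A production fleet would issue and rotate these certificates through its PKI or service mesh rather than through ad hoc commands.

```bash
# Throwaway PKI for illustration only; names and lifetimes are hypothetical.
# Usage: make_node_cert <output_dir> <node_name>
make_node_cert() {
  local dir=$1 node=$2
  # 1. Self-signed private CA that both sides of the connection will trust.
  openssl req -x509 -newkey rsa:2048 -nodes -days 1 \
    -keyout "$dir/ca.key" -out "$dir/ca.crt" -subj "/CN=edge-fleet-ca" 2>/dev/null
  # 2. Private key and certificate signing request for the edge node.
  openssl req -newkey rsa:2048 -nodes \
    -keyout "$dir/$node.key" -out "$dir/$node.csr" -subj "/CN=$node" 2>/dev/null
  # 3. The CA signs the request, producing the node's certificate.
  openssl x509 -req -in "$dir/$node.csr" -days 1 \
    -CA "$dir/ca.crt" -CAkey "$dir/ca.key" -CAcreateserial \
    -out "$dir/$node.crt" 2>/dev/null
  # 4. Confirm the node certificate chains to the CA (prints "<path>: OK").
  openssl verify -CAfile "$dir/ca.crt" "$dir/$node.crt"
}

workdir=$(mktemp -d)
make_node_cert "$workdir" edge-node-042
```

Once issued, a client would present its own certificate and trust only the fleet CA, for example with curl’s `--cert`, `--key`, and `--cacert` options. The server must also be configured to require and verify client certificates. That server-side check is what makes the TLS mutual.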

“We are seeing a surge in ‘side-channel’ attacks targeting the NPU’s power consumption patterns to leak model weights. Encryption at rest is great, but encryption in use is the new frontier.” — Sarah Jenkins, Senior Researcher at the Open Security Foundation.

Looking at the CVE vulnerability database, the trend is clear: the most critical flaws are appearing in the management APIs of the very “simplified” edge platforms praised in press releases. The irony of the 2026 AI 100 is that the more “invisible” the infrastructure becomes, the more dangerous the underlying vulnerabilities are.

The trajectory is clear: the winners of the next three years won’t be the companies with the fastest chips, but those who can provide the most stable, secure, and observable orchestration layer. As we move toward a world of “ambient compute,” the ability to debug a failing node in a remote warehouse from a desk in San Francisco will be the ultimate competitive advantage. If your current stack can’t handle that, it’s time to stop reading the hype and start auditing your architecture.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.