The MCP Standard: Why Enterprise AI Finally Has a Secure Interface

The industry has spent the last twenty-four months hyping autonomous agents while ignoring the plumbing required to make them function. An AI model without secure, standardized access to enterprise data is just an expensive chatbot hallucinating in a vacuum. Anthropic’s Model Context Protocol (MCP) attempts to solve the integration bottleneck, but adoption isn’t about feature sets; it’s about reducing the attack surface when connecting LLMs to critical infrastructure. As we move into Q2 2026, the question isn’t whether to integrate agents, but how to do it without inviting a data exfiltration incident.

The Tech TL;DR:

- MCP replaces fragile custom API wrappers with a standardized JSON-RPC interface for AI data access.
- Security posture improves by isolating agent permissions via dedicated servers rather than direct database credentials.
- Interoperability reduces vendor lock-in, allowing agents to switch underlying models without rewriting integration logic.

Enterprise adoption of AI agents has stalled not because the models lack intelligence, but because the connection layer is brittle. Historically, engineering teams built a custom integration for every data source (Salesforce, Snowflake, internal wikis), creating a maintenance nightmare and inconsistent security policies. MCP abstracts this layer. It functions as a universal adapter, allowing clients to connect to any data source that exposes an MCP server. This shifts the engineering burden from building unique connectors to maintaining secure MCP servers.

Architectural Shift: Custom Wrappers vs. Protocol Standardization

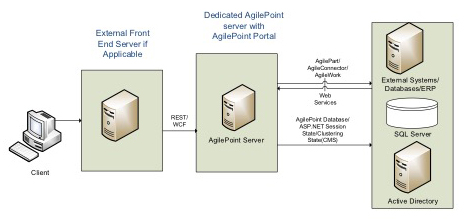

The legacy approach to AI integration involved hardcoding API keys and writing specific parsing logic for each data source. This introduced significant latency and security debt. MCP uses a client-server architecture in which the AI application hosting the model acts as the client and each data source is exposed through an MCP server. Communication typically occurs over stdio for local connections or over HTTP with SSE (Server-Sent Events) for remote connections, with JSON-RPC 2.0 as the message format. Newer revisions of the specification define a Streamable HTTP transport that supersedes the original HTTP+SSE transport, so check which one your SDK version targets.
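To make the message format concrete, here is a minimal sketch of a JSON-RPC 2.0 request and response handler using only the Python standard library. The helper names `make_request` and `parse_response` are illustrative assumptions, not part of any MCP SDK; `tools/call` is an MCP method name, and the tool name is borrowed from the server example later in this article.

```python
import json
from itertools import count
from typing import Optional

_next_id = count(1)  # request ids should be unique within a session


def make_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request as one line of JSON (hypothetical helper)."""
    message = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    # json.dumps escapes newlines inside strings, so the output is a single
    # line, which suits newline-delimited framing over stdio.
    return json.dumps(message, separators=(",", ":"))


def parse_response(line: str) -> dict:
    """Return the result payload, or raise if the server reported an error."""
    message = json.loads(line)
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    if "error" in message:
        err = message["error"]
        raise RuntimeError(f"JSON-RPC error {err['code']}: {err['message']}")
    return message["result"]


if __name__ == "__main__":
    print(make_request(
        "tools/call",
        {"name": "calculate_churn_risk", "arguments": {"score": 0.6}},
    ))
```

In practice an MCP SDK builds and parses these envelopes for you; the sketch only shows what travels over the wire.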

From a latency perspective, local stdio connections add little overhead because messages never leave the host; the figure often quoted is under 5ms per request, though this depends on the server's own work. Remote connections depend on network topology, but a persistent session can authenticate once at setup rather than repeating a full handshake on every request. The protocol handles resource listing, prompt templates, and tool invocation uniformly. This standardization means engineering teams stop reinventing the wheel for every new SaaS integration.
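The uniformity claim can be illustrated with a toy dispatcher: one routing table serves resource listing, prompt listing, and tool invocation. This is a sketch under stated assumptions, not an MCP implementation. The method names are MCP's, but the in-memory catalog, the `handle` function, and the simplified result shapes are invented for illustration.

```python
from typing import Any, Callable, Dict

# Hypothetical in-memory registrations standing in for a real server's catalog.
RESOURCES = [{"uri": "db://customers/CUST-1001", "name": "customer"}]
PROMPTS = [{"name": "summarize_account"}]
TOOLS: Dict[str, Callable[..., Any]] = {
    "calculate_churn_risk": lambda score: score * 0.85,
}


def _call_tool(params: dict) -> dict:
    # Simplified result shape; the real protocol returns structured content.
    tool = TOOLS[params["name"]]
    return {"value": tool(**params.get("arguments", {}))}


HANDLERS: Dict[str, Callable[[dict], dict]] = {
    "resources/list": lambda params: {"resources": RESOURCES},
    "prompts/list": lambda params: {"prompts": PROMPTS},
    "tools/call": _call_tool,
}


def handle(message: dict) -> dict:
    """Route any request through the same table and reply envelope."""
    reply: Dict[str, Any] = {"jsonrpc": "2.0", "id": message.get("id")}
    handler = HANDLERS.get(message.get("method"))
    if handler is None:
        # -32601 is the standard JSON-RPC "Method not found" code.
        reply["error"] = {"code": -32601, "message": "Method not found"}
    else:
        reply["result"] = handler(message.get("params", {}))
    return reply
```

Because every capability shares one envelope and one routing path, adding a new data source means registering entries, not writing a new parser.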

| Feature | Legacy Custom Integration | Model Context Protocol (MCP) |
|---|---|---|
| Auth Mechanism | Hardcoded API Keys / OAuth per app | Centralized Server-Side Auth (OIDC/OAuth) |
| Latency Overhead | Variable (Parsing logic bottlenecks) | Consistent (JSON-RPC standard) |
| Maintenance | High (Breaks on API updates) | Low (Server updates isolate changes) |
| Security Scope | Broad (Agent often has full DB access) | Granular (Server enforces resource limits) |

Security teams are rightfully cautious. Connecting an LLM to internal databases creates a new vector for prompt injection and data leakage. MCP mitigates this by ensuring the AI model never sees the underlying database credentials. The MCP server acts as a gatekeeper, enforcing access controls before any data reaches the context window. Yet, configuring these servers requires rigorous validation. Organizations scaling this architecture should engage cybersecurity auditors to review MCP server configurations, ensuring that tool definitions don’t inadvertently expose write capabilities to read-only agents.
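One way to catch the misconfiguration described above is an automated check over tool definitions before deployment. The following is a minimal sketch, assuming a team tags each tool with a `writes_data` flag during review; `ToolDef`, `AgentProfile`, and `find_violations` are hypothetical names, not part of MCP or any SDK.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class ToolDef:
    name: str
    writes_data: bool  # hypothetical flag set by a human reviewer


@dataclass(frozen=True)
class AgentProfile:
    name: str
    read_only: bool
    allowed_tools: FrozenSet[str]


def find_violations(
    agents: Iterable[AgentProfile], tools: Iterable[ToolDef]
) -> List[Tuple[str, str, str]]:
    """List (agent, tool, reason) for read-only agents granted risky tools."""
    by_name = {tool.name: tool for tool in tools}
    violations = []
    for agent in agents:
        if not agent.read_only:
            continue
        for tool_name in sorted(agent.allowed_tools):
            tool = by_name.get(tool_name)
            if tool is None:
                # A grant that points at an unregistered tool is also suspect.
                violations.append((agent.name, tool_name, "unknown tool"))
            elif tool.writes_data:
                violations.append((agent.name, tool_name, "write-capable tool"))
    return violations


if __name__ == "__main__":
    tools = [ToolDef("calculate_churn_risk", False), ToolDef("update_customer", True)]
    agents = [AgentProfile("reporting-bot", True,
                           frozenset({"calculate_churn_risk", "update_customer"}))]
    for violation in find_violations(agents, tools):
        print(violation)
```

Running a check like this in CI would not replace an external audit, but it turns one class of review finding into a failing build.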

The Security Imperative and Compliance Reality

The hiring trends in 2026 reflect the urgency of this security layer. Major financial institutions like Visa are actively recruiting for Sr. Director, AI Security roles, signaling that payment processors view AI integration as a critical risk domain. Similarly, Microsoft’s AI division is staffing up for Director of Security positions specifically focused on AI infrastructure. This isn’t just about model safety; it’s about securing the pipes.

MCP’s design philosophy aligns with zero-trust principles. The agent requests resources, but the server decides what to grant. This separation of concerns is vital for SOC 2 compliance. If an agent is compromised, the blast radius is limited to the specific MCP server’s permissions rather than the entire enterprise network. Nevertheless, implementation errors remain a risk. A misconfigured MCP server could expose sensitive endpoints to unauthorized agents.
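The "agent requests, server decides" model can be sketched as a deny-by-default grant table. This is an illustration only: the `GRANTS` mapping, the agent names, and `is_allowed` are assumptions, and a production server would derive the agent identity from an authenticated session, not a plain string.

```python
from fnmatch import fnmatchcase
from typing import Dict, List

# Hypothetical grant table: each agent identity maps to URI patterns it may read.
GRANTS: Dict[str, List[str]] = {
    "support-agent": ["db://customers/*"],
    "finance-agent": ["db://invoices/*", "db://customers/*"],
}


def is_allowed(agent_id: str, uri: str) -> bool:
    """Deny by default: unknown agents and unmatched URIs get nothing."""
    patterns = GRANTS.get(agent_id, [])
    # Note: fnmatch's "*" also matches "/" so a pattern covers nested paths.
    return any(fnmatchcase(uri, pattern) for pattern in patterns)


if __name__ == "__main__":
    print(is_allowed("support-agent", "db://customers/CUST-1001"))
    print(is_allowed("support-agent", "db://invoices/2026-04"))
```

If the support agent is compromised, the grant table bounds what it can reach: customer records, but nothing under `db://invoices/`.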

“Standardization reduces the surface area for errors, but it concentrates risk on the server implementation. You need to treat an MCP server with the same security rigor as a public-facing API gateway.” — Senior Security Architect, AI Cyber Authority

For enterprises navigating this transition, the focus must shift from model selection to infrastructure hardening. Relying solely on vendor promises is insufficient. IT departments should partner with specialized managed service providers who understand the nuances of AI orchestration layers. These partners can assist in deploying monitoring tools that track MCP token usage and detect anomalous data access patterns.
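As a rough illustration of what detecting anomalous access patterns might involve, the sketch below flags an agent whose request count inside a sliding time window exceeds a threshold. The `AccessMonitor` class and its thresholds are hypothetical; real monitoring would feed on server logs and use richer signals than request rate.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class AccessMonitor:
    """Flag agents that exceed max_requests within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, agent_id: str, timestamp: float) -> bool:
        """Record one access; return True if the agent is now over the limit."""
        events = self._events[agent_id]
        events.append(timestamp)
        # Drop accesses that have aged out of the window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_requests


if __name__ == "__main__":
    monitor = AccessMonitor(max_requests=3, window_seconds=60.0)
    for t in (0.0, 1.0, 2.0, 3.0):
        print(t, monitor.record("reporting-bot", t))
```

Timestamps are passed in explicitly so the logic can be replayed against historical logs as well as live traffic.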

Implementation: Deploying a Secure MCP Server

Developers need to move beyond theoretical adoption and start shipping secure implementations. Below is a baseline example of initializing an MCP server using Python, ensuring that resource access is explicitly defined rather than implicitly granted. This snippet demonstrates the strict typing and resource registration required to maintain control.

```python
from mcp.server.fastmcp import FastMCP

# Initialize the MCP server with an explicit name
mcp = FastMCP("SecureDataConnector")


def fetch_from_secure_vault(customer_id: str) -> str:
    """Placeholder for the data-access helper, which this article leaves undefined."""
    raise NotImplementedError("connect this to your credentialed data store")


# The URI template parameter must match the function argument name.
@mcp.resource("db://customers/{customer_id}")
def get_customer(customer_id: str) -> str:
    """Retrieve customer data. Access controlled via server-side auth middleware."""
    # Validate the ID format before touching the data store
    if not customer_id.startswith("CUST-"):
        raise ValueError("Invalid ID format")
    return fetch_from_secure_vault(customer_id)


@mcp.tool()
def calculate_churn_risk(score: float) -> float:
    """Tool exposed to the AI agent. No direct DB write access granted."""
    return score * 0.85


if __name__ == "__main__":
    mcp.run()
```

This code illustrates the principle of least privilege. The agent can reach customer data only through the get_customer resource, which validates its input before any lookup, and the calculate_churn_risk tool is a pure computation with no write path. No database connection string is exposed to the client. The authentication middleware mentioned in the docstring is not shown here and must be configured separately. To maintain this posture in production, teams should engage software development agencies specializing in AI security to conduct code reviews specifically for MCP server logic.

Ecosystem Velocity and Vendor Lock-in

The value of MCP compounds as more providers adopt the standard. Currently, the ecosystem is fragmented, but the trajectory points toward MCP becoming the TCP/IP of AI agent communication. Early adopters gain a strategic advantage by decoupling their data layer from specific model providers. If a better model emerges next quarter, swapping the client requires minimal engineering effort because the data interface remains constant.
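The decoupling argument can be sketched in a few lines: if the data access function stays fixed, swapping the model is a one-argument change. Everything here is hypothetical, including the `ModelClient` protocol, the two vendor classes, and the stubbed `read_resource`, which stands in for a call through an MCP client.

```python
from typing import Callable, Protocol


class ModelClient(Protocol):
    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def answer(
    model: ModelClient,
    read_resource: Callable[[str], str],
    uri: str,
    question: str,
) -> str:
    """Fetch context through the fixed data interface, then ask any model."""
    context = read_resource(uri)
    return model.complete(f"Context: {context}\nQuestion: {question}")


def read_resource(uri: str) -> str:
    # Stub standing in for a resource read via an MCP server.
    return f"record for {uri}"


if __name__ == "__main__":
    for model in (VendorAModel(), VendorBModel()):
        print(answer(model, read_resource, "db://customers/CUST-1001", "Churn risk?"))
```

The integration code in `answer` never changes between vendors; only the object passed as `model` does.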

However, skepticism is warranted. Standards often stall when major players decide to build walled gardens. Monitoring the commitment of hyperscalers to open protocols is essential. For now, MCP offers the most viable path to interoperable, secure AI agents. The technology is shipping, the specs are public on GitHub, and the security model holds up under scrutiny.

As we push these systems into production, the focus must remain on the integrity of the connection layer. AI agents will become ubiquitous, but only those built on secure, standardized protocols like MCP will survive the inevitable regulatory crackdowns coming in late 2026. The infrastructure must be ready before the agents are unleashed.

Disclaimer: The technical analyses and security protocols detailed in this article are for informational purposes only. Always consult with certified IT and cybersecurity professionals before altering enterprise networks or handling sensitive data.