SANTA CLARA, CA – NVIDIA marked two decades of its CUDA platform at GTC 2026 while also showcasing advances in quantum computing and data processing, signaling a strategy that extends well beyond its traditional focus on graphics processing. The celebration of CUDA’s 20th anniversary traced the platform’s evolution from a niche bet on parallel computing to a foundational technology for modern AI and scientific computing, now used by more than 6 million developers.

At a panel discussion on Wednesday, NVIDIA CUDA Architect Stephen Jones and researchers from Jump Trading, Meta Superintelligence Labs, and NVIDIA reflected on CUDA’s origins. Paulius Micikevicius, a software engineer at Meta Superintelligence Labs, recalled how hard it was at first to win acceptance for GPU computing, saying that they “had to move and beg them to consider using GPUs.”

The early challenges of adopting GPUs were vividly illustrated by Wen-mei Hwu, senior distinguished research scientist and senior research director at NVIDIA, who recounted building a 200-GPU system at the University of Illinois Urbana-Champaign. When the GPU boards arrived without a chassis, Hwu and his students built the system on wooden frames. The makeshift machine ranked No. 3 on the Green500 list, demonstrating the energy-efficiency potential of GPUs.

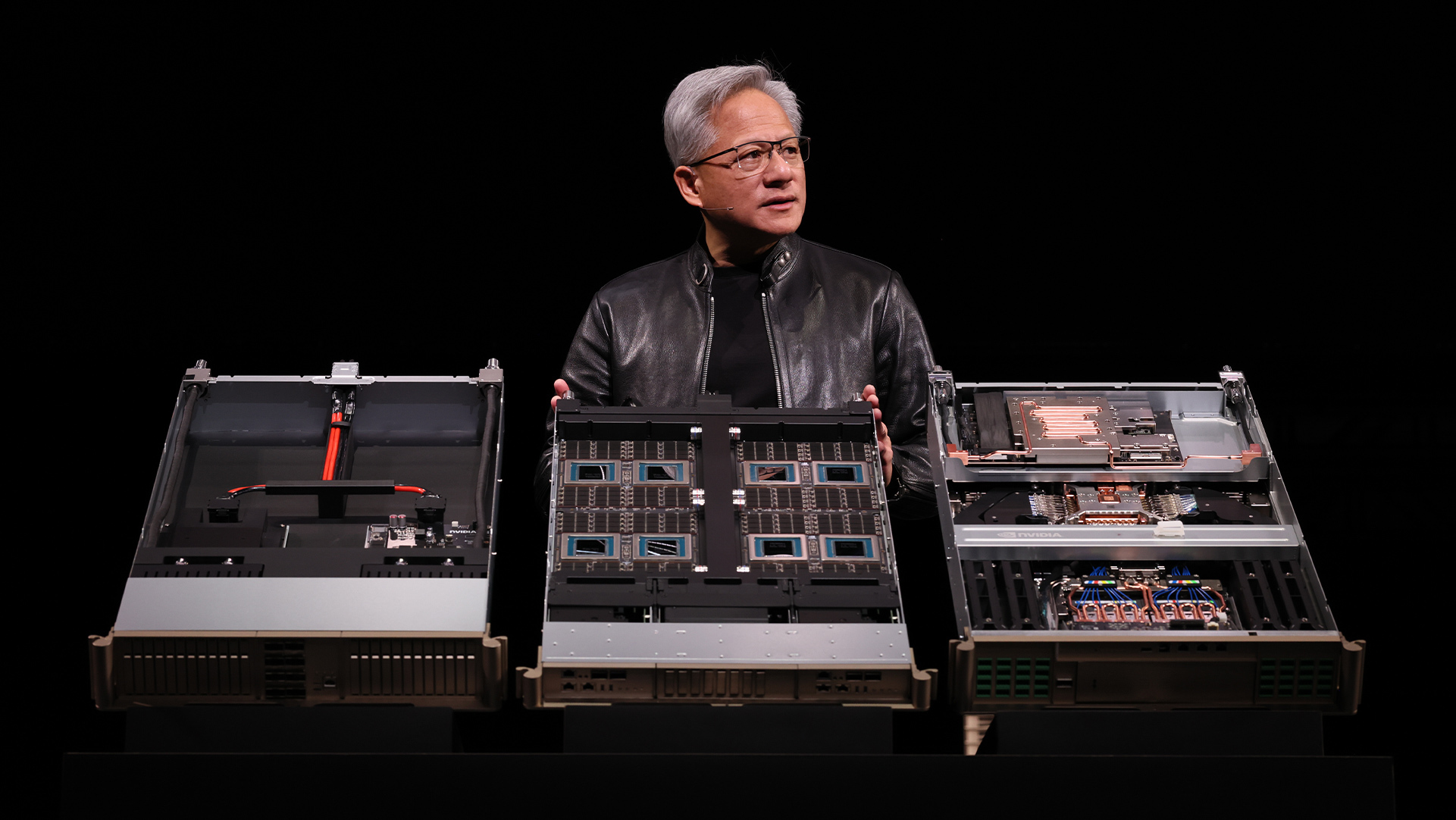

NVIDIA is positioning desktop AI systems such as the DGX Spark as crucial tools for prototyping and early development, according to Kate Clark, distinguished devtech engineer at NVIDIA. “As long as you have that capability to do that initial exploration and something that fits on your desk or your lap, that’s the critical thing,” Clark said, adding that CUDA will remain pervasive.

Beyond CUDA’s anniversary, NVIDIA announced advances in data processing with its cuDF and cuVS libraries: cuDF accelerates DataFrame processing of structured data, while cuVS accelerates vector search over unstructured data. Built on CUDA-X, both libraries are being adopted by leading data platforms, delivering up to 5x faster processing while reducing costs. Google Cloud, Snap, IBM, and Dell are among the companies integrating cuDF and cuVS into their platforms.

Snap, which serves more than 946 million active users, reported a 76% reduction in daily data processing costs using cuDF on Google Kubernetes Engine (GKE), allowing it to analyze 10 petabytes of data within a three-hour window. Saral Jain, chief information officer of Snap, said the collaboration with NVIDIA and Google Cloud “helps us innovate faster for more than a billion Snapchatters worldwide.”

In early experiments with its Order-to-Cash data mart on IBM watsonx.data and NVIDIA cuDF, Nestlé ran workloads five times faster at 83% lower cost. Chris Wright, chief information and digital officer of Nestlé, noted the potential to “refresh global operations data in a few minutes and at reduced cost.” Dell’s AI Data Platform, which also features accelerated data engines, aims to make multimodal data AI-ready in hours rather than days, according to Dell chairman and CEO Michael Dell.

NVIDIA also announced the public availability of NVQLink, a low-latency, high-throughput interconnect linking quantum processors to GPU supercomputers, accessible through a new API called cudaq-realtime. Dell is among the first companies to offer products based on NVQLink. Leading U.S. national laboratories, including Pacific Northwest National Laboratory and Lawrence Berkeley National Laboratory, as well as quantum computing companies Quantinuum, Infleqtion, and Q-CTRL, have adopted NVQLink and report significant reductions in decoding and calibration latency. Anyon Computing and SDT have also unveiled an NVQLink quantum-GPU system at a Korean commercial data center.

NVIDIA launched cuEST, a new CUDA-X library designed to accelerate quantum chemistry calculations for semiconductor design. Initial adopters include Applied Materials, Samsung, Synopsys, and TSMC. The library aims to address the computational bottleneck in simulating the quantum mechanics of next-generation chip designs, enabling more efficient material modeling and accelerating semiconductor innovation.
